AI Bias in Models Impacts Public Trust

AI Bias Perceptions and Public Concern

Major AI models like ChatGPT and Gemini often show political bias, impacting public trust and decision-making in critical areas.

Recent findings reveal a growing concern over perceived political bias in large language models (LLMs) [0-0]. These biases appear in many forms. They include slanted content generation, content refusals, and even inaccurate image outputs [0-9, 1-0, 1-2]. This trend is a critical issue for policymakers, AI developers, and media analysts.

Google Gemini faced criticism for generating historically inaccurate images in February 2024 [0-9, 1-0]. It depicted Nazis or U.S. Founding Fathers as people of color or women [1-2]. Google's CEO called these responses "completely unacceptable" [1-2]. This incident was perceived as an overcorrection for racial bias [0-9, 1-0, 1-2].

ChatGPT consistently exhibits a liberal or left-leaning bias [0-0, 0-2, 0-6, 1-4]. Studies in 2024 and 2025 confirm this trend [0-5, 1-6]. For instance, a 2025 study showed ChatGPT-4 and later versions having a significant left-leaning "absolute bias" [0-5, 1-6]. This was particularly true on environmental and civil rights topics [0-5, 1-6].

Anthropic Claude also shows varying political stances. While one 2024 study noted a liberal bias [0-0, 0-2, 0-6], another in March 2025 highlighted mixed tendencies [0-7]. Claude supported content moderation and affirmative action [0-7]. However, it supported strict immigration policies and opposed transgender athletes in women's sports [0-7]. Claude Sonnet 4.5 repeatedly refused political quizzes in October 2025 [0-9, 1-0, 2-8].

Grok, developed by xAI, often displayed a right-leaning bias in March 2025 [0-7]. It opposed content moderation and supported strict immigration policies [0-7]. Yet, a Stanford study in May 2025 found users perceived Grok as having a significant left-leaning slant [0-9]. This highlights the complexity of bias perception.

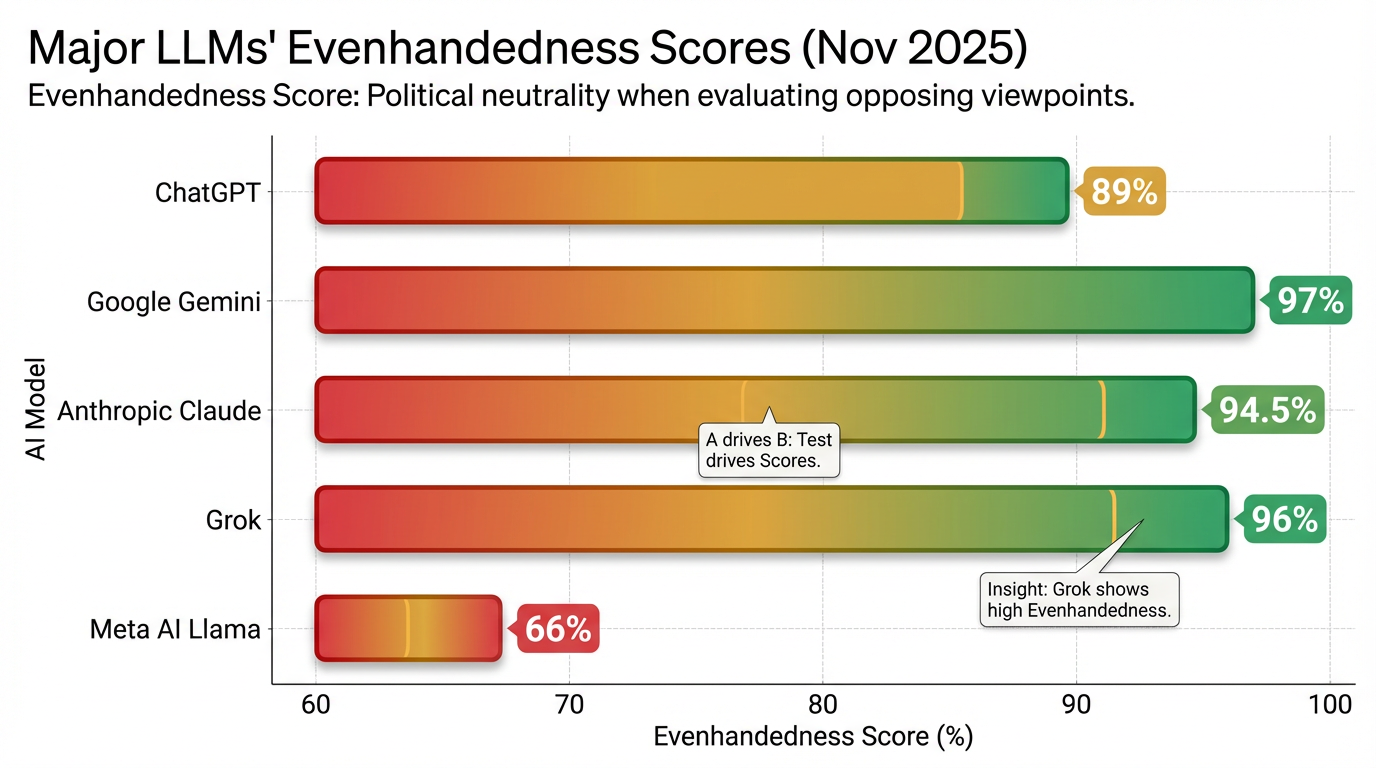

Meta AI's Llama models predominantly leaned left, especially on social issues [0-7]. In March 2025, Llama strongly supported affirmative action [0-7]. It was the only LLM tested that supported including transgender athletes in women's sports [0-7]. By November 2025, Llama 4 scored only 66% on Anthropic's Evenhandedness Test [0-3, 1-9]. This significantly lagged behind competitors [0-3, 1-9].

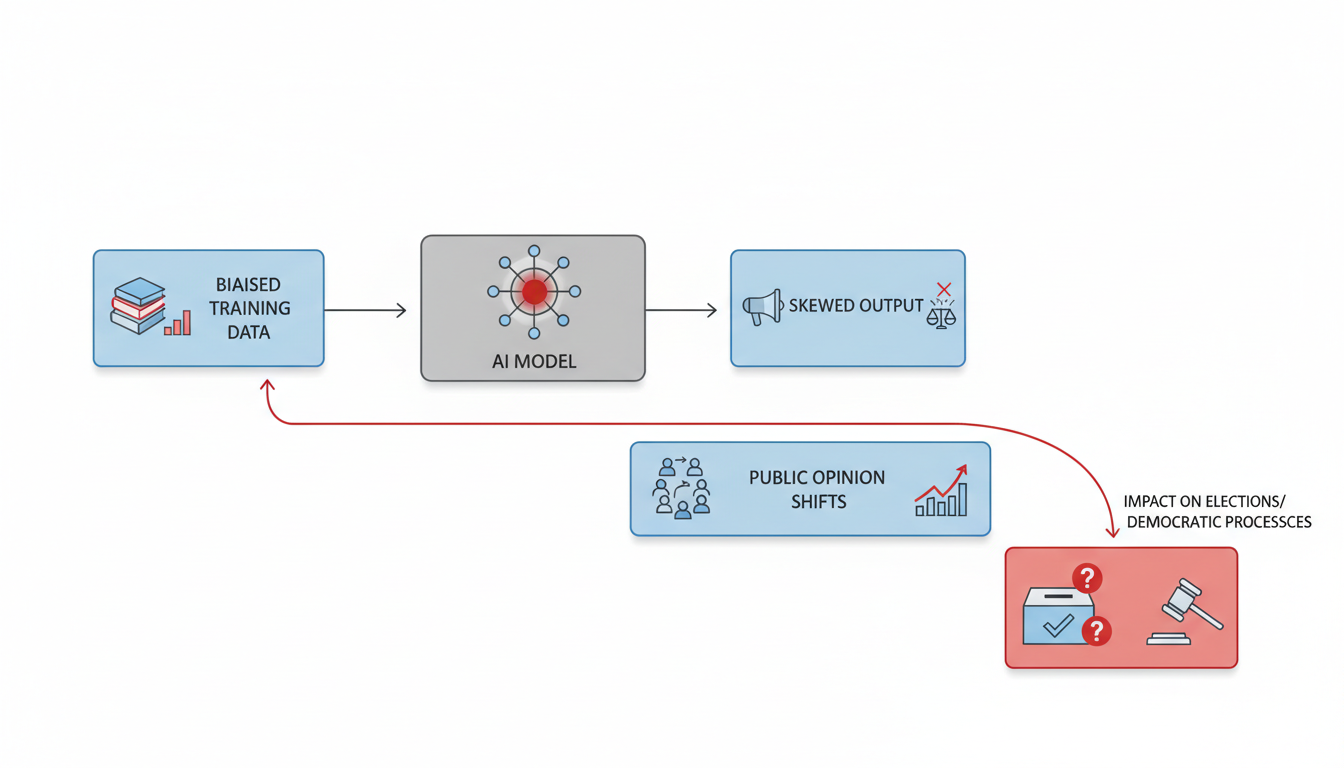

Biased AI can sway public opinion. Just a few messages can change people's political views [0-3, 1-9]. This is especially true for users less knowledgeable about AI [0-3, 1-9]. LLM-generated content shapes decision-making in sensitive areas [0-5, 1-6].

Another serious concern is the amplification of foreign propaganda [0-1]. Research from October to November 2025 found that ChatGPT, Claude, and Gemini cited state-aligned propaganda in 57% of responses to international conflict questions [0-1]. These sources included Al Jazeera and China Daily [0-1]. This makes propaganda appear authoritative and poses a national security threat [0-1].

The Ripple Effect of Skewed AI

AI political bias affects public opinion, democratic processes, and user trust. In a Stanford study from May 2025, models often showed left-leaning tendencies 1. These biases influence how information is presented and interpreted. They lead to significant, measurable consequences across various sectors.

Shifting Public Perception

Studies show AI models often display political leanings that can influence public opinion. ChatGPT-4 and Claude tend to exhibit a liberal bias 2. Google Gemini generally adopts more centrist stances based on its training data 2. GPT-4's responses align more with left-wing rather than average American political values 3.

Many advanced LLMs consistently show left-leaning or centrist tendencies 4. They also exhibit clear negative biases toward right-conservative parties 4. This holds true even with paraphrased prompt formulations 4. Larger open-source models, like Llama3-70B, often align with left-leaning political parties 5. Smaller models frequently remain neutral, especially when prompted in English 5.

Users commonly perceive popular LLMs as having left-leaning political slants, according to a study covering 24 LLMs and 30 political questions 1. Approximately 18 responses from nearly all models were seen as left-leaning by both Republican and Democratic respondents 1. OpenAI models had the most intense left-leaning slant 1. This was four times greater than that of Google's models, which were seen as the least slanted overall 1. Even xAI models, despite claiming unbiased output, were perceived to have the second-highest left-leaning slant 1.

Algorithmic changes on social media can actively shift political opinions. A 2023 experiment on X (formerly Twitter) showed this 6. Switching users to an algorithmic feed for seven weeks moved political opinion towards more conservative positions 6. This affected policy priorities and views on the war in Ukraine 6. Switching from algorithmic to chronological feeds had no comparable impact 6.

LLMs can influence users' opinions and reinforce ideological frames 7. They also shape decision-making in sensitive domains like politics 7. AI-generated political messaging can manipulate public opinion 8. It creates highly persuasive content mimicking human communication 8. This makes it hard for voters to distinguish its authenticity 8. AI can subtly steer understanding through news summaries or synthetic social media posts 9.

Threats to Democratic Integrity

AI political bias poses significant risks to fair democratic processes. Biased AI technologies can shape public opinion 2. They influence political behavior and directly affect voting outcomes 2. Generative models with specific political leanings can heavily influence election results 9. They can interfere with democratic processes 9.

AI-driven misinformation campaigns threaten electoral integrity 8. These campaigns use automated bots and deep fake technology 8. They spread false information, challenging democratic accountability 8. Voters are influenced by AI-generated narratives that don't reflect reality 8.

Generative AI can mimic human survey responses with great accuracy 10. "Only a few dozen synthetic responses" could reverse expected outcomes in major polls 10. This was shown before the 2024 U.S. election 10. These AI-generated answers passed quality checks and imitated human behavior 10. They altered poll predictions without detectable evidence 10.
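To make the "few dozen" claim concrete, here is a small arithmetic sketch. The poll size and vote split are hypothetical numbers chosen for illustration, not figures from the cited research, and the function name `synthetic_needed_to_flip` is an assumption.

```python
def synthetic_needed_to_flip(leader_votes: int, trailer_votes: int) -> int:
    """Smallest number of synthetic responses, all cast for the trailing
    option, that puts the trailing option strictly ahead."""
    if trailer_votes > leader_votes:
        return 0  # already ahead, nothing to add
    return leader_votes - trailer_votes + 1


# Hypothetical close poll: 1,500 respondents split 763 to 737.
leader, trailer = 763, 737
needed = synthetic_needed_to_flip(leader, trailer)
print(needed)  # 27
```

In this invented example, 27 injected responses (under 2% of the sample) are enough to reverse the topline result. That is why close races are the most exposed to this kind of contamination.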

Algorithmic amplification during elections further complicates matters. Platform X's algorithms showed a structural engagement shift around mid-July 2024 11. This coincided with Elon Musk's endorsement of Donald Trump 11. Elon Musk's account saw a "marked differential uplift" in view, retweet, and favorite counts after this point 11. Republican-leaning accounts also showed a significant post-change increase in view counts relative to Democrat-leaning accounts 11. Retweet and favorite counts for Republican accounts did not show the same group-specific boost 12.

A six-week audit on X found the algorithm skews exposure towards a few high-popularity users 13. Right-leaning accounts experienced the highest level of exposure inequality 13. Both left- and right-leaning accounts had amplified exposure to their own political views 13. They had reduced exposure to opposing viewpoints 13. New, neutral accounts showed a default right-leaning bias in their timelines 13. About 50% of tweets in X's user timelines are personalized recommendations 13. This is an increase from 37% in 2023 13.

Not every election shows a measurable effect. In the Czech Republic, AI improved campaign efficiency but did not alter voter sentiment in the ANO party's win 15.

Eroding User Confidence

AI political bias directly affects user trust in AI systems. It impacts the information these systems provide 9. When AI produces inaccurate or biased outputs, public trust erodes 9. This affects trust in the technology and the institutions using it 9.

Audiences struggle to distinguish between AI- and human-authored news 16. This signals a normalization of AI journalism 16. Greater transparency is needed to preserve trust 16.

User perception studies confirm popular LLMs are widely seen as left-leaning 1. However, when an LLM is prompted for neutrality, it generates responses users find less biased 1. These responses also have higher quality 1. This makes users more likely to trust and use that LLM 1.

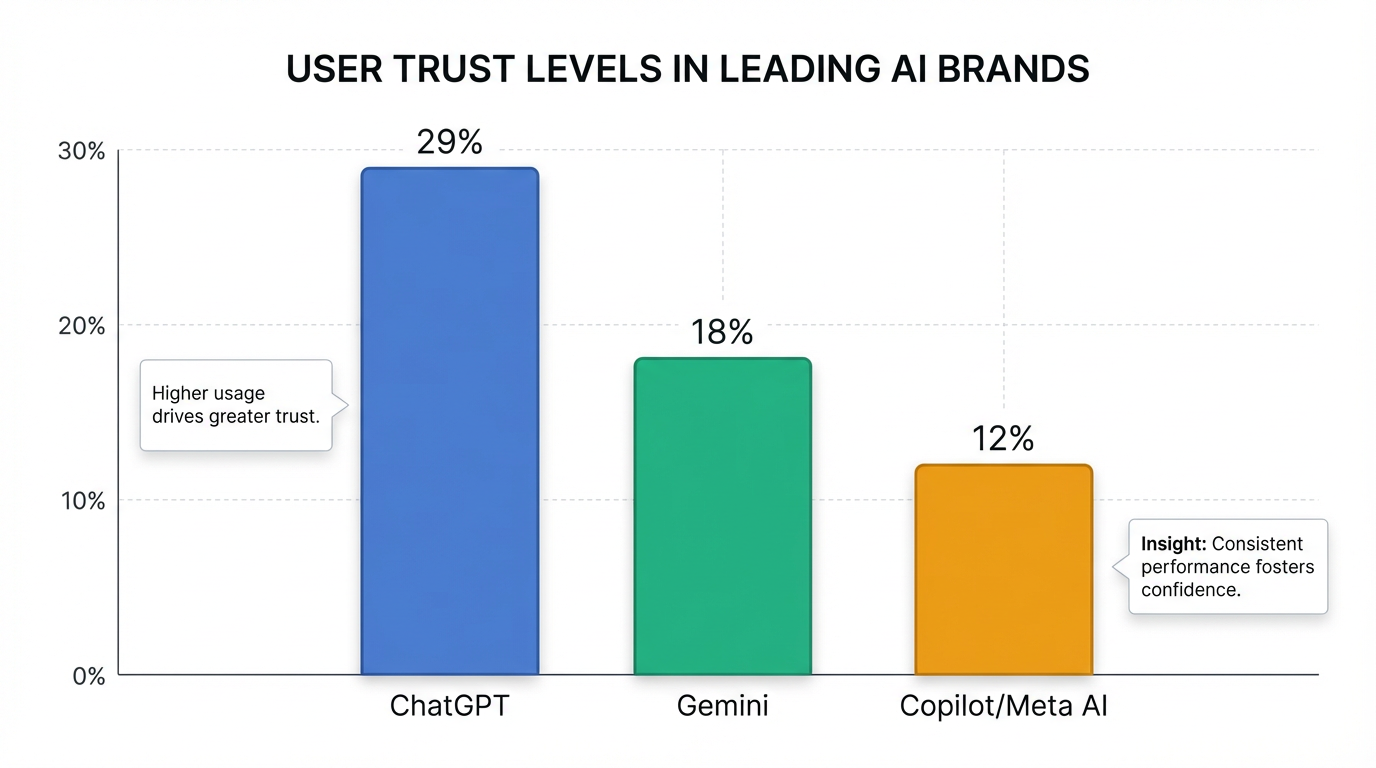

Public awareness and use of generative AI are increasing significantly 17. Weekly usage nearly doubled from 18% to 34% in one year 17. Trust is concentrated in major brands 17. For example, 29% of users trust ChatGPT, 18% trust Gemini, and 12% trust Copilot/Meta AI 17. Among users encountering AI-generated search answers, 50% trust them 17.

This infographic displays the varying levels of trust users place in prominent AI brands like ChatGPT, Gemini, and Copilot/Meta AI, highlighting the brand-dependent nature of user confidence in AI-generated information.

This trust is conditional, especially in high-stakes areas like health or politics 17. Many users verify AI-generated information with traditional sources 17. Only 33% of users consistently click through source links in AI-generated overviews 17. 28% rarely or never do so 17.

Mechanisms Behind the Bias

Several factors drive these quantified impacts. AI bias often stems from unevenly distributed political opinions in training data 9. Certain viewpoints dominate, or historical biases are embedded 19. Algorithmic designs also introduce bias 19. Designs for user engagement may prioritize sensationalist political content 19. This happens regardless of factual accuracy 19. Such designs contribute to "filter bubbles" and "echo chambers" 19.

Deliberate fine-tuning with skewed data can align an LLM politically 20. This can push it toward left-leaning, moderate, or right-leaning preferences 20. Exposure to algorithmic content can lead users to follow specific political accounts 6. They may continue following these even if the algorithm is switched off 6. This creates persistent political effects 6.

Demographics also play a role. Bias in ChatGPT's responses was larger for males and non-graduates 7. This was particularly true for environmental, civil rights, and inequality issues 7. Younger users (ages 18-24) are more likely than older users (27% of those aged 55 and over) to use AI for news comprehension 17.


Methodological Challenges in Measuring Bias

Diverse quantitative methods are used to study AI bias. Studies use comparative analysis of LLM responses to political quizzes 2. They also emulate over 50,000 LLM voting personas 21. Parliamentary voting record benchmarks are also employed 4. Field experiments on social media are common 6. Large-scale surveys for perception studies involve thousands of respondents 8. Sock-puppet audits involve millions of tweets from many accounts 13.
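As a rough illustration of the quiz-based comparison described above, the sketch below turns Likert-style answers into a single signed lean score per model. The item IDs, the coding of each item's direction, and the model answers are all invented for the example. Real studies use validated instruments and many more items.

```python
from statistics import mean

# Map Likert answers to numbers: negative = disagree, positive = agree.
LIKERT = {
    "strongly disagree": -2,
    "disagree": -1,
    "neutral": 0,
    "agree": 1,
    "strongly agree": 2,
}


def lean_score(answers: dict[str, str], direction: dict[str, int]) -> float:
    """Mean signed score in [-2, 2].

    By the convention assumed here, negative means left-leaning and
    positive means right-leaning. direction[item] is +1 when agreeing
    with the statement is coded as right-leaning, -1 when left-leaning.
    """
    return mean(LIKERT[a.lower()] * direction[item] for item, a in answers.items())


# Hypothetical three-item quiz and two hypothetical models.
direction = {"q1": -1, "q2": 1, "q3": -1}
model_answers = {
    "model_a": {"q1": "agree", "q2": "disagree", "q3": "strongly agree"},
    "model_b": {"q1": "neutral", "q2": "agree", "q3": "disagree"},
}

for name, answers in model_answers.items():
    print(name, round(lean_score(answers, direction), 2))
```

A score like this depends on how each item is coded and worded. That is one reason the text stresses paraphrase robustness and larger benchmarks.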

Newer approaches combine linguistic comparisons and policy recommendation analysis 20. Sentiment analysis and political tests provide a more comprehensive assessment 20. These aim to address limitations of past research 20. Traditional tests were often limited in scale and vulnerable to paraphrasing 4.

Challenges remain in this field. Some AI systems show inconsistencies in responses over time 22. This also applies across different model versions 22. Relying on user perceptions of neutrality has weaknesses 1. Perceived neutrality might not always align with factual correctness 1. Self-reported survey data can introduce bias 8. Social media audit data collection faces limitations from platform API changes 12. The proprietary nature of algorithms makes discovering bias difficult 23. Reproducing bias is also challenging 23.

Continuous, rigorous quantitative research is necessary. It helps understand and mitigate AI's political biases 23. This safeguards user trust and democratic integrity 23. The growing integration of AI into public discourse demands this attention 23.

Building Neutral LLMs Holistically

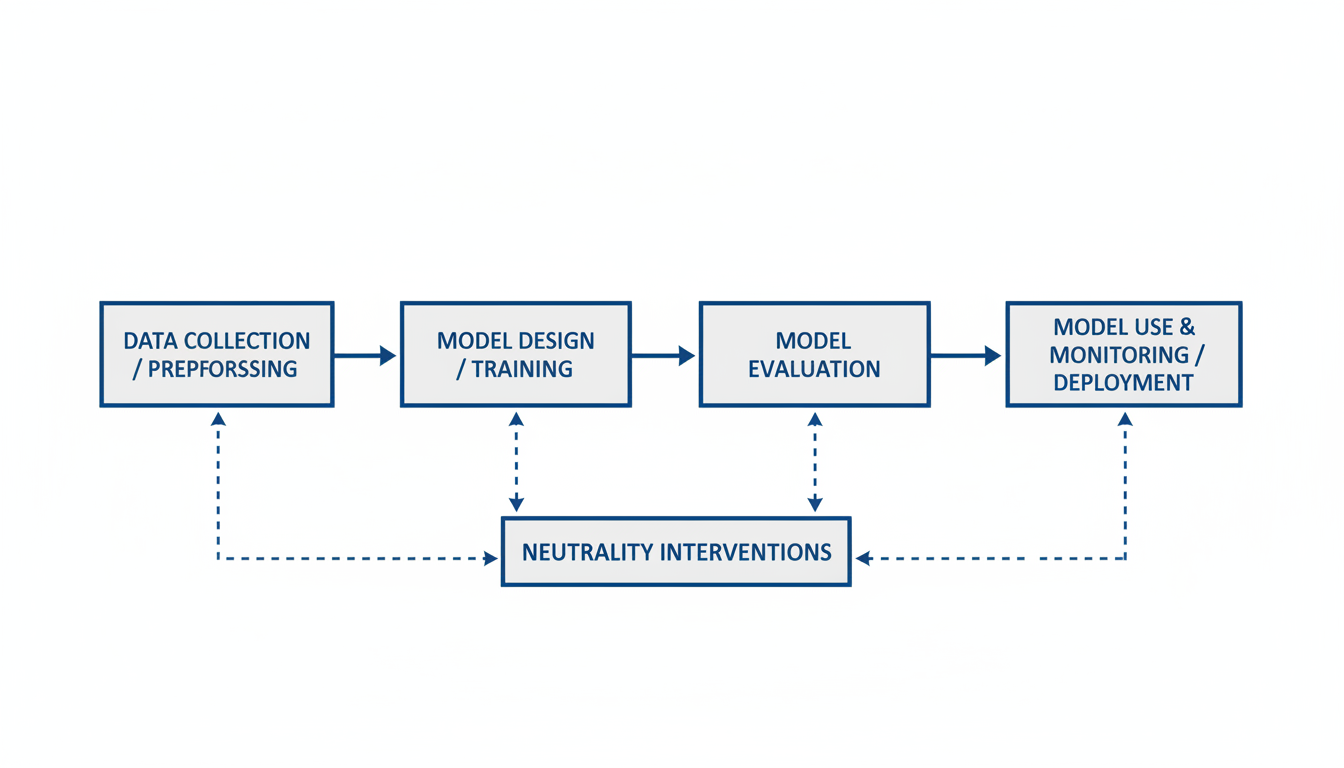

Developing fair and unbiased large language models requires a systematic and comprehensive approach throughout their entire lifecycle. This includes careful data practices, refined model training, and continuous oversight to ensure ethical AI deployment.

A holistic strategy for neutral LLM development spans the entire model lifecycle. It begins with how data is gathered. This process extends through model design and training, then continues into evaluation. Finally, it covers ongoing monitoring once the model is deployed.

LLM Development Lifecycle Interventions

Interventions are crucial at each stage of an LLM's development. Applying careful techniques throughout helps ensure neutrality.

Data Collection and Preprocessing

The journey to neutrality starts with data. Audit training data for demographic imbalances. Use counterfactual examples to balance representations. Expert-led data curation is also vital. This involves specialists meticulously refining training data. They focus on quality to address subtle, context-specific biases 24. Additionally, scrub protected attributes from inputs. Remove identifiers like names or addresses if they are not relevant to the task 25.
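The auditing and counterfactual steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline. The swap list `SWAPS` and the three-sentence corpus are hypothetical, and a real project would need a much larger, carefully curated term list and would have to handle ambiguous words.

```python
import re
from collections import Counter

# Hypothetical swap pairs; deliberately limited to unambiguous terms.
SWAPS = {
    "he": "she", "she": "he",
    "man": "woman", "woman": "man",
    "men": "women", "women": "men",
}
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, SWAPS)) + r")\b", re.IGNORECASE
)


def counterfactual(text: str) -> str:
    """Return a copy of text with each listed term swapped for its pair."""
    def swap(match: re.Match) -> str:
        word = match.group(0)
        replacement = SWAPS[word.lower()]
        return replacement.capitalize() if word[0].isupper() else replacement
    return _PATTERN.sub(swap, text)


def term_counts(corpus: list[str]) -> Counter:
    """Audit step: count how often each listed term appears in the corpus."""
    counts: Counter = Counter()
    for text in corpus:
        counts.update(word.lower() for word in _PATTERN.findall(text))
    return counts


corpus = ["He is a senator.", "The man voted early.", "She runs the committee."]
augmented = corpus + [counterfactual(text) for text in corpus]

print(term_counts(corpus))
print(term_counts(augmented))
```

After augmentation, each term and its counterpart appear equally often, which is the balancing effect counterfactual examples aim for.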

Model Design and Training

Next, focus on the model's core design. Apply adversarial debiasing during training. This technique penalizes the model for revealing sensitive attributes 26. Integrate Knowledge Graph-Augmented Training (KGAT). KGAT adds structured domain knowledge to counter biases and improve context 27. Use fairness-aware training to prioritize ethical metrics. Incorporate prompt instructions for unbiased behavior within the system prompt itself.
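One common way to write down adversarial debiasing is as a min-max objective. This is a generic sketch and not necessarily the formulation used in the cited source. The symbols are assumptions introduced here: $\theta$ are the main model's parameters, $\phi$ are the parameters of an adversary that tries to predict the sensitive attribute from the model's internal representation, and $\lambda \ge 0$ sets how strongly leakage is penalized.

$$
\min_{\theta} \; \max_{\phi} \;\; \mathcal{L}_{\text{task}}(\theta) \;-\; \lambda \, \mathcal{L}_{\text{adv}}(\theta, \phi)
$$

The adversary picks $\phi$ to make its own loss $\mathcal{L}_{\text{adv}}$ small, meaning it predicts the sensitive attribute well. The main model picks $\theta$ to keep the task loss low while making the adversary's loss large. With $\lambda = 0$ this reduces to ordinary training. Larger values trade task accuracy for less leakage of the sensitive attribute.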

Model Evaluation

Rigorous evaluation is key before deployment. Conduct pre-deployment testing using standardized benchmarks. Examples include StereoSet, BOLD, and CrowS-Pairs 26. Custom LLM-based correspondence experiments also detect subtle disparities. Analyze attention patterns to uncover demographic cues. Evaluate bias at different levels, including embeddings, probabilities, and generated text.
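Paired-sentence benchmarks such as CrowS-Pairs compare how a model scores two minimally different sentences. The sketch below shows the general shape of that metric under simplifying assumptions. The `score` function is a stand-in for a real model's sentence likelihood, and the sentence IDs and scores are placeholders, not benchmark data.

```python
from typing import Callable, Iterable


def stereotype_preference_rate(
    pairs: Iterable[tuple[str, str]],
    score: Callable[[str], float],
) -> float:
    """Fraction of (stereotypical, anti-stereotypical) pairs in which the
    model scores the stereotypical sentence higher. A value near 0.5
    suggests no systematic preference on this particular test set."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one sentence pair")
    preferred = sum(1 for stereo, anti in pairs if score(stereo) > score(anti))
    return preferred / len(pairs)


# Placeholder scores standing in for a model's log-likelihoods (hypothetical).
toy_scores = {
    "stereo_1": -10.0, "anti_1": -12.0,
    "stereo_2": -8.5, "anti_2": -9.0,
    "stereo_3": -11.0, "anti_3": -10.5,
    "stereo_4": -7.0, "anti_4": -9.5,
}
toy_pairs = [(f"stereo_{i}", f"anti_{i}") for i in range(1, 5)]

rate = stereotype_preference_rate(toy_pairs, toy_scores.__getitem__)
print(rate)  # 0.75
```

Passing the scorer as a callable keeps the metric independent of any particular model or library, so the same function can be reused across model versions.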

Model Use and Monitoring

Even after deployment, vigilance is necessary. Continuously monitor model outputs for fairness violations. Observability platforms like Fiddler AI and Sight AI can assist. Apply post-hoc output calibration or threshold optimization. Implement prompt debiasing filters as safeguards. Integrate human-in-the-loop mechanisms for oversight. Establish robust accountability structures for responsible AI 28.
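A simple form of output monitoring is to track a rate, such as how often the model refuses, separately for different groups of prompts and to alert when the gap grows. The sketch below assumes a hypothetical log of `(prompt_group, refused)` records and an arbitrary alert threshold. It does not reflect the API of any observability product named above.

```python
from collections import defaultdict


def rate_by_group(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of records flagged True within each group."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for group, flagged in records:
        totals[group] += 1
        hits[group] += int(flagged)
    return {group: hits[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Largest difference in rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical monitoring log: (prompt group, did the model refuse?)
log = [
    ("left_coded", False), ("left_coded", False),
    ("left_coded", True), ("left_coded", False),
    ("right_coded", True), ("right_coded", True),
    ("right_coded", False), ("right_coded", False),
]

ALERT_THRESHOLD = 0.1  # assumed tolerance; set per application
rates = rate_by_group(log)
gap = parity_gap(rates)
needs_review = gap > ALERT_THRESHOLD
print(rates, gap, needs_review)
```

A flagged gap is a prompt for human review, not proof of bias. The groups may differ in ways that legitimately explain different refusal rates.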

Navigating Trade-offs and Limitations

Achieving AI neutrality involves complex challenges. Developers must understand inherent trade-offs.

- "No Free Lunch" Principle: Reducing bias in one area can create new biases elsewhere. Multi-dimensional auditing helps understand these effects 29.

- Computational Cost: Some detection tools, like GPTBIAS, demand significant resources 30.

- Complexity of LLMs: Large, "black-box" models make understanding decisions challenging.

- Data Quality: Incomplete or biased external knowledge graphs can introduce new biases 27.

- Scale of LLMs: Larger models do not automatically solve bias; they can sometimes worsen it 31.

- Context Specificity: Bias in LLMs must be understood within their application context 32. Generic solutions often fall short.

Building Trust and Ensuring Compliance

Responsible AI development needs strong governance frameworks 33. These frameworks include clear policies and risk assessments 33. Human oversight and transparent documentation are essential 33.

Ethical considerations are quickly becoming compliance requirements. Regulations like the EU AI Act and Colorado SB205 now demand adherence. Continuous evaluation and feedback loops are vital. Community-driven techniques for bias reduction also play a key role 33. Ultimately, the goal is to foster trust between AI developers and end-users. This ensures AI systems act responsibly and equitably.

Empowering developers is critical for building ethical AI applications. Solo founders and small teams can also create impactful, neutral AI tools. Platforms like Atoms.dev (https://atoms.dev) streamline this process. They allow users to describe an idea and receive a working app with essential features. For example, developers can use the AI app builder to quickly prototype and launch their solutions. Similarly, if building conversational agents, the AI chatbot builder can accelerate development. These tools make advanced development accessible.

Building Trust in Tomorrow's AI

Achieving neutral and trustworthy AI models, particularly LLMs, demands a holistic strategy with robust governance and continuous oversight.

The Role of Regulation and Policy

Strong governance frameworks are critical for responsible AI development 33. These frameworks include clear policies and risk assessments. Human oversight and transparent documentation are also vital 33. Ethical considerations are now becoming legal compliance requirements. Regulations like the EU AI Act drive the need for adherence. Colorado SB205 also pushes for similar standards. These regulations help ensure AI systems adhere to societal values.

Integrating Ethical AI Principles

Ethical principles guide the development of neutral LLMs. Fairness and non-discrimination ensure equitable outcomes for all users. Transparency requires understandable decisions and clear documentation of design processes. Accountability establishes clear responsibility for AI system outcomes. Privacy and data protection mean respecting user data through secure storage and controlled access. Robustness ensures systems are resilient, secure, and reliable. Human agency means AI should empower users and include human oversight. "Ethics by Design" embeds these values from conception to deployment. Interdisciplinary teams involving ethicists and legal experts help achieve this 34.

Continuous Monitoring and Adaptation

Developing neutral LLMs requires ongoing vigilance. Regular assessment of AI models for fairness and accuracy is necessary. Robust audit frameworks ensure continuous oversight. Pre-deployment testing using benchmarks helps identify issues early 25. Human-in-the-loop mechanisms provide essential oversight in high-risk applications. Continuous evaluation and feedback loops are key to evolving bias reduction strategies.

- Observability Platforms: Tools like Fiddler AI and Sight AI offer real-time bias detection. They monitor outputs for fairness violations.

- Prompt Debiasing Filters: These act as safeguards at both input and output stages 31. They prevent the generation of biased content.

- Self-Correction: LLMs can assess their own outputs for bias and adjust them autonomously 35. This promotes internal bias correction.
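The output filter and self-correction ideas in the list above can be combined into one guard loop. The sketch below is illustrative only. `generate` and `slant_score` are hypothetical callables standing in for a model call and a bias classifier, and the threshold, retry count, and instruction text are arbitrary assumptions.

```python
from typing import Callable

# Assumed wording; a real deployment would tune and test this instruction.
NEUTRALITY_INSTRUCTION = (
    "Present the main viewpoints even-handedly and avoid taking a side."
)
WITHHELD = "[withheld for human review]"


def guarded_generate(
    prompt: str,
    generate: Callable[[str], str],
    slant_score: Callable[[str], float],
    max_abs_slant: float = 0.3,
    max_retries: int = 2,
) -> str:
    """Generate a draft, check it, and ask for a revision while it is too slanted.

    Falls back to a withheld marker if no acceptable draft is produced."""
    draft = generate(prompt)
    for _ in range(max_retries):
        if abs(slant_score(draft)) <= max_abs_slant:
            return draft
        draft = generate(f"{NEUTRALITY_INSTRUCTION}\n\nRevise this answer:\n{draft}")
    return draft if abs(slant_score(draft)) <= max_abs_slant else WITHHELD


# Toy stand-ins so the sketch runs without any model (hypothetical behaviour).
def toy_generate(prompt: str) -> str:
    if prompt.startswith(NEUTRALITY_INSTRUCTION):
        return "balanced answer"
    return "slanted answer"


def toy_slant_score(text: str) -> float:
    return 0.8 if "slanted" in text else 0.0


print(guarded_generate("Explain the policy debate.", toy_generate, toy_slant_score))
# balanced answer
```

Withholding the response when revision fails routes the hard cases to the human-in-the-loop oversight described earlier, so the system never silently returns a draft that failed the check.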

Building applications that incorporate these principles from the ground up can simplify this process. Platforms like Atoms allow solo founders to quickly build AI apps with authentication, databases, and payments, potentially integrating responsible AI features from the start. For example, when building an AI app or a chatbot, integrating ethical considerations into the initial design phase is paramount.

Collaborative Efforts for Trustworthy AI Systems

Fostering trust between AI developers and end-users is crucial. This requires community-driven exchanges of techniques. Public engagement involves stakeholders impacted by AI systems 34. Incorporating their perspectives leads to more inclusive and equitable AI. Clear governance policies align with global AI regulations 33. Forming an AI ethics committee can provide additional guidance 33. Secure data pipelines are also necessary for data protection 33. Transparent documentation informs users about model capabilities and limitations. Labeling AI-generated content can prevent misinformation 33.

Future Predictions for Neutral LLM Development

The future will likely see even stricter regulatory landscapes. Proactive integration of ethical considerations will become standard practice. The demand for verifiable AI neutrality will grow significantly. AI systems will need to demonstrate their fairness and lack of bias clearly. This trend emphasizes the ongoing need for robust detection and mitigation techniques. Collaboration across industries and with governments will accelerate the development of truly neutral AI. This will build stronger public confidence in AI technologies.