AI Privacy & Ethics: A Founder's Guide to Building Trust and Compliance

Introduction

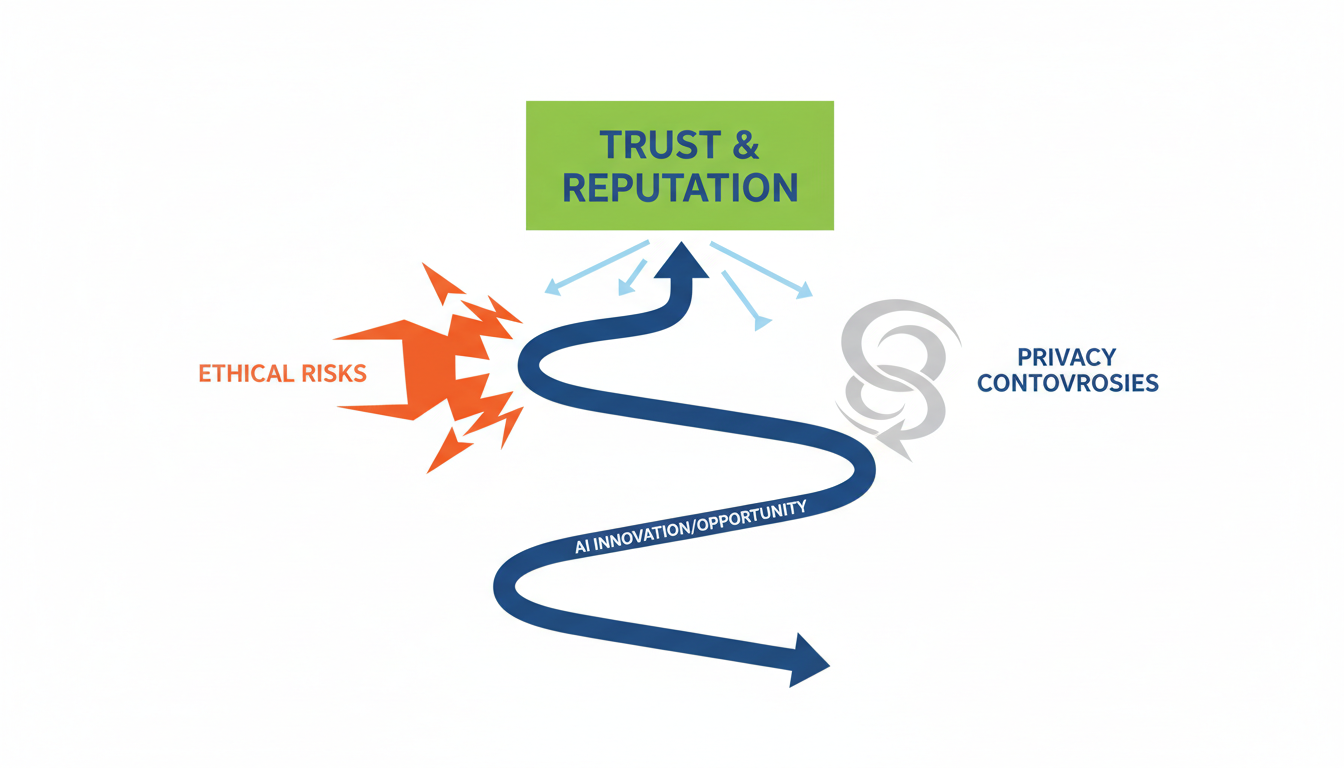

The past few years have been a whirlwind of innovation, with AI adoption surging across every sector imaginable. Yet this rapid advancement has brought an unwelcome companion: a significant rise in AI privacy controversies and ethical missteps, which pose substantial risks, particularly for early-stage companies. Consider the recent incident in which a car dealer's AI chatbot mistakenly offered a brand-new vehicle for a mere $1, highlighting the real-world financial and reputational implications of AI malfunctions for businesses operating with limited resources 1. Such errors underscore that while AI offers immense opportunities, its ethical and privacy failures can be devastating for companies.

This era marks a profound shift: privacy and ethical considerations are no longer optional "afterthoughts" but fundamental requirements for AI development and deployment 2. Failing to integrate privacy by design and responsible AI practices from the outset can lead to severe consequences, from crippling legal liabilities and fines to irreparable reputational damage. These consequences are especially hard on new brands striving to establish trust and gain market traction. The environment is complex, with rapidly evolving global regulations, increasing consumer distrust in AI systems, and a growing "ethics gap" within organizations that often lack specialized compliance expertise.

For AI startup founders, developers, and product managers, navigating this intricate landscape is paramount. This article aims to serve as a practical guide, illustrating how proactive engagement with AI privacy and ethics can build foundational trust, ensure compliance, and foster sustainable innovation in a competitive and heavily scrutinized market. Understanding these challenges and implementing robust safeguards is not just about avoiding pitfalls; it's about shaping the future of responsible AI.

Core Strategy: Building Trust Through Design

For early-stage AI startups, integrating trust and ethics isn't just about compliance; it's a foundational business strategy. In an era where data privacy is paramount, founders must embed these principles into their core architecture from day one rather than treating them as an afterthought. This proactive stance, known as Privacy by Design (PbD), ensures that AI systems are secure by default and limit data usage to the minimum necessary for their stated purpose, which builds an immediate competitive advantage.

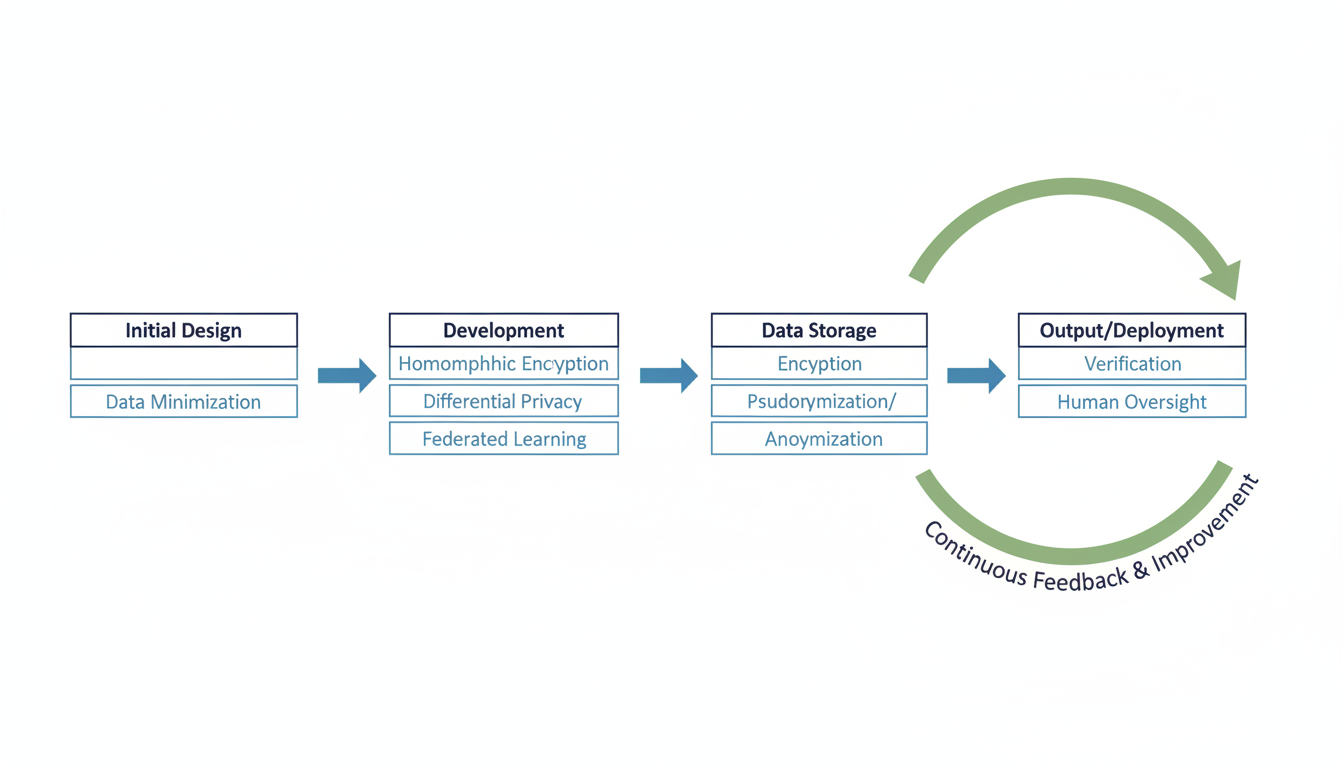

Implementing PbD begins with rigorous data minimization and purpose limitation. Startups must clearly define the data that is strictly essential to their AI model's functionality and avoid collecting, or discard, anything else 3. Strict data retention policies are also crucial: data should be deleted promptly once its purpose has been served, which prevents excessive accumulation and mitigates privacy risks. Every piece of data collected should be demonstrably necessary in context and proportionate to the problem the AI is solving 4.
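As a minimal sketch of what data minimization and a retention policy can look like in code, the example below keeps only an allowlist of fields and drops records older than a fixed window. The field names, the 30-day window, and the function names are all hypothetical choices made for illustration; a real policy would come from your own purpose definitions and legal advice.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical allowlist: the only fields this example model is assumed to need.
REQUIRED_FIELDS = {"user_id", "query_text", "created_at"}

# Hypothetical retention window; the right value depends on the stated purpose.
RETENTION = timedelta(days=30)


def minimize(record: dict) -> dict:
    """Keep only allowlisted fields so non-essential data is never stored."""
    return {key: value for key, value in record.items() if key in REQUIRED_FIELDS}


def purge_expired(records: list[dict], now: datetime) -> list[dict]:
    """Return only the records that are still inside the retention window."""
    return [r for r in records if now - r["created_at"] <= RETENTION]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    raw = {
        "user_id": "u-123",
        "query_text": "reset my password",
        "created_at": now - timedelta(days=2),
        "email": "person@example.com",  # not needed by the model, so dropped
    }
    stored = [minimize(raw)]
    print(purge_expired(stored, now))
```

Running `minimize` at the point of ingestion, rather than as a later cleanup job, means fields outside the allowlist never reach storage in the first place.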

Beyond minimizing data, startups must build in security through Privacy-Enhancing Technologies (PETs). These are not optional extras but core components of a secure AI system, protecting data confidentiality throughout its lifecycle. Homomorphic Encryption (HE), for instance, allows computation directly on encrypted data, keeping sensitive information confidential even during processing, for example in collaborative AI training. Differential Privacy (DP) adds calibrated noise to data or query results, which provides a mathematically rigorous way to quantify privacy loss and limits what can be inferred about any individual from aggregate statistics. For decentralized training, Federated Learning (FL) enables multiple parties to train a model without sharing raw data, exchanging only model parameters that are then aggregated. Additionally, Secure Multi-Party Computation (MPC) distributes processing across servers, safeguarding input privacy 5, while standard encryption protects all data at rest and in transit 3.
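To make the differential privacy idea concrete, here is a small sketch of the Laplace mechanism applied to a counting query, using only the Python standard library. The function name `dp_count` and its parameters are assumptions made for this example; production systems would normally rely on a vetted DP library rather than hand-rolled noise.

```python
import random


def dp_count(values, predicate, epsilon, rng=None):
    """Return a differentially private count using the Laplace mechanism.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so the Laplace noise scale is 1 / epsilon. Smaller epsilon means more noise
    and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    scale = 1.0 / epsilon
    # The difference of two i.i.d. exponential samples is Laplace-distributed.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


if __name__ == "__main__":
    ages = [23, 35, 41, 52, 67, 29, 38]
    # Noisy answer to "how many people are 40 or older?"
    print(dp_count(ages, lambda age: age >= 40, epsilon=0.5))
```

Each released answer spends part of a privacy budget, so repeated queries over the same data need their epsilon values tracked and capped.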

Anonymization and pseudonymization are another powerful pair of PbD techniques. Transforming identifiable data to obscure individual identities significantly reduces privacy risks before data is used for training or processing. Pseudonymization replaces direct identifiers with artificial ones, maintaining linkage for internal use without direct identification. Anonymization goes a step further, completely removing or altering identifiable information to prevent indirect identification, with techniques like face-blurring in image processing serving as practical examples. For testing, startups can leverage synthetic data generation, creating artificial datasets that mimic real data statistically but contain no actual personal information.
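One common way to pseudonymize a direct identifier is a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library; the function names, the example key, and the choice of which fields count as identifiers are assumptions for illustration only.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a stable keyed hash (HMAC-SHA256).

    The same input and key always give the same token, so records can still be
    linked internally, but the token cannot be recomputed without the key.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_record(record: dict, id_fields: set, secret_key: bytes) -> dict:
    """Return a copy of the record with the chosen identifier fields tokenized."""
    return {
        key: pseudonymize(str(value), secret_key) if key in id_fields else value
        for key, value in record.items()
    }


if __name__ == "__main__":
    key = b"example-key-load-from-a-secrets-manager"  # hypothetical key
    row = {"email": "person@example.com", "plan": "pro"}
    print(pseudonymize_record(row, {"email"}, key))
```

Because the mapping is reversible for anyone who holds the key and a list of candidate identifiers, pseudonymized data still generally counts as personal data; the key should be stored separately from the dataset.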

Beyond privacy, establishing ethical AI frameworks is crucial, especially for early-stage companies, which are often strapped for resources and driven by rapid growth 6. While more comprehensive frameworks exist, Digital Catapult's AI Ethics Framework and the Four Critical Guardrails for Ethical AI in Agile offer adaptable entry points. The latter, in particular, provides a lightweight, actionable framework designed for integration into existing Agile workflows, directly addressing common developer concerns like data privacy and output reliability.

These Four Critical Guardrails provide practical boundaries for AI use by development teams:

- Data Privacy & Compliance: Implement data classification (e.g., Public, Internal, Confidential, Restricted) and clear protocols for sanitizing inputs before sharing with AI tools to prevent accidental leakage of sensitive information.

- Human Value Preservation: Clearly delineate AI-optimal and human-optimal activities, positioning AI as an enhancer rather than a replacement. This preserves the unique human elements of agile roles.

- Output Validation: Establish verification protocols for different types of AI outputs and practices for critically assessing AI-generated content.

- Transparent Attribution: Document AI involvement in generating content or decisions to ensure intellectual property protection and stakeholder trust.

These guardrails are designed to be integrated naturally into agile practices, with Scrum Masters often playing a key role in establishing these ethical compasses without creating bureaucratic overhead.
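The Data Privacy & Compliance guardrail can be sketched as a small gate that runs before any text is sent to an AI tool. The four classification levels come from the guardrail itself; the rule that Restricted text is blocked and Confidential text is redacted, the regular expressions, and the function names are assumptions made for this example, and the patterns are deliberately simple rather than exhaustive.

```python
import re
from enum import IntEnum


class DataClass(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Illustrative patterns only; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def sanitize(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def prepare_prompt(text: str, level: DataClass) -> str:
    """Apply the assumed policy: block Restricted, redact Confidential."""
    if level >= DataClass.RESTRICTED:
        raise PermissionError("Restricted data must not be sent to AI tools")
    if level >= DataClass.CONFIDENTIAL:
        return sanitize(text)
    return text


if __name__ == "__main__":
    note = "Contact jane.doe@example.com or +1 (555) 123-4567 today"
    print(prepare_prompt(note, DataClass.CONFIDENTIAL))
```

Keeping the gate in one function gives a team a single place to review and tighten the policy as classification rules evolve.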

Proactive adoption of these privacy and ethical AI practices delivers substantial business value. It builds customer trust and loyalty, as consumers increasingly scrutinize how their data is used. It also ensures compliance and regulatory readiness, protecting startups from the hefty fines and reputational damage that can follow from evolving regulations like GDPR or the EU AI Act. Such practices enhance data quality and AI performance by focusing on relevant, consented data 7, and they mitigate risks from cyberthreats, data breaches, and algorithmic biases that can lead to costly failures. Ultimately, championing ethical AI provides a distinct competitive advantage, attracting discerning investors, partners, and top talent in a crowded market 6. While challenges such as the computational overhead of advanced PETs exist, beginning with simplified, agile-integrated guardrails allows startups to build robust ethical AI governance incrementally.

Real Example: A Startup's Ethical Journey

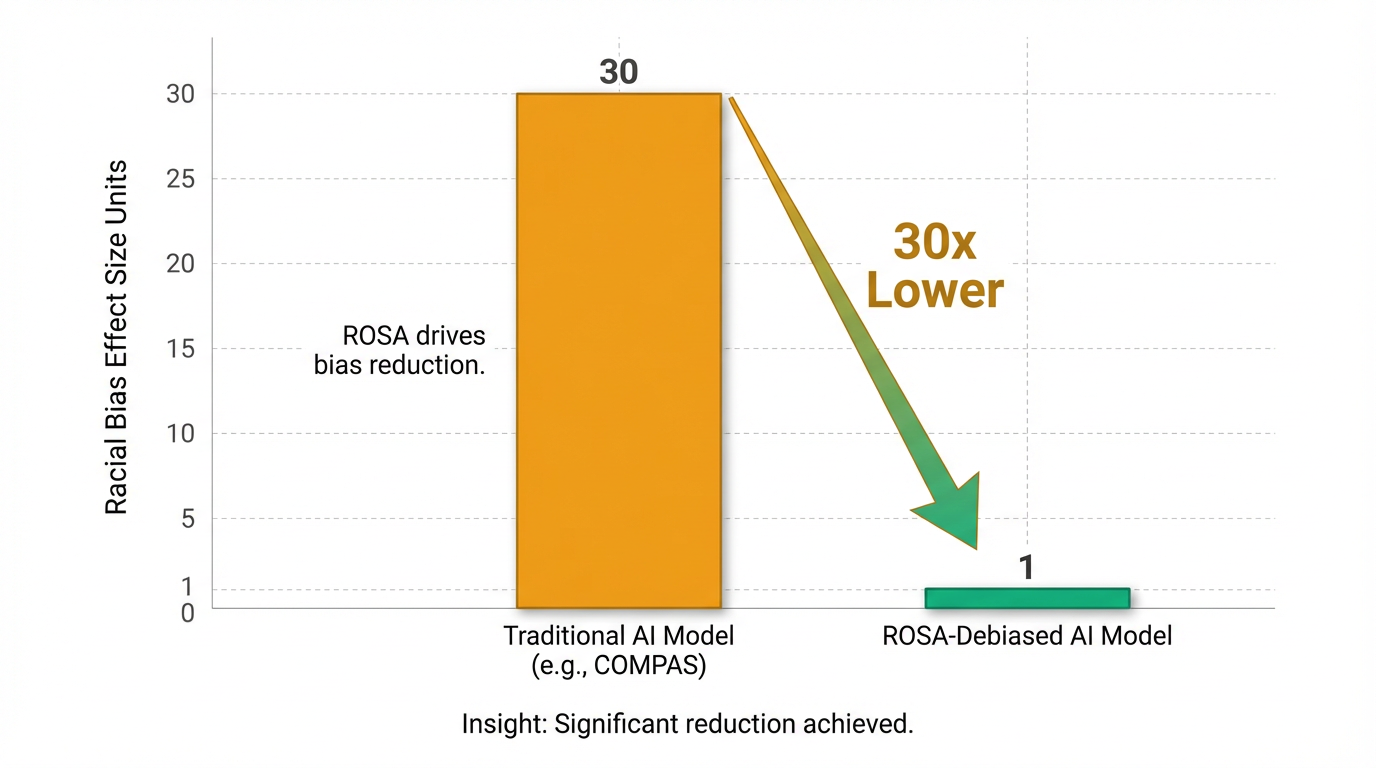

While the principles of ethical AI are clear, seeing them put into practice by a lean startup truly brings the concept to life. Enter illumr, a company directly tackling one of the most insidious challenges in artificial intelligence: algorithmic bias 8. Their product, ROSA, was specifically engineered to identify and neutralize these embedded biases within datasets, which commonly lead to unfair and discriminatory outcomes from AI systems. From critical decisions in credit risk and criminal justice to hiring processes and insurance pricing, historical human biases frequently find their way into AI models, perpetuating societal inequalities based on race, age, and gender 8. A particularly stark example is the racial bias uncovered in criminal risk assessment tools like COMPAS, which often overstate reoffending probabilities for specific demographic groups 8. This problem not only erodes trust but also makes it incredibly difficult for organizations to comply with evolving regulations such as GDPR's anti-bias provisions and the EU's mandates for gender-neutral insurance pricing 8.

illumr's approach with ROSA is unique because it intervenes at the earliest possible stage: pre-training bias removal 8. Instead of attempting to correct biases after an AI algorithm has already been trained, ROSA processes raw datasets to identify and eliminate inherent biases before they can even influence the learning phase of an AI model 8. The tool is designed for seamless integration into an organization's existing data analytics pipeline, often requiring nothing more than a simple one-line API call 8. It operates in a fully automated manner, removing the need for manual intervention by a data scientist to alter the algorithm itself 8. Furthermore, illumr addresses the "black box" problem of AI by providing mechanisms to explain exactly how the bias removal process occurred 8. To bolster transparency and compliance, ROSA even leverages a distributed ledger, meticulously recording when and how the de-biasing process took place for auditors 8.

The tangible results of ROSA's efforts are compelling. In a case study involving a criminal risk assessment tool functionally similar to COMPAS, illumr demonstrated a profound impact 8. While traditional models showed significant racial bias, the model de-biased using ROSA achieved a remarkable 30 times lower effect size for racial bias compared to the COMPAS model 8. Crucially, this bias reduction did not come at the expense of performance; ROSA's approach actually led to more accurate predictions overall 8. This case study successfully debunked the common concern that removing bias necessitates compromising the AI system's predictive power 8.

By proactively tackling bias, ROSA empowers organizations to comply with global anti-discrimination regulations, ensuring that the data used for AI training does not unwittingly replicate human biases 8. Beyond compliance, a commitment to fairness and explainability helps companies build crucial customer trust and mitigates the significant reputational and legal risks associated with biased AI 9. illumr, through ROSA, showcases a powerful example of how startups can lead the charge in building a more equitable and trustworthy AI future.

Next Steps: Your Action Plan for Responsible AI

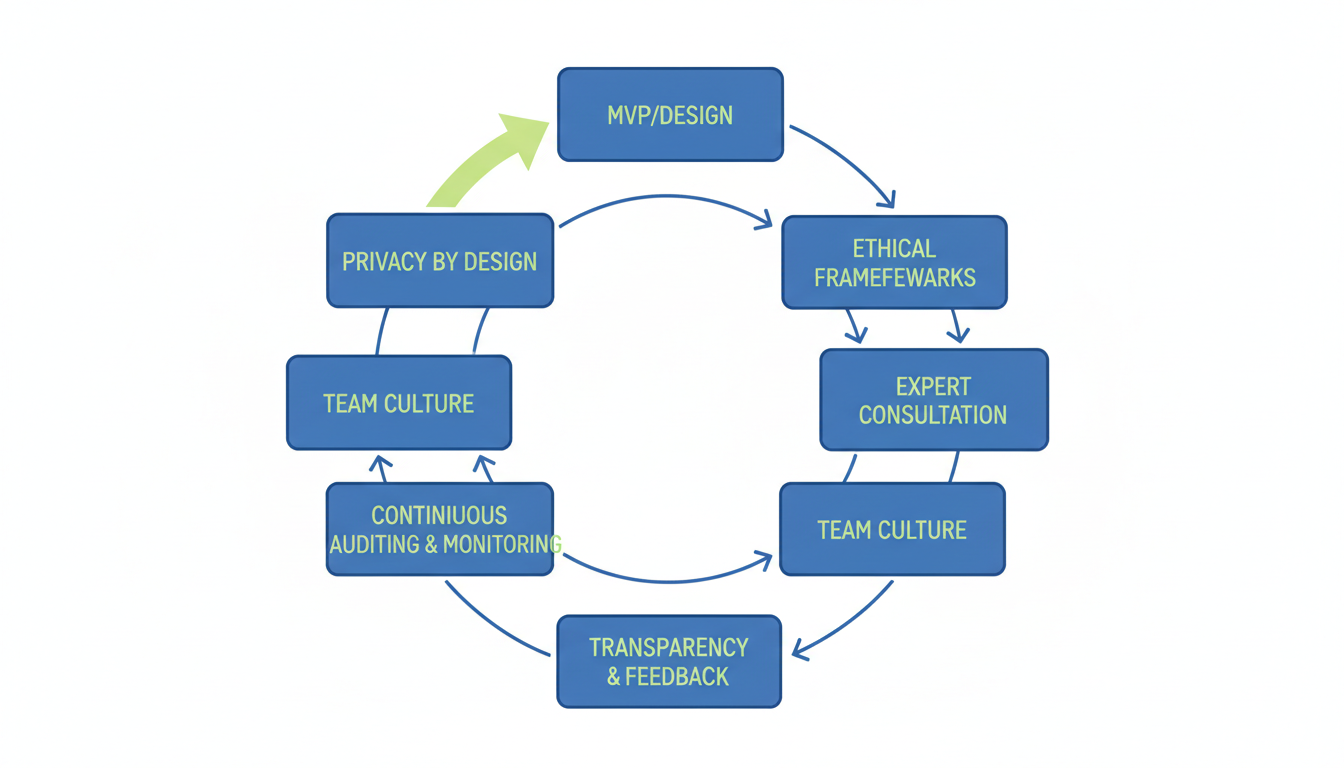

In today's fast-paced AI landscape, early-stage companies face a critical juncture. The rapid adoption of artificial intelligence brings immense opportunity, but it is accompanied by a surge in privacy controversies and ethical missteps, posing substantial risks for those who fail to act proactively 1. Navigating this complex terrain is not merely about compliance; it's about building enduring trust with users, safeguarding your brand's reputation, and ensuring the long-term viability of your innovations in a heavily scrutinized market 2. The choice to integrate privacy and ethics from day one isn't just a best practice; it's a fundamental requirement for sustainable growth.

As you embark on your AI journey, commit to integrating privacy and ethical considerations directly into your Minimum Viable Product (MVP) and every subsequent development phase. Think of "Privacy by Design" not as an add-on, but as the foundational blueprint for your AI systems. This means applying principles like data minimization, collecting only essential data, and embedding robust security measures such as encryption and privacy-enhancing technologies like homomorphic encryption or differential privacy from the outset. By baking these principles into your core architecture, you proactively limit data usage, mitigate risks, and save significant resources that would otherwise be spent on costly retrofits or addressing breaches later on.

Cultivating an ethical culture within your team is just as vital as technical safeguards. This isn't solely the responsibility of a few specialists; it's a shared commitment that permeates every decision and line of code. Encourage open dialogue about the potential societal impacts of your AI, fostering an environment where ethical dilemmas are discussed and responsible choices are the default 4. Given that a mere 6% of organizations have hired AI ethics specialists, fostering this internal awareness and accountability becomes a powerful differentiator for early-stage companies looking to lead with integrity 2.

Proactively seeking expert advice on the rapidly evolving compliance and legal frameworks for AI is also non-negotiable. The regulatory landscape is a minefield of potential liabilities, from the EU AI Act's strict requirements to the many new state privacy laws emerging in the U.S. Engaging with legal and compliance experts early helps you navigate these complexities, understand your obligations, and build systems that can adapt as rules change, avoiding the substantial fines and legal repercussions that can cripple nascent businesses. Remember, regulators are increasingly moving towards proactive spot checks, demanding demonstrable evidence of controls 10.

Finally, prioritize transparency in all your AI operations and actively solicit user feedback on privacy and ethical concerns. Users are increasingly discerning: a significant majority distrust companies that use AI and are deeply concerned about how their data is handled. Implement clear UX design patterns that explain how the AI functions and how data is used, and that give users granular control over their personal information. By making your AI systems explainable and responsive to user input, you not only build invaluable trust but also gain critical insights that can drive continuous auditing, monitoring, and improvement of your AI's ethical performance and privacy compliance.

The journey to building successful AI products is intertwined with the responsibility to build trustworthy ones. By proactively embedding privacy and ethics into your startup's DNA, you don't just mitigate risk; you unlock a powerful competitive advantage, attract discerning talent, and cultivate enduring customer loyalty. Embrace these principles, and you'll not only innovate but also lead the way in shaping an AI future that is both transformative and profoundly humane.