Ethical AI Content Creation: Building Trust and Authenticity for Indie Hackers

Introduction: The Unavoidable Dawn of AI in Content – And Its Shadow

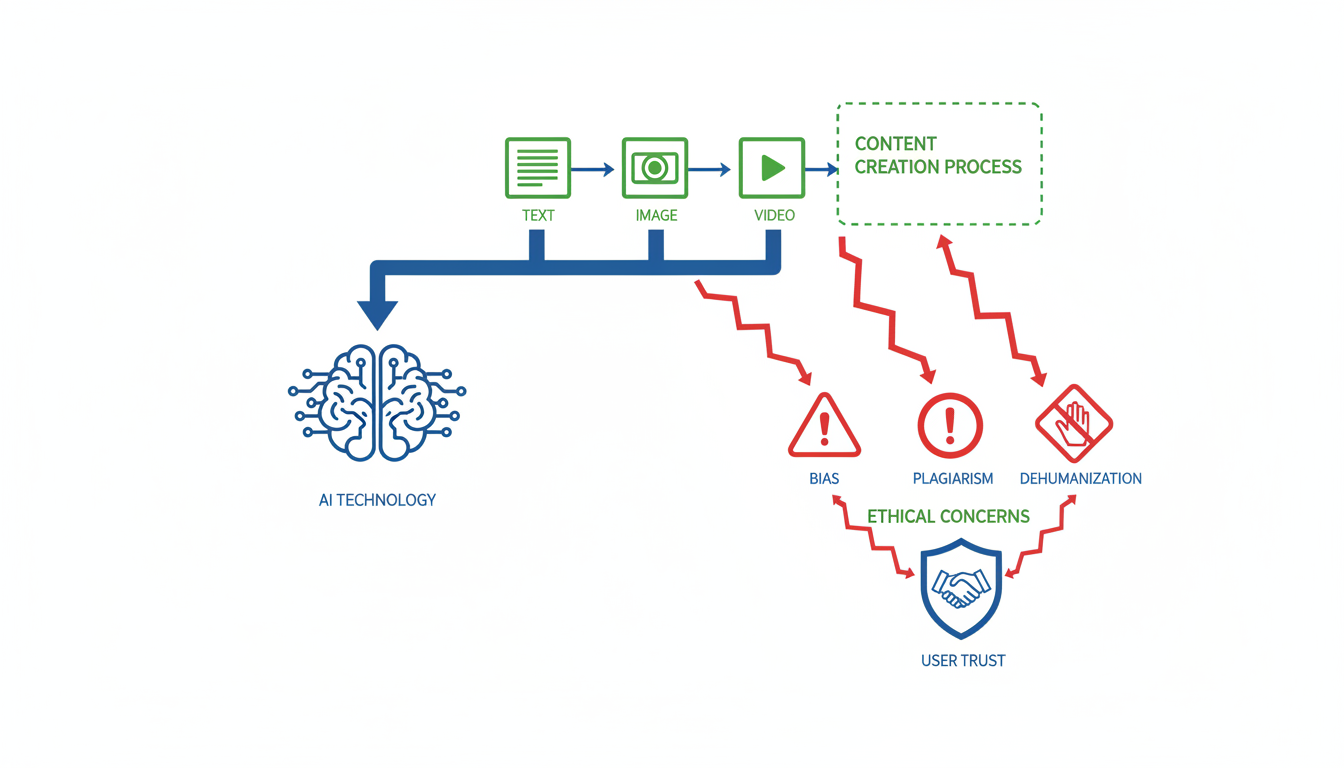

Over the past two to three years, artificial intelligence (AI) has become part of everyday content creation, changing how we generate, consume, and interact with digital information. That integration brings real efficiencies, but it also raises ethical concerns that anyone working with AI-driven content needs to address. The ethics of AI in content creation is no longer a fringe discussion but a central challenge, touching authenticity, copyright, transparency, misinformation, deepfakes, and inherent bias 1.

For content creators, marketers, and especially SaaS founders and indie hackers building tools in this space, navigating these challenges responsibly is paramount. The stakes are incredibly high; the market for AI content is projected to soar to an impressive $80.12 billion by 2030, underscoring the urgency of establishing robust ethical frameworks now 3. Ignoring these foundational issues risks not only regulatory backlash but also a profound erosion of consumer trust, which is the lifeblood of any sustainable brand.

This report will delve into the critical ethical dilemmas spawned by AI's foray into content, offering actionable insights and real-world examples. Our goal is to equip you with the knowledge to balance AI's transformative potential with ethical responsibility, ensuring you can innovate confidently while upholding truth, trust, and human values in an increasingly automated world. Building a trusted brand in the AI era hinges on proactively addressing these concerns, turning potential pitfalls into pathways for responsible growth and innovation.

Core Strategy: Cultivating Trust in an AI-Augmented World

Navigating the brave new world of AI-driven content demands a proactive strategy, not just a reactive one. For indie hackers and AI entrepreneurs, cultivating trust isn't just a nice-to-have; it's the bedrock of sustainable growth and authentic community building. This involves a deliberate commitment to transparency, robust human oversight, and an unwavering dedication to authenticity. These three pillars serve as a framework for balancing the immense efficiency AI offers with the critical human elements that foster genuine connection and credibility.

Enhance Transparency in AI Use

Transparency is paramount in AI-assisted content creation. Being open about which AI tools you use tends to support customer trust rather than undermine it. The point is not to hide AI but to acknowledge its role openly and honestly. Content creators should explicitly label AI-generated content, for example with a clear disclaimer at the top or bottom of a piece, so that audience expectations are set from the outset. For instance, a blog post could carry a small tag like "AI-assisted outline; sources checked and final judgment is mine." Such transparency is especially vital for content where authenticity is expected, like personal stories, or when synthetic media might be mistaken for reality. Beyond labeling, communicating internal AI policies and disclosing the specific AI models used (for example, "Generated using GPT-4") fosters open dialogue. This approach also requires candidly explaining AI's limitations, such as its struggles with nuanced context, its potential for factual errors, and its inherent biases, and providing clear information about how data is collected and used in AI applications. Finally, establishing feedback mechanisms allows users to flag issues, demonstrating a commitment to accuracy and continuous improvement.
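The labeling practice described above can be sketched in a few lines of Python. This is only an illustration: the `AIDisclosure` class, its field names, and the wording of the label are assumptions made for this example, not an established standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AIDisclosure:
    """How AI was involved in one piece of content (hypothetical schema)."""
    ai_role: str                  # e.g. "outline", "first draft", "images"
    model: Optional[str] = None   # e.g. "GPT-4"; None if not disclosed
    human_reviewed: bool = False  # has a person checked sources and edited?

    def label(self) -> str:
        """Build a short reader-facing disclosure line."""
        text = f"AI-assisted {self.ai_role}"
        if self.model:
            text += f" (generated using {self.model})"
        if self.human_reviewed:
            text += "; sources checked and final edit by a human"
        else:
            text += "; not yet reviewed by a human"
        return text + "."


post = AIDisclosure(ai_role="outline", model="GPT-4", human_reviewed=True)
print(post.label())
```

Keeping the disclosure as structured data rather than free text makes it easy to render the same facts consistently at the top of a post, in a footer, or in page metadata.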

Implement Robust Human Oversight

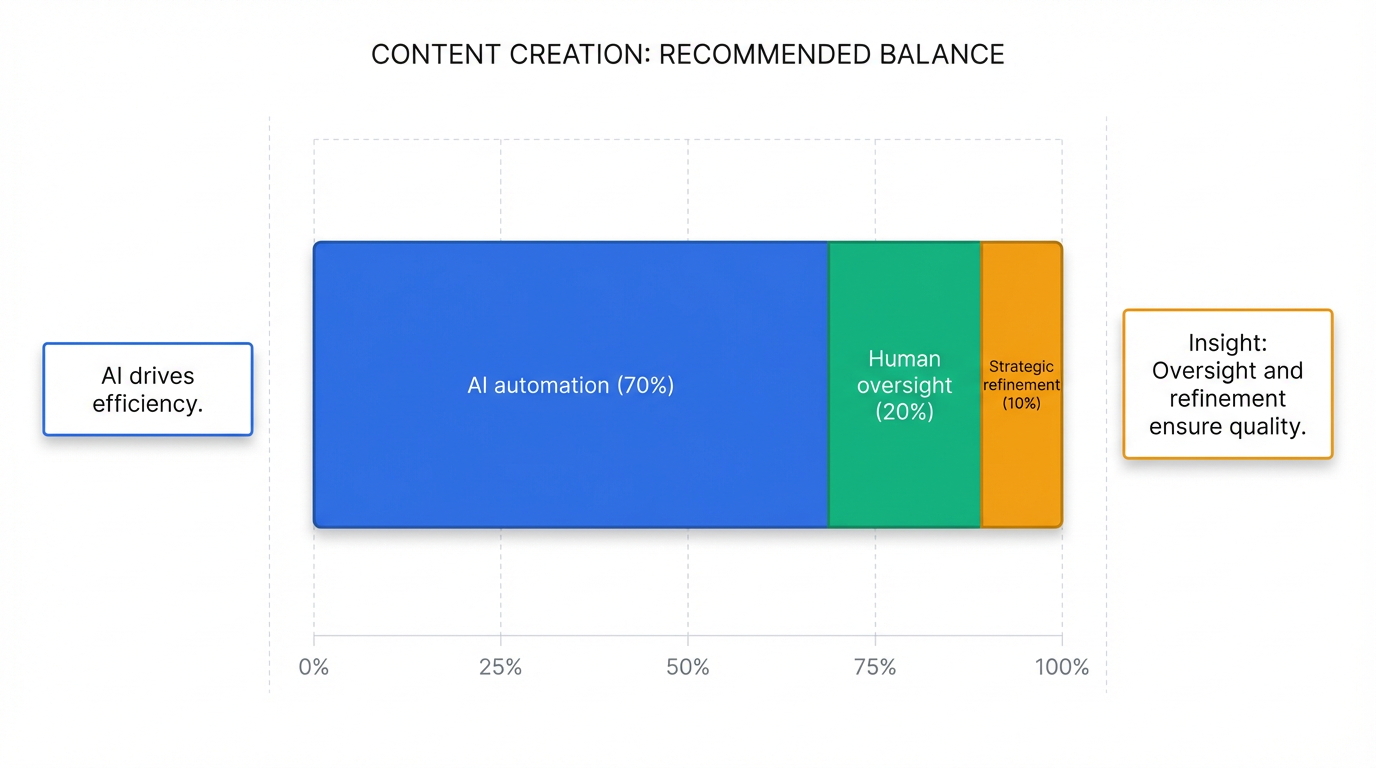

While AI excels at pattern recognition and data processing, human judgment, creativity, and contextual understanding remain irreplaceable. Effective AI integration therefore requires robust human oversight, so that AI never operates unchecked. This is where the "human-in-the-loop" approach becomes essential. For instance, a marketing team might use AI to generate multiple ad copy variations, but a human editor then reviews, refines, and selects the best options, ensuring alignment with brand voice and ethical guidelines. Such oversight also serves as a crucial safeguard against AI "hallucinations," where the tool produces factually incorrect or misleading outputs. AI should augment human capabilities, freeing up resources for higher-value activities like strategic planning and creative ideation, rather than replacing critical decision-making. Incorporating thorough fact-checking processes against reputable sources is non-negotiable. Additionally, conducting bias and fairness scans helps identify and mitigate language biases or stereotypes in AI outputs, especially when addressing diverse audiences. Assigning clear roles for managing AI tools and outputs, alongside risk-tiering content, ensures accountability and an appropriate level of human review for each level of risk. One suggested rule of thumb for the split is 70% AI automation, 20% human oversight, and 10% strategic refinement.
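Risk-tiering can be made concrete as a small publishing gate. The sketch below is one possible shape, assuming three tiers and four review steps; the tier names, step names, and the mapping between them are hypothetical and should be replaced with your own policy.

```python
from enum import Enum
from typing import Set


class RiskTier(Enum):
    LOW = "low"        # e.g. headline brainstorming
    MEDIUM = "medium"  # e.g. blog posts, ad copy
    HIGH = "high"      # e.g. health, finance, or legal topics


# Assumed mapping of tier to mandatory review steps; adjust to your own policy.
REQUIRED_REVIEWS = {
    RiskTier.LOW: {"human_edit"},
    RiskTier.MEDIUM: {"human_edit", "fact_check", "bias_scan"},
    RiskTier.HIGH: {"human_edit", "fact_check", "bias_scan", "expert_review"},
}


def missing_reviews(tier: RiskTier, completed: Set[str]) -> Set[str]:
    """Return the review steps still outstanding for this tier."""
    return REQUIRED_REVIEWS[tier] - completed


def can_publish(tier: RiskTier, completed: Set[str]) -> bool:
    """A draft is publishable only when no required review is missing."""
    return not missing_reviews(tier, completed)


print(can_publish(RiskTier.HIGH, {"human_edit", "fact_check"}))
print(sorted(missing_reviews(RiskTier.HIGH, {"human_edit", "fact_check"})))
```

Every tier includes `human_edit`, which reflects the human-in-the-loop principle: no tier lets AI output go out without a person looking at it.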

Uphold Authenticity and Navigate Complexities

Maintaining authenticity is a long-term play, fundamental for building enduring trust and safeguarding brand reputation. This means an unwavering commitment to truthfulness and integrity. AI should never be used to invent quotes, case studies, examples, credentials, or statistics, and any such material that appears in an AI draft must be verified by a human against credible sources before it is published. Similarly, promotional claims that could mislead audiences must be strictly avoided. While AI can capably mimic various tones, human review is crucial to preserve a unique brand voice and keep content from becoming generic, so that it genuinely resonates with the target audience. Creative teams should be rewarded for originality, which helps distinguish their content in an increasingly AI-generated landscape. A critical aspect of authenticity is proactively addressing misinformation and bias, which involves identifying and mitigating stereotyping, unfair framing, or potential harm in AI-generated outputs. For sensitive topics like health, finance, or legal advice, AI content should only be used as a preliminary draft and must undergo thorough verification by subject-matter experts, given AI's propensity to produce plausible but incorrect statements. Copyright is a further complication: in the U.S., AI-generated content is generally not copyrightable without significant human authorship. Creators must ensure their AI outputs do not infringe upon existing intellectual property and understand that copyright protection applies to human creative contributions. Organizations must educate their staff on the ethical use of AI and proper attribution practices to navigate these evolving complexities responsibly.

Real Example: The Indie Hacker's Blueprint for Ethical AI Content

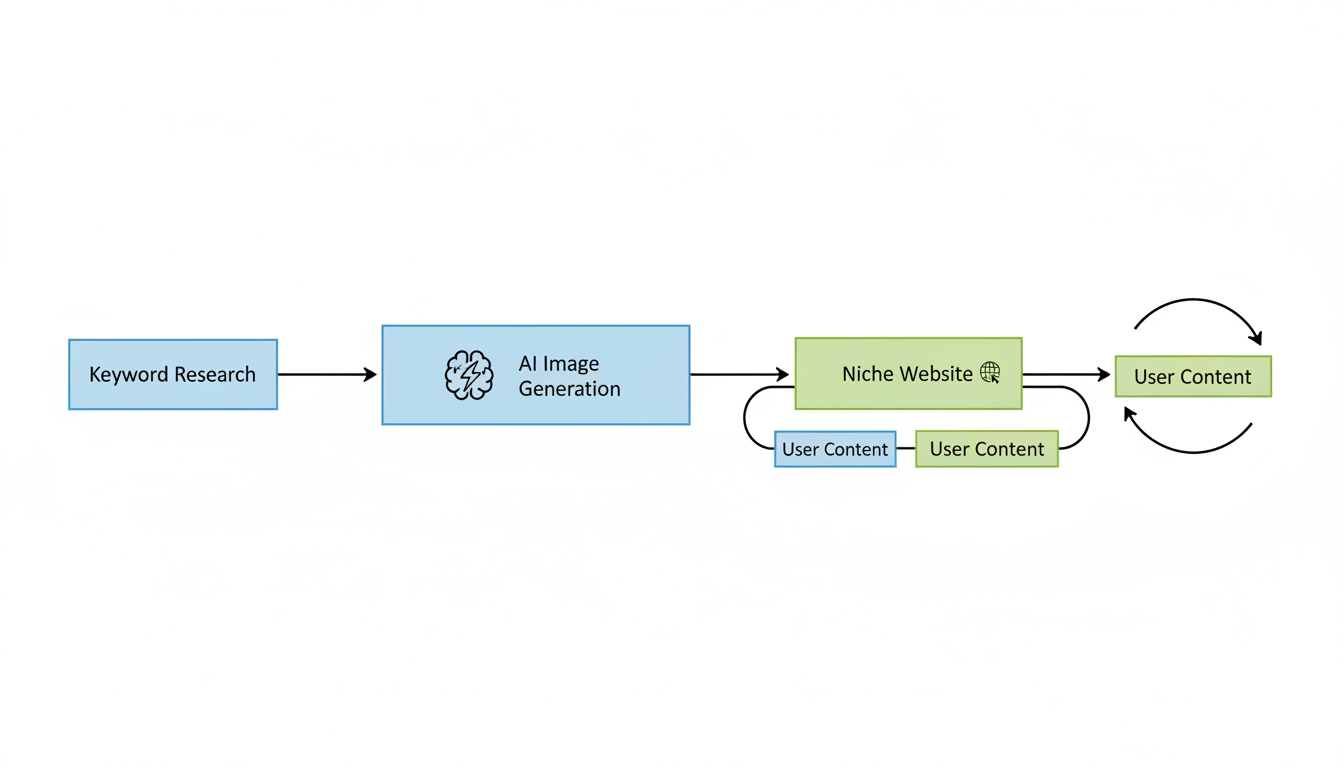

Having explored core strategies for integrating AI ethically, let's look at a concrete example of an indie hacker who successfully put these principles into practice. Danny Postma, known for founding Headshot Pro, embarked on an intriguing content project centered around "AI tattoos" that serves as a masterclass in ethical AI adoption for rapid growth 4. His approach demonstrates how transparency and authenticity can be maintained even when AI drives significant content generation.

Postma leveraged AI extensively to produce content at scale for his niche website. He utilized tools like Ahrefs for efficient keyword research, identifying high-potential topics within the AI tattoo space 4. For the actual content, primarily images, he relied on generative AI models such as Stable Diffusion and Midjourney, enabling him to rapidly create hundreds of thousands of distinct visual pieces 4. This strategy allowed for an unparalleled pace of content creation, showcasing AI's potential to accelerate market entry.

One of the most compelling aspects of Postma's project was its inherent transparency. The very name, "AI tattoos," clearly communicated that the content was AI-generated, setting audience expectations from the outset 4. Furthermore, he developed a tool that empowered users to generate their own content and display it directly on the website, fostering user-generated contributions 4. This not only built a community but also deepened the implicit understanding of the content's AI origins.

Authenticity, in this context, was baked directly into the project's core. The niche itself, "AI-generated art," meant that the content was authentic to its stated purpose; it wasn't trying to pass off AI output as human creation where it wasn't appropriate 4. Postma prioritized a "first to market" approach within this specific, AI-native content space, ensuring that his project was true to the emerging capabilities of generative AI 4. The focus on user interaction further reinforced a sense of genuine engagement with AI output.

The tangible results of Postma's "AI Tattoos" project underscore the viability of his ethical approach. Before its eventual sale, the project successfully achieved an impressive $10,000 in Monthly Recurring Revenue (MRR) 4. Beyond financial success, the rapid and transparent content generation strategy yielded significant audience reception, with Postma noting that "Google loves it, traffic pours in," indicating strong search engine performance and user engagement 4. This case study highlights that speed and scale, when coupled with thoughtful transparency and authentic application, can lead to remarkable outcomes for indie hackers.

Ethical AI Implementation: Practical Tools and Workflows for Indie Hackers

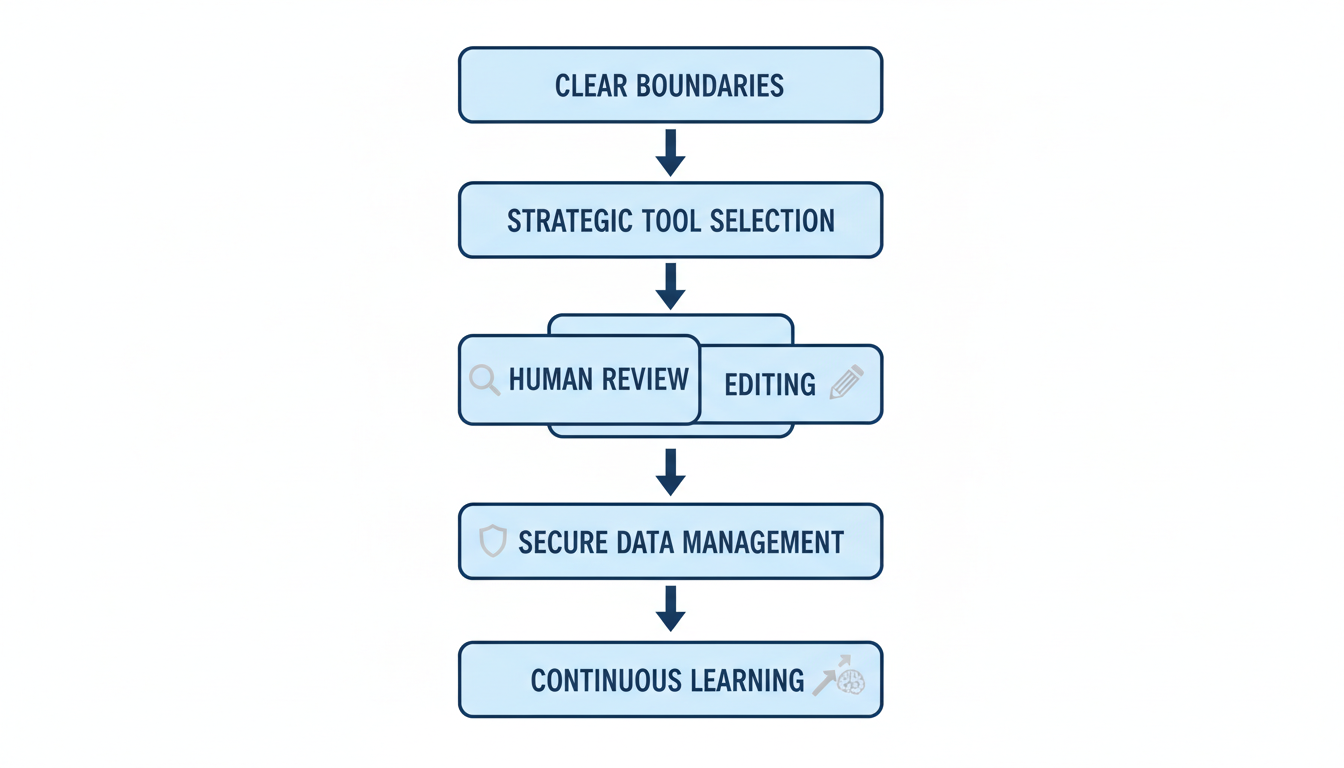

For indie hackers navigating the dynamic landscape of digital content, integrating AI tools ethically isn't just about compliance; it's about building sustainable trust and authenticity. The shift from theoretical ethical considerations to practical implementation requires a deliberate approach to tool selection and workflow design. By defining clear boundaries for AI use, particularly for low-risk, high-leverage tasks like brainstorming headlines or summarizing content, indie hackers can harness efficiency without compromising integrity. Conversely, highly sensitive areas such as crisis communications or legal materials should remain outside AI's purview, demanding human discretion 5.

Strategic tool selection is paramount, beginning with a thorough evaluation of privacy policies. Indie hackers should prioritize tools that offer opt-outs for model training or local-only processing, protecting sensitive project details from inadvertent data leakage. Features that enhance transparency, such as labeling AI-generated parts, or robust data privacy controls should be key ethical considerations when adopting new technologies 6. For cost-conscious indie hackers, platforms offering custom GPTs can provide specialized humanization or technical content processing more affordably than dedicated SaaS solutions 7.

When it comes to writing, AI can streamline literature reviews and initial drafting, but human oversight remains indispensable for voice preservation and factual accuracy. Tools like "ZeroGPT - AI Content Detector & Humanizer GPT" or "GPTZero - AI Detector & Text Humanizer" are designed to refine AI outputs, ensuring content retains a unique human voice and avoids generic, "AI-smell" characteristics 7. Every statistic or quote from AI-generated research, especially for high-risk topics, must undergo rigorous human fact-checking against authoritative sources. This mandatory review process ensures brand alignment and mitigates the risk of propagating inaccuracies or biases.

In design, tools like v0 by Vercel enable indie hackers to rapidly generate UI from text prompts, reducing the need for extensive design skills and accelerating product development 8. Similarly, Midjourney and other image generation tools allow for quick visual content creation, lowering barriers to entry for creative assets. For code generation, tools such as Cursor, GitHub Copilot, and Amazon CodeWhisperer accelerate development by generating boilerplate code and assisting with debugging. However, indie hackers must treat AI-generated code as potentially influenced by open-source licenses, requiring diligence in tracing snippet origins and documenting integration to manage IP concerns 6.

Furthermore, protecting confidentiality is crucial, meaning sensitive information should never be fed into general AI tools without explicit contractual assurances. Consistent ethical prompt engineering, coupled with an awareness of the data provenance powering AI models, helps guide outputs towards desired ethical standards. By implementing internal guidelines for AI use and providing ongoing training, indie hackers can cultivate a culture of responsible experimentation and continuous learning, ensuring their practices evolve with AI advancements.

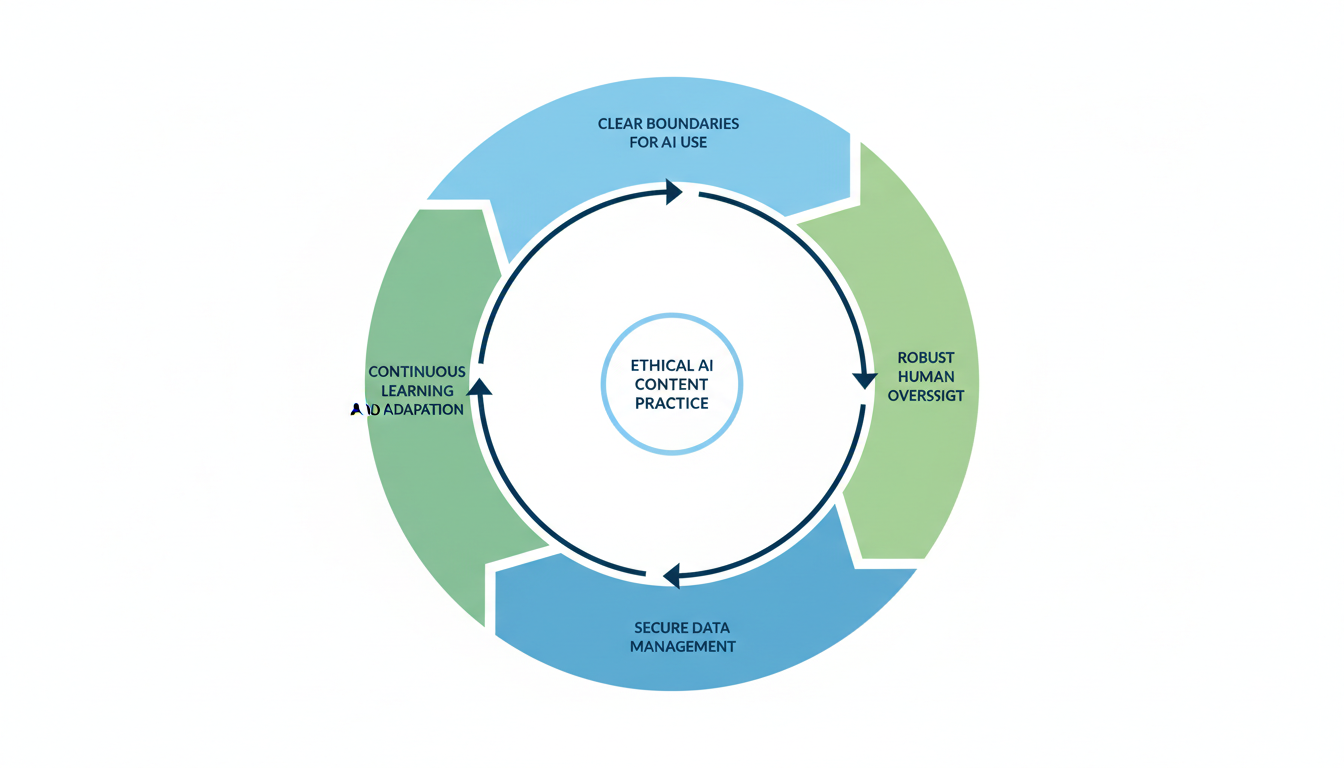

Next Steps: Building an Ethically Sound AI Content Practice

As indie hackers and AI entrepreneurs navigate the rapidly evolving landscape of AI-driven content creation, the path forward isn't just about leveraging powerful tools—it's about doing so responsibly. The benefits of AI in terms of efficiency are undeniable, yet the risks to trust and authenticity are equally significant. To truly thrive, integrating AI ethically isn't a mere afterthought; it's a strategic imperative that builds trust and fosters sustainable growth.

The first crucial step involves implementing clear boundaries for AI use within your workflows. Treat AI as a powerful assistant, not a replacement for human judgment. Delegate low-risk, high-leverage tasks such as brainstorming headlines, summarizing lengthy content, or refining tone for specific platforms. Conversely, always keep AI out of sensitive areas like crisis communications, executive messaging, or any content requiring profound original thought or deep ethical consideration 5. A deliberate "human-AI handoff" model, where human intervention is mandated at critical junctures, ensures accountability and maintains a vital connection to your brand's unique voice 5.
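One way to make these boundaries explicit is to write them down as a small routing table that your workflow consults before any AI tool is used. The following is a minimal sketch; the task names are hypothetical labels based on the examples in this article, and the three outcomes are an assumed convention.

```python
# Hypothetical task categories, based on the examples given in this article.
AI_ASSISTED_TASKS = {"headline_brainstorm", "summarization", "tone_refinement"}
HUMAN_ONLY_TASKS = {"crisis_communications", "executive_messaging", "legal_materials"}


def route_task(task: str) -> str:
    """Decide who drafts a task: 'ai_then_human', 'human_only', or 'needs_decision'."""
    if task in HUMAN_ONLY_TASKS:
        return "human_only"
    if task in AI_ASSISTED_TASKS:
        return "ai_then_human"  # AI drafts, a human still reviews before publishing
    # Unknown tasks are not silently allowed; someone has to classify them first.
    return "needs_decision"


for task in ("summarization", "crisis_communications", "pricing_page"):
    print(task, "->", route_task(task))
```

Defaulting unknown tasks to `needs_decision` rather than to AI keeps the handoff deliberate: a new kind of content gets classified by a person before it is ever delegated.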

Next, it's paramount to maintain robust human oversight and review of all AI-generated content. AI excels at data processing and pattern recognition, but human experts provide the indispensable elements of judgment, creativity, and contextual understanding 9. This "human-in-the-loop" approach means that every piece of AI-generated content must undergo review, editing, and verification by a human before publication. Establish rigorous fact-checking processes to verify claims against reputable sources and conduct bias scans to mitigate language biases or stereotypes within AI outputs. This isn't just about quality control; it's about safeguarding your brand's reputation and ensuring truthfulness 10.

Furthermore, prioritizing secure data management and ethical prompt engineering is non-negotiable. Be acutely aware that many AI tools collect user prompts and telemetry, which can inadvertently expose sensitive project details or violate privacy regulations 6. Never input confidential information, trade secrets, or personally identifiable information (PII) into general AI tools without explicit contractual assurances and robust security controls. When crafting prompts, be specific, align them with your brand voice and ethical guidelines, and word them so they do not steer the model toward biased outputs.
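A lightweight safeguard is to screen prompts for obvious PII before they leave your machine. The sketch below uses Python's standard `re` module with two deliberately simple patterns; the `redact` helper and the patterns are illustrative assumptions, and they will miss many real-world cases (names, addresses, API keys), so treat this as a first filter rather than a guarantee.

```python
import re
from typing import List, Tuple

# Deliberately simple, illustrative patterns; real PII detection needs far more.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(prompt: str) -> Tuple[str, List[str]]:
    """Replace matches with placeholders and report which kinds were found."""
    found = []
    for kind, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{kind.upper()} REDACTED]", prompt)
        if count:
            found.append(kind)
    return prompt, found


clean, kinds = redact("Reply to jane@example.com or call +1 415 555 0100 today")
print(clean)
print(kinds)
```

Returning the list of detected kinds alongside the cleaned prompt lets a workflow choose between sending the redacted text and stopping to ask a human.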

Building on these foundations, establish continuous learning and internal governance frameworks for AI. Develop clear internal guidelines that define acceptable AI practices, outline review processes, and assign accountability within your team. Offer hands-on training to empower your team with AI tools and foster ethical usage, encouraging responsible experimentation 5. The AI landscape is perpetually shifting, so staying updated on advancements, tool updates, and evolving industry standards is crucial to adapt your policies and practices effectively. This proactive approach ensures your operations remain compliant and competitive.

Finally, recognize that leveraging ethical AI practices offers a distinct competitive advantage and builds invaluable brand trust. In an age of increasing skepticism toward online content, transparency and authenticity are your strongest assets. Organizations that prioritize responsible AI are projected to see significantly higher customer satisfaction and improved loyalty 11. By being transparent about AI use, implementing robust human oversight, and upholding ethical principles, you not only mitigate risks but also enhance brand trust, reduce regulatory exposure, and attract top talent who value integrity. Embracing ethical AI isn't just the right thing to do; it's a strategic investment in the longevity and credibility of your venture.