Gemini 3.1 Pro: Unveiling Its Advanced Model-Making Prowess

Introduction: The Arrival of Gemini 3.1 Pro

Google officially introduced Gemini 3.1 Pro on Thursday, February 19, 2026, making it available in preview across various platforms 1. This significant announcement came through official Google channels, including Google's "The Keyword" blog and statements from Google DeepMind and Vertex AI product management 2.

This release marks Google's first ".1" increment for a Gemini model, signaling a focused intelligence upgrade rather than a broad feature expansion, and indicating a strategic shift towards more frequent, incremental updates 3. Gemini 3.1 Pro is positioned as a substantial advancement in core reasoning and problem-solving capabilities, designed to be smarter and more capable, particularly for complex tasks where simple answers are insufficient 1. It builds upon the Gemini 3 series, incorporating the advanced reasoning engine from Gemini 3 Deep Think 2.

Key Enhancements: Beyond the Surface

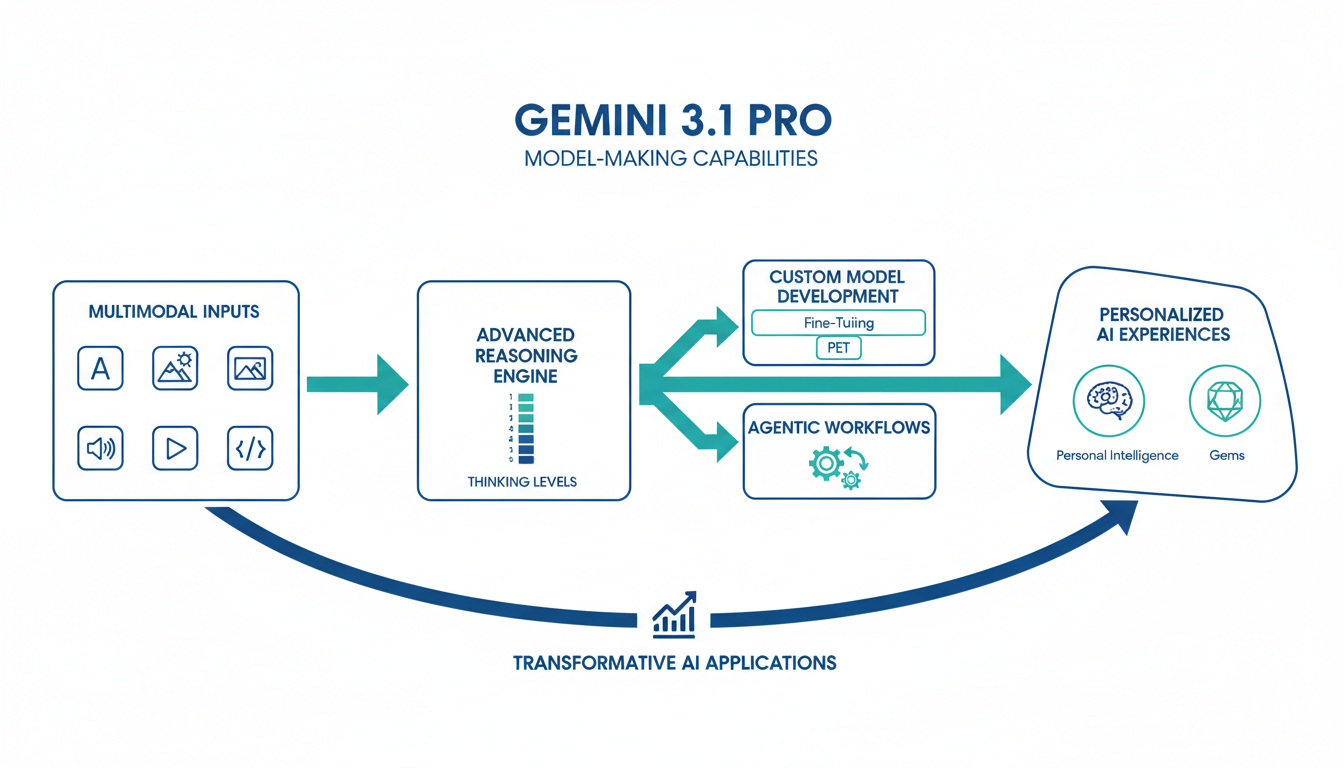

Gemini 3.1 Pro, a significant update in Google's flagship AI model series, introduces enhanced reasoning, advanced multimodal capabilities, and improved agentic workflows, building on the foundation of the Gemini 3 series. Announced in February 2026, this iteration focuses on deepening intelligence for complex problem-solving and is considered Google's most advanced model for such tasks at its release. Its design is geared towards bridging complex APIs and user-friendly interfaces, supporting agentic tooling improvements.

Advanced Reasoning Capabilities and Configurable Thinking Levels

Gemini 3.1 Pro is described as a "noticeably smarter, more capable baseline for complex problem-solving" 2, excelling at long-horizon, multi-step workflows and deep logical interpretation 4. A significant new feature is the introduction of configurable thinking levels (low, medium, high), allowing developers and users to control the computational effort the model applies to balance quality, latency, and cost. The high setting enables the model to behave like a "mini version of Gemini Deep Think," capable of multi-minute reasoning for complex mathematical or logical problems. These advancements are attributed to building upon the Deep Think research line and incorporating reinforcement learning (RL) techniques, with Deep Think using iterative reasoning to explore multiple hypotheses effectively.
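As a rough illustration of how a thinking level might be selected per request, the sketch below builds a request body without sending it. The field names `generationConfig`, `thinkingConfig`, and `thinkingLevel` are assumptions about the REST payload shape and should be checked against the current Gemini API reference; `build_request` is a hypothetical helper, not part of any SDK.

```python
import json

# The three levels named in the text above.
THINKING_LEVELS = ("low", "medium", "high")


def build_request(prompt: str, thinking_level: str = "high") -> dict:
    """Build a generateContent-style request body (field names are assumed)."""
    if thinking_level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinking level: {thinking_level!r}")
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {
            # Assumed nesting; verify against the API reference before use.
            "thinkingConfig": {"thinkingLevel": thinking_level},
        },
    }


if __name__ == "__main__":
    # A quick lookup can use "low"; a multi-step proof is a case for "high".
    body = build_request("Prove that the square root of 2 is irrational.", "high")
    print(json.dumps(body, indent=2))
```

Validating the level before building the payload keeps a typo such as `"max"` from reaching the API, where it would cost a round trip to discover.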

Enhanced Multimodal Understanding and Processing

The model natively understands and processes a diverse range of data inputs, including text, images, videos, audio, and code . This capability allows for bringing disparate data into a single view and visualizing complex topics efficiently . Specific improvements include multimodal function calling, which enables tools to return images for native model processing 5.

Optimized Agentic Workflows and Code Generation

Gemini 3.1 Pro is optimized for production agents, demonstrating improved tool calling, planning, and execution for autonomous or semi-autonomous tasks . It exhibits strong performance in competitive coding and real-world agentic tasks 4. A notable capability is its ability to generate website-ready, animated SVGs directly from text prompts, producing code-based animations that maintain crispness at any scale with small file sizes . Furthermore, the model aids in complex system synthesis, creative coding, and interactive design .

Expanded Context Window and Efficiency Improvements

Gemini 3.1 Pro supports an extensive context window of up to 1 million input tokens and 64,000 output tokens. This expanded capacity facilitates the effective processing of long documents, multi-file coding, and complex queries. The model also shows improved token usage in complex workflows, which contributes to reduced costs and faster processing times. Notably, the API pricing remains consistent with its predecessor, at $2 per million input tokens and $12 per million output tokens.
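The pricing and limits quoted above lend themselves to a quick back-of-the-envelope estimate. The sketch below applies the two per-million-token rates as flat prices; it deliberately ignores any separate tier for very long prompts, which this article does not quantify, so treat the result as a lower-bound illustration. The function name is hypothetical.

```python
# Rates and limits as quoted in the text above.
INPUT_USD_PER_MTOK = 2.00
OUTPUT_USD_PER_MTOK = 12.00
MAX_INPUT_TOKENS = 1_000_000
MAX_OUTPUT_TOKENS = 64_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request at the flat quoted rates."""
    if not 0 <= input_tokens <= MAX_INPUT_TOKENS:
        raise ValueError("input_tokens is outside the stated context window")
    if not 0 <= output_tokens <= MAX_OUTPUT_TOKENS:
        raise ValueError("output_tokens is outside the stated output limit")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


if __name__ == "__main__":
    # A 150k-token prompt with a 4k-token answer.
    print(f"${estimate_cost_usd(150_000, 4_000):.3f}")
```

Because output tokens are six times the price of input tokens at these rates, long generated answers can dominate the bill even when the prompt is much larger.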

Deep Dive: Exceptionally Strong Model-Making Capabilities

Gemini 3.1 Pro, the latest iteration in Google's Gemini 3 series, represents a significant advancement in AI capabilities, particularly tailored for agentic AI markets. Its design empowers users to build and adapt models for specialized applications through robust custom model creation, advanced fine-tuning, and personalized experiences 6. This section delves into the specific tools, APIs, methodologies, and inherent strengths that make Gemini 3.1 Pro's model-making capabilities exceptionally strong.

Core Strengths Driving Model-Making

Gemini 3.1 Pro's prowess is distinguished by its focus on agentic workflows, advanced reasoning, and multimodal capabilities, signifying a shift from traditional conversational models to highly operational ones .

- Agentic AI Focus: Gemini 3.1 Pro is positioned as a foundational engine for autonomous agents, capable of handling complex tasks such as navigating file systems, executing code, and performing scientific reasoning .

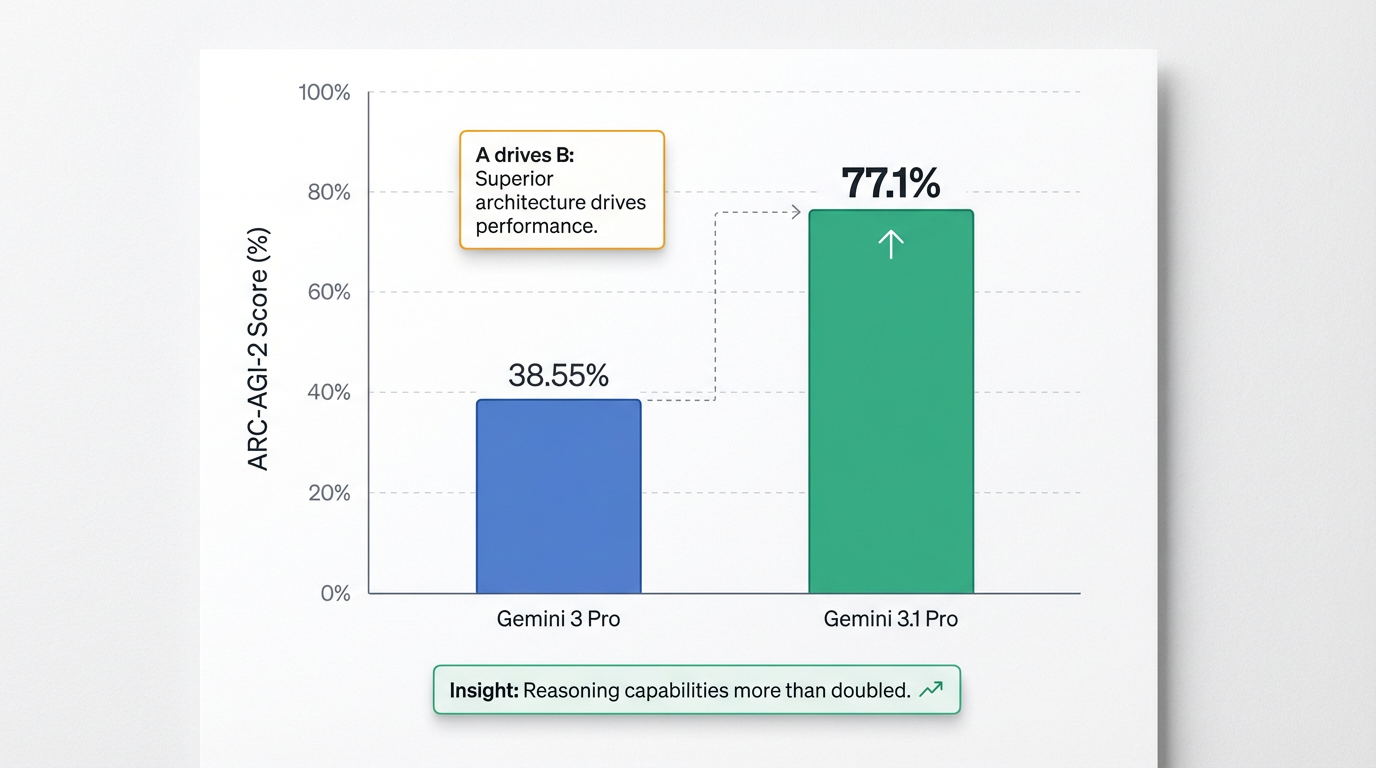

- Superior Reasoning Performance: The model achieved a verified score of 77.1% on the ARC-AGI-2 benchmark, which more than doubles the reasoning performance of its predecessor. This demonstrates a robust capacity for solving novel logic patterns beyond mere pattern matching. Furthermore, it exhibits expert-level performance on graduate-level scientific reasoning, scoring 94.3% on GPQA Diamond 6.

- Massive Multimodal Context Window: Featuring an industry-leading 1 million token input context window, Gemini 3.1 Pro can process extensive data, including entire code repositories or thousands of pages of text, in a single pass. It natively supports diverse inputs such as text, images, audio, video, PDFs (up to 1,000 pages), and code .

- Granular Reasoning Control: The introduction of the `thinking_level` parameter offers unprecedented control over the model's internal reasoning depth (low, medium, high), allowing developers to optimize for response quality, reasoning complexity, latency, and cost. "Medium" is a new addition to 3.1 Pro.

- Persistent Context with Thought Signatures: Encrypted "thought signatures" are critical for maintaining context and ensuring reliability in multi-turn agentic workflows by preserving the model's intermediate reasoning states.

- Efficiency and Cost-Effectiveness: Google positions Gemini 3.1 Pro as an "efficiency leader," offering competitive pricing, especially for prompts under 200k tokens. Fine-tuning with techniques like LoRA can significantly reduce input token costs and latency for high-volume operations .

- Creative Coding and Synthesis: The model excels at "vibe coding," translating high-level aesthetic or thematic instructions into functional, production-ready code. Examples include generating animated SVGs directly from text prompts, building live aerospace dashboards from telemetry, or creating 3D interactive simulations .

- Enhanced Multimodal Understanding: Its 1M token window allows for simultaneous ingestion and cross-referenced analysis of complex multimodal data, transforming knowledge work in fields like legal review and academic research .

Tools, APIs, and Methodologies for Custom Model Development

Gemini 3.1 Pro offers a comprehensive suite of tools and APIs for custom model development, facilitating the creation of sophisticated AI agents and specialized applications:

- Dedicated Endpoints: The `gemini-3.1-pro-preview-customtools` API endpoint is optimized for developers who combine bash commands with custom functions, specifically tuned to prioritize custom tools like `view_file` or `search_code`, making it a reliable backbone for autonomous coding agents.

- Development Platforms & Access Points: The Gemini API provides general access for developers and natively supports multi-modal inputs. Developers can access 3.1 Pro in preview via Google AI Studio, offering an intuitive interface for model interaction and tuning. Vertex AI is available for enterprise users, providing robust features for model deployment and management. Google Antigravity serves as Google's dedicated agentic development platform, deeply integrated with Gemini 3.1 Pro. Additional avenues for developer interaction include Gemini CLI and Android Studio 7. Firebase AI Logic offers client SDKs (Swift, Kotlin/Java, JavaScript, Dart, Unity) for direct calls to the Gemini API from mobile and web applications, offering security features like App Check and integration with Firebase services (e.g., Cloud Storage, Cloud Firestore) 8. Genkit, Firebase's open-source framework, supports server-side AI development with advanced AI features and local tooling 8.

- Built-in Capabilities & Tooling: The Gemini API includes managed tools such as Google Search, Google Maps, Code Execution, URL Context, Computer Use (Preview), and File Search 9. Developers can define Custom Tools (Function Calling) using OpenAPI schemas, which the model can then invoke autonomously . Structured Outputs enable generating model responses that adhere to specific schemas, useful for rendering custom UIs, and can be combined with built-in tools 9. Gemini also integrates with leading open-source agent frameworks 9.

- Architectural Features for Customization: Beyond the `thinking_level` parameter and Thought Signatures, developers benefit from Media Resolution Controls for efficient handling of multimodal inputs, where they can specify `media_resolution_high` (1,120 tokens per image) or `media_resolution_low` (70 tokens per frame), aiding in token budget management. Multimodal Function Responses can now incorporate objects like images and PDFs, in addition to text. Streaming Function Calling improves user experience by allowing partial function call arguments to be streamed during tool use.
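To make the custom-tool flow in the list above more concrete, the following sketch declares the two tools the text names, `view_file` and `search_code`, in a JSON-schema style and routes a model-issued call to a local handler. The parameter names (`path`, `pattern`, `root`), the shape of the call dictionary, and the `dispatch` helper are all illustrative assumptions rather than the documented behavior of the endpoint.

```python
import re
from pathlib import Path

# Declarations a client might send alongside a prompt (shape is assumed).
TOOL_DECLARATIONS = [
    {
        "name": "view_file",
        "description": "Return the text of a file in the workspace.",
        "parameters": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    },
    {
        "name": "search_code",
        "description": "Find lines matching a regex in Python files under a directory.",
        "parameters": {
            "type": "object",
            "properties": {
                "pattern": {"type": "string"},
                "root": {"type": "string"},
            },
            "required": ["pattern", "root"],
        },
    },
]


def view_file(path: str) -> str:
    return Path(path).read_text(encoding="utf-8")


def search_code(pattern: str, root: str) -> list[str]:
    regex = re.compile(pattern)
    hits = []
    for file in sorted(Path(root).rglob("*.py")):
        lines = file.read_text(encoding="utf-8").splitlines()
        for lineno, line in enumerate(lines, start=1):
            if regex.search(line):
                hits.append(f"{file.name}:{lineno}: {line.strip()}")
    return hits


HANDLERS = {"view_file": view_file, "search_code": search_code}


def dispatch(call: dict):
    """Run one call of the assumed form {"name": ..., "args": {...}}."""
    handler = HANDLERS.get(call["name"])
    if handler is None:
        raise KeyError(f"model requested an undeclared tool: {call['name']}")
    return handler(**call["args"])
```

In an agent loop, the value returned by `dispatch` would be sent back to the model as the function response for the next turn. Rejecting undeclared tool names keeps the agent from executing anything outside the declared set.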

Advanced Fine-Tuning Techniques

Gemini models support various sophisticated fine-tuning strategies to adapt them for specialized applications with high precision and efficiency:

- Parameter Efficient Tuning (PET): Available through Google AI Studio and Vertex AI, PET (specifically Low-Rank Adaptation, or LoRA, on Vertex AI) significantly reduces the complexity, cost, and data requirements of traditional fine-tuning. This technique produces high-quality customized models with lower latency and can be effective with a few hundred data points (100 or more are recommended).

- Supervised Fine-Tuning (SFT): This technique improves model performance by training it on a labeled dataset that demonstrates desired behaviors or tasks . SFT is suitable for tasks such as classification, sentiment analysis, entity extraction, summarization, and generating domain-specific queries 10. It is supported by Gemini 2.5 Pro, 2.5 Flash, 2.5 Flash-Lite, 2.0 Flash, and 2.0 Flash-Lite models . Crucially, data quality, emphasizing relevance, diversity, and accuracy, is paramount, with data preprocessing, particularly deduplication, vital to prevent memorization and improve generalization . Adding system or instance-level instructions helps the model condition its output and generalize to unseen instructions 11.

- Preference Tuning: This technique builds on SFT by incorporating human feedback data, enabling models to learn subjective user preferences that are difficult to define with explicit labels. This is available for Gemini 2.5 Flash and 2.5 Flash-Lite 10.

- Continuous Tuning: Allows for continued refinement of an already tuned model or checkpoint by introducing more epochs or additional training examples 10. Supported models include Gemini 2.5 Pro, 2.5 Flash, and 2.5 Flash-Lite 10.

- Tuning Checkpoints: Provides the ability to save tuning progress, compare performance, and select the most effective checkpoint 10.

- Hyperparameter Management: For Gemini 3, a lower learning rate (0.05-0.1) is recommended to preserve pre-trained reasoning, and typically 3-5 epochs are used to prevent overfitting 12.

- "Reasoning Protection" Check: During fine-tuning, it is advised to include approximately 20% of training data with Chain-of-Thought reasoning in the output to prevent "Catastrophic Forgetting" and ensure the model retains its "Deep Think" capabilities 12.

- Multi-modal Fine-Tuning: Leveraging Gemini 3's native multi-modal capabilities, fine-tuning can extend to images (e.g., for visual quality control in manufacturing) and video (e.g., for automated video-to-social repurposing) 12.
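Several of the recommendations in the list above (deduplicate the data, aim for 100 or more examples, keep roughly 20% of outputs with Chain-of-Thought reasoning) can be checked mechanically before a tuning job is submitted. The sketch below is one way to do that; the record fields `prompt`, `response`, and `has_cot` are a hypothetical schema chosen for illustration, not a format required by any tuning service.

```python
def _normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivial variants count as duplicates.
    return " ".join(text.lower().split())


def deduplicate(examples: list[dict]) -> list[dict]:
    """Drop examples whose normalized prompt and response were already seen."""
    seen = set()
    unique = []
    for example in examples:
        key = (_normalize(example["prompt"]), _normalize(example["response"]))
        if key not in seen:
            seen.add(key)
            unique.append(example)
    return unique


def cot_share(examples: list[dict]) -> float:
    """Fraction of examples flagged as containing Chain-of-Thought reasoning."""
    if not examples:
        return 0.0
    return sum(1 for e in examples if e.get("has_cot")) / len(examples)


def check_dataset(examples: list[dict], min_examples: int = 100,
                  min_cot_share: float = 0.20) -> dict:
    """Summarize whether a dataset meets the thresholds described in the text."""
    unique = deduplicate(examples)
    share = cot_share(unique)
    return {
        "unique_examples": len(unique),
        "cot_share": share,
        "enough_examples": len(unique) >= min_examples,
        "enough_cot": share >= min_cot_share,
    }
```

Measuring the Chain-of-Thought share after deduplication matters: if the duplicates are concentrated in one kind of example, the ratio in the raw file can differ noticeably from what the model is actually trained on.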

| Tuning Technique | Purpose | Applicable Gemini Models |
|---|---|---|
| Parameter Efficient Tuning (PET) (LoRA) | Reduces complexity, cost, and data requirements for fine-tuning; produces high-quality customized models with lower latency; effective with 100+ data points. | Gemini models (via Google AI Studio, Vertex AI) |
| Supervised Fine-Tuning (SFT) | Improves model performance by training on labeled datasets demonstrating desired behaviors or tasks (e.g., classification, sentiment analysis, entity extraction, summarization, query generation). | Gemini 2.5 Pro, 2.5 Flash, 2.5 Flash-Lite, 2.0 Flash, 2.0 Flash-Lite |
| Preference Tuning | Builds on SFT by incorporating human feedback data, enabling models to learn subjective user preferences. | Gemini 2.5 Flash, 2.5 Flash-Lite |
| Continuous Tuning | Allows for continued refinement of an already tuned model or checkpoint by introducing more epochs or additional training examples. | Gemini 2.5 Pro, 2.5 Flash, 2.5 Flash-Lite |

Personalization Options and Mechanisms

Gemini 3.1 Pro enhances personalization through features designed to securely integrate user-specific contexts, offering tailored and private experiences:

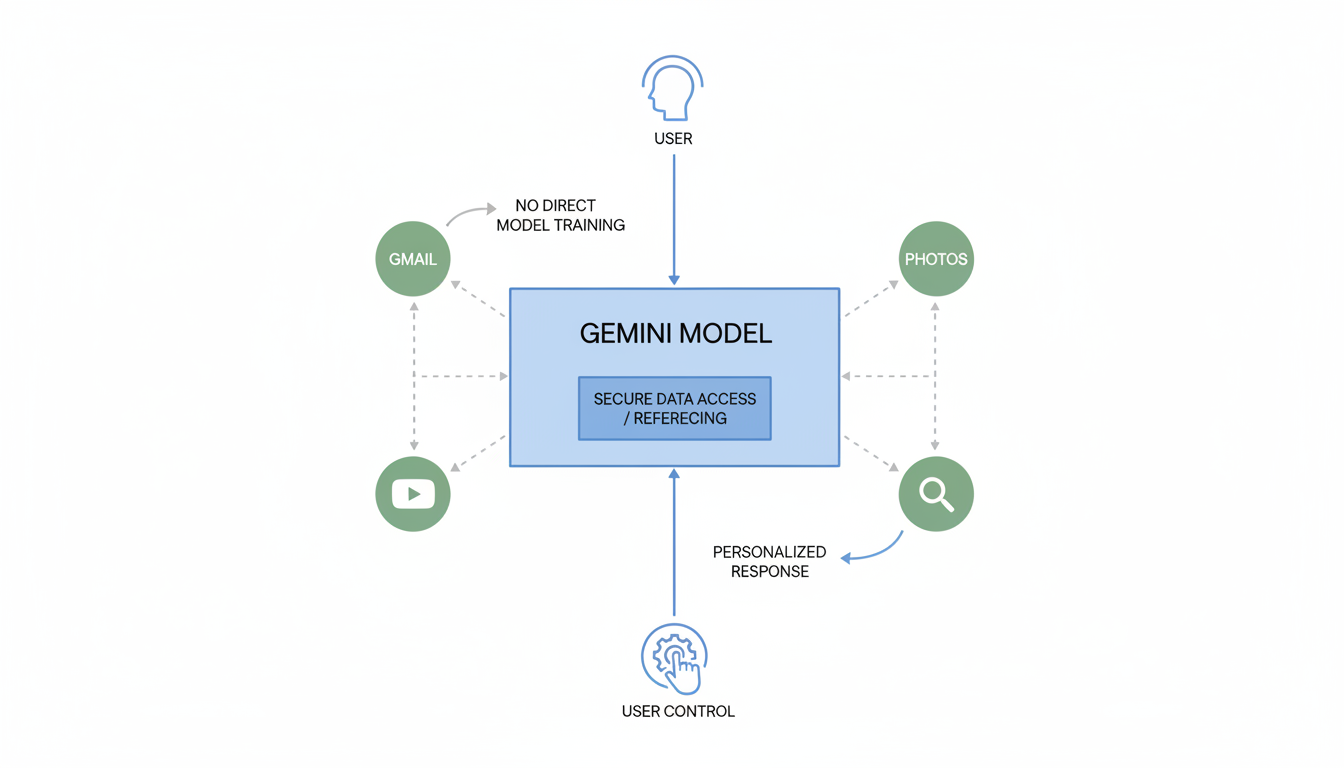

- Personal Intelligence: This beta feature, available to Google AI Pro/Ultra subscribers in the U.S., securely connects Gemini to a user's Google apps, such as Gmail, Photos, YouTube, and Search . Gemini accesses data from linked apps to fulfill specific requests, like finding tire sizes based on travel photos or summarizing emails 13. Crucially, this data is referenced to provide personalized replies but is not directly used to train the underlying model, ensuring privacy . Users have full control over which apps to link and can disable personalization at any time, with Gemini attempting to reference its sources for transparency . This enables context-aware assistance for tasks such as travel planning based on past preferences or organizing emails .

- Gems: Users can create custom versions of Gemini, called Gems, which can be personalized to act as experts on any topic (e.g., a learning coach or brainstorming partner) without requiring coding .

- Custom Instructions/Preferences: Users can instruct Gemini to remember specific interests and preferences, leading to more relevant and helpful responses over time 14.

- Conversation History: Gemini utilizes past chat history to craft responses, allowing for seamless continuation of discussions and summarization of previous topics 14.

- NotebookLM: Offers an enhanced experience with personalized notebook customization, advanced sharing, and usage analytics 15.

Key Examples and Use Cases

The advanced model-making capabilities of Gemini 3.1 Pro translate into a diverse array of powerful examples and use cases:

- Autonomous Coding Agents: The `gemini-3.1-pro-preview-customtools` endpoint is specifically designed for developers building agents that require precise tool usage, such as viewing files or searching code, enabling more reliable autonomous coding workflows.

- Refactoring and Testing Code: Gemini 3.1 Pro can refactor Python functions to handle missing keys, ignore negative amounts, log invalid entries, include type hints and docstrings, and generate comprehensive unit tests for edge cases 16.

- Complex Problem Solving: The model demonstrates proficiency in multi-step logical reasoning with constraint handling, solving intricate problems like assigning analysts to projects while adhering to multiple rules 16.

- Dynamic Asset Generation: It can create production-ready animated SVGs directly from text prompts, ensuring crisp scaling and minimal file sizes. It can also build interactive 3D simulations (e.g., starling murmurations with hand-tracking) and real-time aerospace dashboards .

- "Vibe Coding" for UI/UX: Gemini 3.1 Pro translates abstract concepts, such as literary themes (e.g., "Wuthering Heights"), into functional code for modern portfolio websites, reflecting atmospheric tones in design elements like color palettes and typography .

- Long-Context Analysis: Its 1M token context window allows it to ingest and synthesize information simultaneously from video transcripts, slides, code repositories, and research papers, facilitating cross-referenced questions and detailed synthesis reports .

- Personalized Assistance: With Personal Intelligence, Gemini can plan travel itineraries based on past trips, summarize emails, or provide contextual information from linked Google apps without direct data training .

- Custom AI Experts (Gems): Users can create specialized AI personas, such as a learning coach or brainstorming partner, for specific tasks and topics without requiring coding .

Performance and Impact: What This Means for Users

Gemini 3.1 Pro, the latest iteration in Google's flagship AI model series, signifies a substantial leap in artificial intelligence, focusing on enhanced reasoning, advanced multimodal capabilities, and improved agentic workflows . Released in February 2026, it is engineered for complex problem-solving and stands as Google's most advanced model for such tasks at its release . This powerful model is set to redefine how developers, enterprises, and consumers interact with AI, translating its advanced capabilities into tangible benefits and transformative potential across various domains.

Key Performance Benchmarks and Improvements

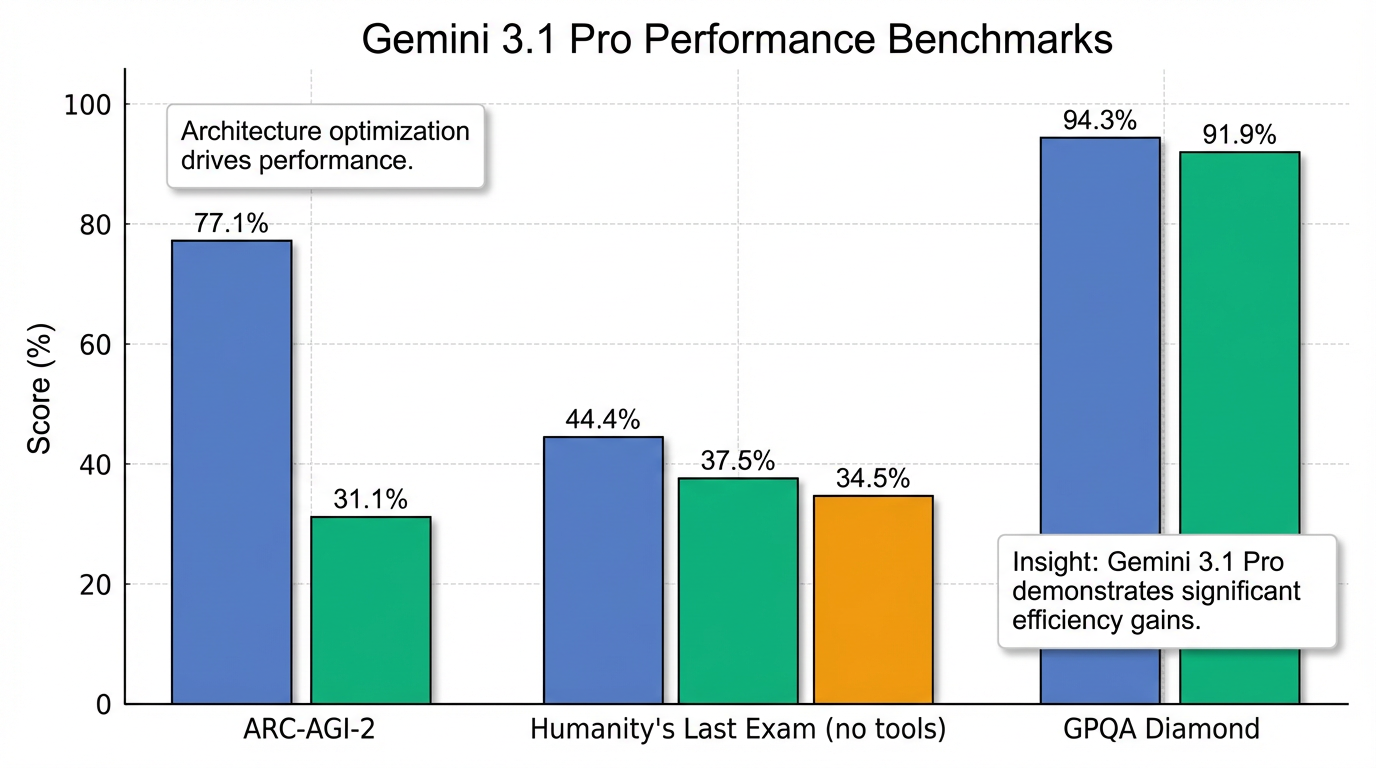

Gemini 3.1 Pro demonstrates significant performance advancements over its predecessors and competitors, particularly in reasoning and complex problem-solving:

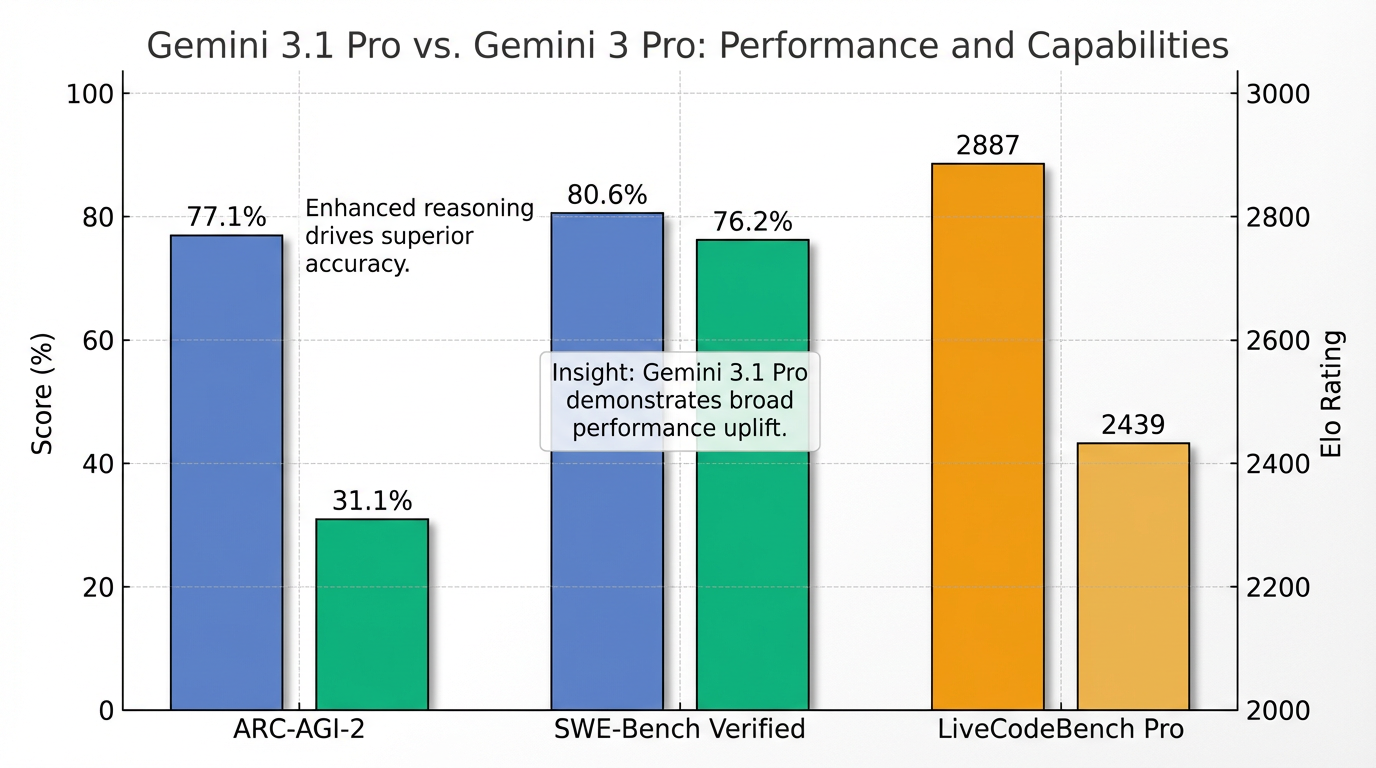

- ARC-AGI-2 Benchmark: It achieved a verified score of 77.1%, more than doubling Gemini 3 Pro's 31.1% score . This indicates a profound improvement in the model's ability to acquire new skills and discern novel logic patterns .

- Humanity's Last Exam: The model scored 44.4% without tools, an improvement over Gemini 3 Pro's 37.5%, and surpassed OpenAI's GPT-5.2 (34.5%) . With tools enabled, it reached 51.4%, closely trailing Claude Opus 4.6 (53.1%) 17.

- SWE-Bench Verified (Agentic Coding): Gemini 3.1 Pro achieved 80.6%, outperforming Gemini 3 Pro's 76.2% . However, on SWE-Bench Pro (Public), GPT-5.3-Codex (56.8%) slightly edged out Gemini 3.1 Pro (54.2%) 17.

- GPQA Diamond (Scientific Knowledge): It scored an impressive 94.3%, surpassing Gemini 3 Pro's 91.9% .

- LiveCodeBench Pro: The model achieved an Elo rating of 2887, a significant increase from Gemini 3 Pro's 2439 .

- Agentic Benchmarks: Showed substantial improvements, with scores of 68.5% on Terminal-Bench 2.0 (vs. 56.9% for 3 Pro), 69.2% on MCP Atlas (vs. 54.1% for 3 Pro), and 85.9% on BrowseComp (vs. 59.2% for 3 Pro) 18.
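The percentage scores in the list above can be compared directly with a few lines of arithmetic. The sketch below uses only the Gemini 3 Pro and Gemini 3.1 Pro figures quoted in this section and reports both the absolute gain in percentage points and the ratio between the two scores. LiveCodeBench Pro is left out because it is reported as an Elo rating, not a percentage.

```python
# (Gemini 3 Pro, Gemini 3.1 Pro) scores in percent, as quoted above.
SCORES = {
    "ARC-AGI-2": (31.1, 77.1),
    "Humanity's Last Exam (no tools)": (37.5, 44.4),
    "SWE-Bench Verified": (76.2, 80.6),
    "GPQA Diamond": (91.9, 94.3),
    "Terminal-Bench 2.0": (56.9, 68.5),
    "MCP Atlas": (54.1, 69.2),
    "BrowseComp": (59.2, 85.9),
}


def gain(old: float, new: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, new/old ratio)."""
    return new - old, new / old


if __name__ == "__main__":
    for name, (old, new) in SCORES.items():
        points, ratio = gain(old, new)
        print(f"{name:34s} +{points:5.1f} pts  x{ratio:.2f}")
```

Looking at both numbers is useful: ARC-AGI-2 shows the only ratio above 2, which is what "more than doubling" refers to, while benchmarks that were already near saturation, such as GPQA Diamond, can only move by a few points.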

While Gemini 1.5 Pro is recognized for its breakthrough 2 million token long-context window, Gemini 3.1 Pro distinguishes itself by focusing on deeper reasoning within its 1 million token context, prioritizing raw intelligence and multimodal understanding as a refinement of the Gemini 3 series .

Practical Applications and Use Cases Across Industries

Gemini 3.1 Pro is designed for tasks where superficial answers are insufficient, necessitating advanced reasoning and deep contextual understanding . Its extensive capabilities open doors to a wide array of practical applications:

- Code Generation and Debugging: The model can analyze large code repositories (up to 30,000 lines), suggest modifications, debug complex codebases, and refactor Python functions to handle edge cases and generate comprehensive unit tests .

- Dynamic Asset Creation: It excels at "vibe coding," generating website-ready animated SVGs directly from text prompts, producing code-based animations that remain crisp at any scale with small file sizes . It can also build live aerospace dashboards from telemetry, interactive 3D simulations, or create user interfaces based on abstract literary themes .

- Complex Problem Solving: Gemini 3.1 Pro demonstrates proficiency in multi-step logical reasoning with constraint handling, capable of solving intricate problems such as assigning analysts to projects while adhering to multiple rules 16.

- Multimodal Analysis and Synthesis: With its 1 million token input context window, it can ingest and synthesize information from video transcripts, slides, code repositories, PDFs (up to 1,000 pages), and research papers simultaneously, enabling cross-referenced analysis and detailed synthesis reports . This transforms knowledge work in fields like legal review and academic research .

- Personalized Assistance: The "Personal Intelligence" feature, in beta for Google AI Pro/Ultra subscribers, securely connects Gemini to a user's Google apps (Gmail, Photos, YouTube, Search) to fulfill specific requests without training the underlying model on private data . This enables context-aware assistance for tasks like travel planning based on past preferences or summarizing emails . Users can also create "Gems," which are custom versions of Gemini personalized to act as experts on any topic without coding .

Tangible Benefits for Developers, Enterprises, and Consumers

Gemini 3.1 Pro's advancements translate into significant benefits for its diverse user base:

- For Developers: The model serves as a foundation for building advanced AI applications, agentic systems, and integrations within Integrated Development Environments (IDEs). Its optimized agentic workflows, improved tool calling, planning, and execution are crucial for autonomous or semi-autonomous tasks. The introduction of configurable thinking levels (`low`, `medium`, `high`) allows developers to fine-tune the model's computational effort, balancing quality, latency, and cost according to project needs.

- For Enterprises: Businesses can leverage Gemini 3.1 Pro to solve tougher problems, synthesize large datasets, conduct complex data visualization, automate financial processes, enhance spreadsheet applications, and perform deep research. Its capacity for intricate system synthesis and creative coding aids in innovation and efficiency.

- For Consumers: Users of the Gemini app and NotebookLM benefit from enhanced capabilities for complex queries, with higher usage limits available for Google AI Pro and Ultra subscribers . Features like "Personal Intelligence" and "Gems" offer deeply personalized and contextual AI assistance, making daily tasks more efficient and tailored .

Efficiency and Cost-Effectiveness of Gemini 3.1 Pro

Efficiency is a core aspect of Gemini 3.1 Pro's design. The model shows improved token usage in complex workflows, contributing to reduced costs and faster processing times . Its API pricing remains competitive, unchanged from its predecessor at $2 per million input tokens and $12 per million output tokens . Google positions Gemini 3.1 Pro as an "efficiency leader," offering competitive pricing, especially for prompts under 200k tokens 6. Furthermore, advanced fine-tuning techniques like Parameter Efficient Tuning (PET) using LoRA can significantly reduce input token costs and latency for high-volume operations . Developers can also manage token budgets efficiently using media resolution controls for multimodal inputs .
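The media resolution figures quoted earlier in this article (1,120 tokens per image at `media_resolution_high`, 70 tokens per frame at `media_resolution_low`) make it possible to budget multimodal inputs against the 1 million token context window before sending anything. The sketch below is a simple planning aid under the assumption that those per-item costs are fixed; the helper names are hypothetical.

```python
# Per-item token costs and context limit as quoted in the article.
TOKENS_PER_IMAGE_HIGH = 1_120
TOKENS_PER_FRAME_LOW = 70
CONTEXT_LIMIT = 1_000_000


def media_tokens(images_high: int = 0, frames_low: int = 0) -> int:
    """Tokens consumed by high-resolution images plus low-resolution frames."""
    return images_high * TOKENS_PER_IMAGE_HIGH + frames_low * TOKENS_PER_FRAME_LOW


def fits_context(text_tokens: int, images_high: int = 0, frames_low: int = 0) -> bool:
    """True if text plus media stay within the stated input window."""
    return text_tokens + media_tokens(images_high, frames_low) <= CONTEXT_LIMIT


def max_frames_low(text_tokens: int, images_high: int = 0) -> int:
    """How many low-resolution frames still fit after text and images."""
    remaining = CONTEXT_LIMIT - text_tokens - media_tokens(images_high=images_high)
    return max(remaining // TOKENS_PER_FRAME_LOW, 0)


if __name__ == "__main__":
    print(media_tokens(images_high=200, frames_low=3_000))
    print(max_frames_low(text_tokens=0, images_high=200))
```

At these figures one high-resolution image costs as much as sixteen low-resolution frames, so dropping resolution on material where fine detail is not needed frees a large share of the window for text or code.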

Despite its advanced capabilities, it is worth noting that Gemini 3.1 Pro does not universally dominate all benchmarks, trailing some competitors like Claude Opus 4.6 in specific text-based tasks or GPT-5.3-Codex in certain coding benchmarks . Additionally, customer feedback suggests occasional frustrations with the model's "process" during development, including tendencies to get stuck in loops or use tools inefficiently compared to models like Claude Opus 19. However, these are minor trade-offs against its overall transformative potential, particularly in advanced reasoning and agentic applications.

Conclusion: The Future with Gemini 3.1 Pro

Gemini 3.1 Pro marks a pivotal advancement in Google's AI trajectory, solidifying its position as a transformative force, particularly for operational and agentic systems 6. Officially introduced in February 2026, this iteration signifies a shift towards deeper intelligence and more frequent, incremental updates, building upon the advanced reasoning engine of Gemini 3 Deep Think .

The model's key advancements coalesce around enhanced reasoning, native multimodal understanding, and superior agentic capabilities. Gemini 3.1 Pro is designed to be noticeably smarter and more capable for complex problem-solving, excelling at tasks requiring deep context, planning, and synthesizing disparate data . Its innovative three-tier thinking_level system (low, medium, high) provides developers with granular control over the model's internal reasoning depth, balancing latency, cost, and output quality, with the "high" setting emulating a "mini version of Gemini Deep Think" . This deep logical interpretation is complemented by its native multimodal understanding, processing text, audio, images, video, and entire code repositories, thereby bringing diverse data into a single coherent view .

The model's true potential is perhaps most evident in its agentic capabilities and optimized software engineering workflows. Gemini 3.1 Pro is explicitly optimized for autonomous agents, tool usage, and multi-step execution, demonstrating robust performance in competitive coding and real-world agentic tasks . Its exceptional performance on benchmarks such as ARC-AGI-2 (77.1%), Humanity's Last Exam (44.4%), and SWE-Bench Verified (80.6%) underscores its capacity for fluid intelligence, complex problem-solving, and advanced coding .

Beyond its raw intelligence, Gemini 3.1 Pro offers exceptionally strong model-making capabilities, empowering users to build and adapt models for specialized applications. This includes a comprehensive suite of tools and APIs for custom development, such as dedicated endpoints like gemini-3.1-pro-preview-customtools for autonomous coding agents, accessible through platforms like Google AI Studio, Vertex AI, and Google Antigravity . Architectural features like "Thought Signatures" further enhance reliability by maintaining context in multi-turn agentic workflows . The model supports advanced fine-tuning techniques such as Parameter Efficient Tuning (PET/LoRA) and Supervised Fine-Tuning (SFT), allowing for the creation of high-quality customized models with fewer data points and lower costs . Furthermore, "Reasoning Protection" is advised during fine-tuning to preserve its "Deep Think" capabilities 12. Personalization options, such as "Personal Intelligence" for secure integration with a user's Google apps and "Gems" for creating custom AI experts, further tailor the AI experience without compromising privacy .

In essence, Gemini 3.1 Pro is positioned to empower developers to build sophisticated AI applications, enable enterprises to tackle complex business challenges, and provide consumers with highly tailored and intelligent solutions . Its focus on agentic AI, combined with robust customization through dedicated tools, advanced fine-tuning, and personalization mechanisms, positions it as a cornerstone for the next generation of AI innovation. The future, with Gemini 3.1 Pro at its core, promises an era of more capable, adaptable, and deeply integrated artificial intelligence.