OpenAI's Ambitious $600 Billion Target by 2030: An In-Depth Analysis

Introduction

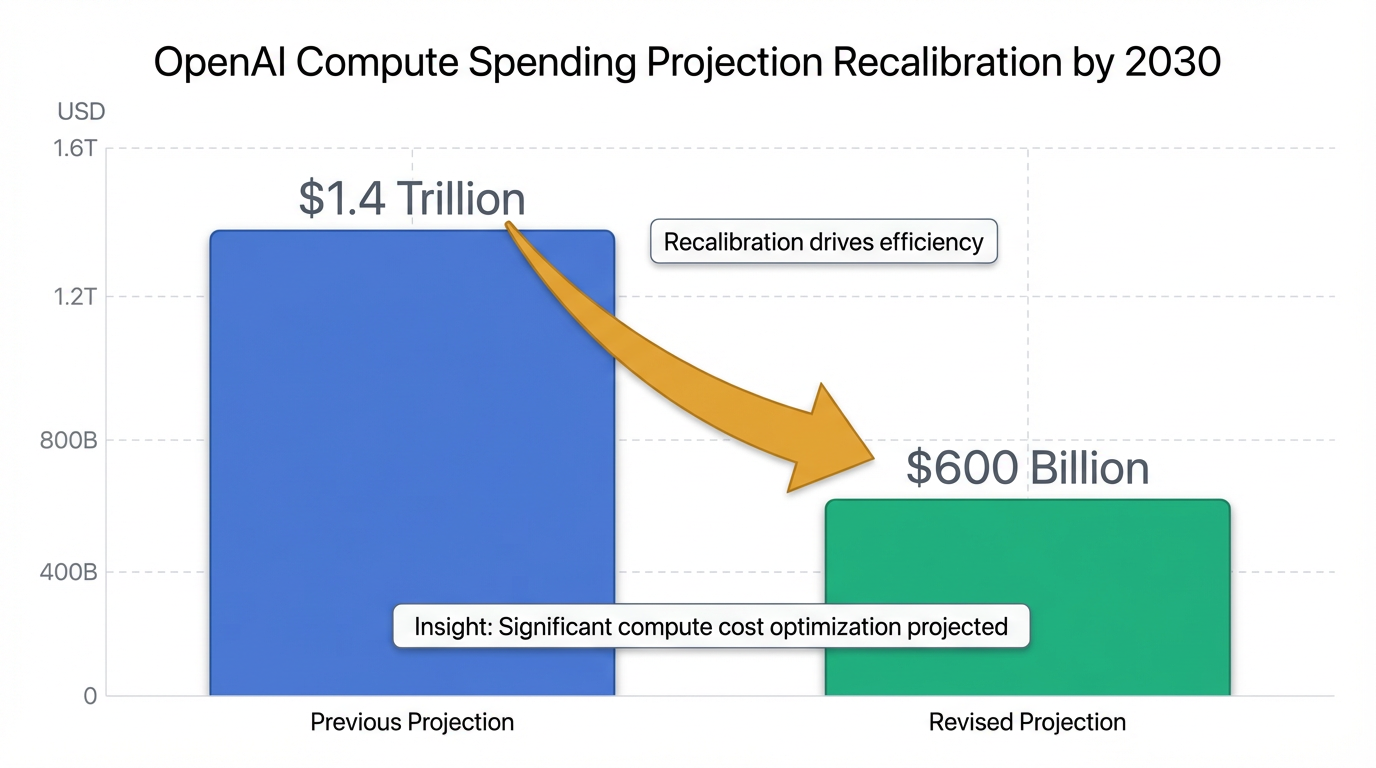

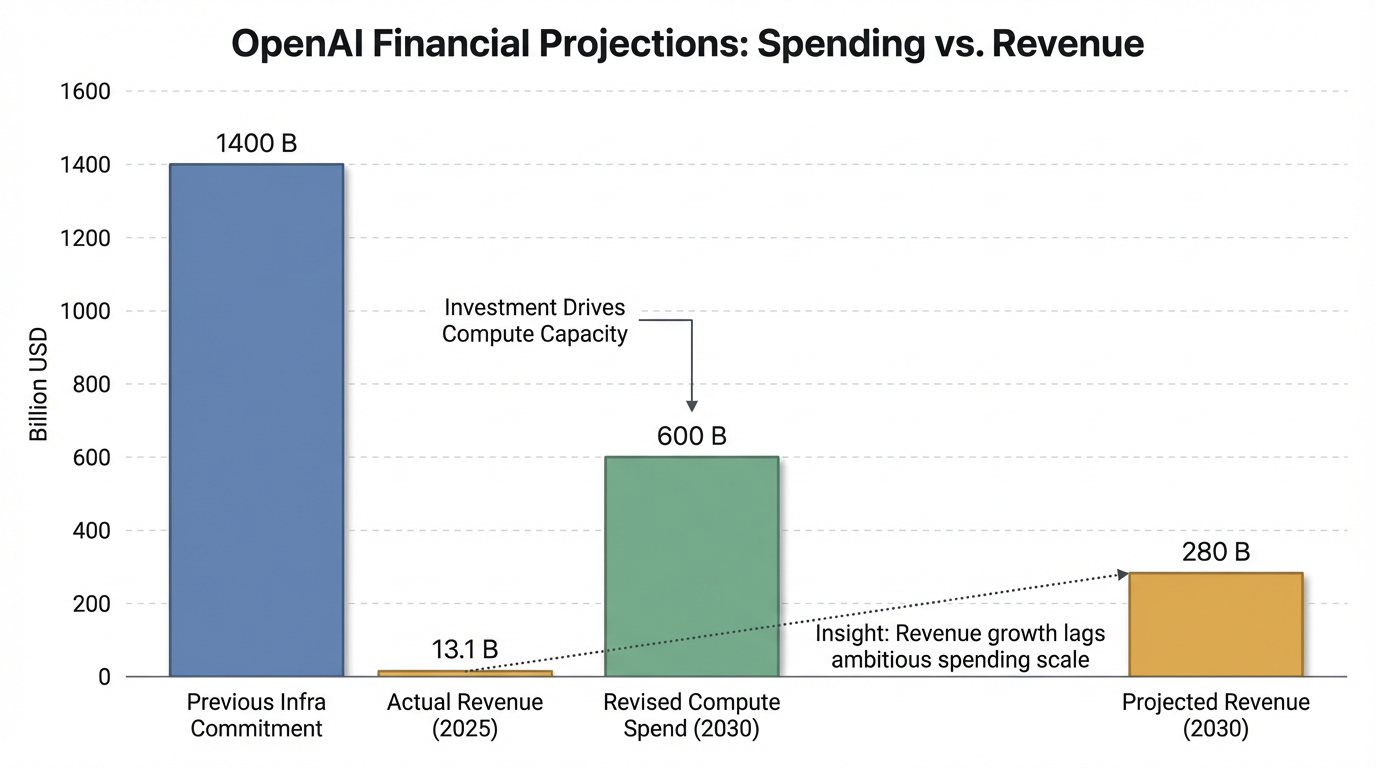

OpenAI, a pivotal player in the rapidly evolving artificial intelligence landscape, recently announced a significant recalibration of its ambitious spending projections, now targeting approximately $600 billion in total compute spend by 2030 1. This staggering figure, reported by CNBC on February 20, 2026, represents a strategic adjustment from CEO Sam Altman's previously touted $1.4 trillion in infrastructure commitments and comes with a more defined timeline 1. The company's influential position is underscored by the widespread adoption of its products, such as ChatGPT, which supports over 900 million weekly active users, and Codex, with over 1.5 million weekly active users 1. This analysis aims to explore the implications, feasibility, and potential industry reactions to this monumental financial commitment, setting the stage for a deeper dive into OpenAI's revised strategic direction.

Understanding the $600 Billion Vision

OpenAI is strategically recalibrating its financial trajectory, now targeting approximately $600 billion in "total compute spend" by 2030, a figure that specifically denotes infrastructure expenditure rather than revenue 1. This revised projection represents a substantial reduction from the $1.4 trillion in infrastructure commitments previously touted by CEO Sam Altman, and it comes with a more defined timeline, reflecting a pragmatic shift in the company's long-term financial planning 1.

The primary drivers behind this colossal spending target are the significant infrastructure requirements essential for advancing artificial intelligence. OpenAI has been actively engaged in a "flurry of multibillion-dollar infrastructure deals," partnering with leading chipmakers and cloud companies 1. This expenditure primarily encompasses critical components such as chip procurement and the extensive development of data center and cloud infrastructure 1. These investments are crucial for the continuous training and deployment of OpenAI's advanced AI models, which are at the core of its product offerings and future innovations 1.

The strategic goals tied to this $600 billion target are multifaceted. Firstly, the adjustment is a direct response to growing investor concerns that OpenAI's initial expansion ambitions might have been disproportionate to its potential revenue generation 1. By resetting to a lower, more defined spending target, the company aims to establish a more sustainable growth path that aligns directly with its expected revenue growth 1. OpenAI anticipates achieving more than $280 billion in total revenue by 2030, with roughly equal contributions from its consumer and enterprise divisions, providing a clearer financial roadmap 1.

Secondly, this investment underscores OpenAI's commitment to maintaining market leadership amidst an intensely competitive landscape. The company declared a "code red" internally in December to accelerate efforts on improving ChatGPT, acknowledging escalating competition from rivals such as Google and Anthropic 1. This strategic re-evaluation and significant investment in compute infrastructure are therefore critical for fostering accelerated development of advanced AI models, including the pursuit of Artificial General Intelligence (AGI), and for solidifying its dominance in the AI sector by enhancing its product capabilities and user engagement 1. As evidence of its current market position, ChatGPT supports over 900 million weekly active users, having rebounded to record highs despite previous growth dips, and its coding product, Codex, boasts over 1.5 million weekly active users 1.

Implications for the AI Ecosystem and Beyond

OpenAI's recalibrated compute spend target of $600 billion by 2030, a significant reduction from previous projections, marks a pivotal moment for the AI industry 1. This strategic adjustment not only reflects a re-evaluation of financial sustainability but also carries broad implications across technological infrastructure, the competitive landscape, and economic and societal considerations.

Technological & Infrastructure Implications

The pursuit of advanced AI models necessitates a massive investment in underlying technological infrastructure, driving demand for specialized components, pushing data center expansion to unprecedented levels, and challenging global supply chains.

Semiconductor Demand and Supply Constraints

The global AI chip market is experiencing explosive growth, projected to reach $323.14 billion by 2030 from $56.82 billion in 2023, exhibiting a Compound Annual Growth Rate (CAGR) of 28.9% 2. Another estimate forecasts growth from $118 billion in 2024 to $293 billion by 2030, at a CAGR of 16.37% 3. Generative AI chips alone are expected to generate nearly $500 billion in revenue by 2026, accounting for roughly half of global chip sales 4. AMD CEO Lisa Su estimates the total addressable market for AI accelerator chips in data centers could reach $1 trillion by 2030 4.
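As a rough check on the growth figures above, the compound annual growth rate can be recomputed from the quoted start and end values. The sketch below is illustrative only: the function name `cagr` and the choice of base years (2023 and 2024) are assumptions made here, not details taken from the cited reports.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.16 means 16%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the text, in billions of US dollars.
# Assumption: growth is measured from the stated base year to 2030.
first_estimate = cagr(56.82, 323.14, 2030 - 2023)
second_estimate = cagr(118.0, 293.0, 2030 - 2024)

print(f"2023-2030 estimate: {first_estimate:.1%}")
print(f"2024-2030 estimate: {second_estimate:.1%}")
```

Under these assumptions the second estimate reproduces the quoted 16.37%, while the first comes out near 28.2%, slightly below the quoted 28.9%. The gap may simply reflect a different base year in the source.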

GPUs dominated the AI chipset market with a 30.9% revenue share in 2023, while Application-Specific Integrated Circuits (ASICs) are projected to be the fastest-growing segment, particularly for cloud and edge AI applications 2. This demand is largely driven by the need for high-performance computing to train and deploy complex AI models like large language models 5.

However, this booming demand is straining the semiconductor supply chain, with bottlenecks shifting from GPU and foundry capacity to High Bandwidth Memory (HBM) supply, advanced packaging, and EUV lithography tools by early 2026 6. Prices for HBM products are expected to increase by 5-10% for 2025 due to high demand and limited supplier capacity, and capacity expansions are lagging demand, especially for sub-10 nm chips 7. The design of high-performance chip architectures also entails substantial research and development costs, with the U.S. semiconductor industry investing $59.3 billion in R&D in 2023 and $62.7 billion in 2024 5.

Data Center Expansion and Energy Demands

AI workloads are fundamentally transforming data centers, necessitating massive expansion, surging energy demands, and evolving cooling requirements. An estimated $6.7 trillion investment will be required in data centers by 2030, potentially making it the largest infrastructure investment cycle in modern history 8. Global data center capacity is expected to triple by 2030, with AI workloads driving approximately 70% of this expansion 8.

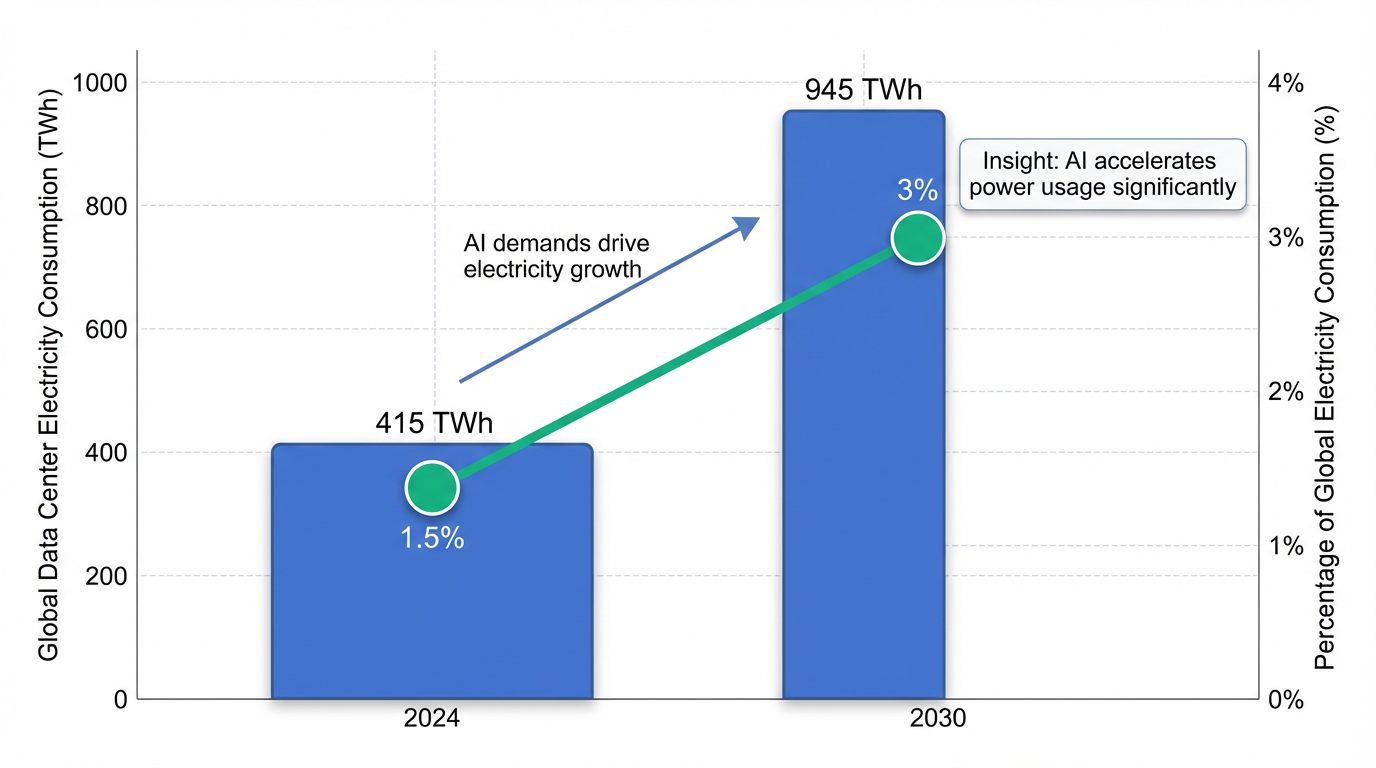

Data centers consumed about 1.5% of global electricity in 2024, with consumption growing at 12% per year since 2017 9. This is projected to more than double to 945 terawatt-hours by 2030, representing just under 3% of total global electricity consumption, primarily driven by AI. AI currently uses about 14% of global data center power and is expected to reach 27% by 2027 8. AI data centers require significantly more power per rack (50-150 kilowatts) compared to traditional data centers (10-15 kilowatts), with modern GPUs consuming 700-1,200 watts per chip 10. This high power density necessitates advanced cooling systems, including liquid cooling, and cooling can account for 35-40% of a hyperscaler's energy consumption. The immense demand is also straining electricity grids, with approximately 20% of planned data center projects at risk of delays due to grid connection issues 9.
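To give a sense of what these densities mean in practice, the following sketch works through the arithmetic using only the ranges quoted above. The helper `chips_per_rack` and its `overhead_fraction` parameter are hypothetical, and the calculation ignores real-world constraints such as networking, CPUs, and power-delivery losses unless an overhead is supplied.

```python
def chips_per_rack(rack_kw: float, chip_watts: float, overhead_fraction: float = 0.0) -> int:
    """Whole accelerators that fit within a rack's power budget.

    overhead_fraction is a hypothetical share of rack power reserved for
    non-GPU components (CPUs, networking, fans); it defaults to zero.
    """
    if not 0.0 <= overhead_fraction < 1.0:
        raise ValueError("overhead_fraction must be in [0, 1)")
    usable_watts = rack_kw * 1000 * (1 - overhead_fraction)
    return int(usable_watts // chip_watts)


# Ranges quoted in the text.
AI_RACK_KW = (50, 150)
TRADITIONAL_RACK_KW = (10, 15)
GPU_WATTS = (700, 1200)

fewest_chips = chips_per_rack(AI_RACK_KW[0], GPU_WATTS[1])  # smallest rack, hungriest chip
most_chips = chips_per_rack(AI_RACK_KW[1], GPU_WATTS[0])    # largest rack, leanest chip

# How many times more power an AI rack draws than a traditional one.
min_density_ratio = AI_RACK_KW[0] / TRADITIONAL_RACK_KW[1]
max_density_ratio = AI_RACK_KW[1] / TRADITIONAL_RACK_KW[0]

print(f"GPUs per AI rack (power-limited upper bound): {fewest_chips} to {most_chips}")
print(f"AI rack vs traditional rack power: {min_density_ratio:.1f}x to {max_density_ratio:.1f}x")
```

Under these assumptions, the quoted rack budgets could power somewhere between 41 and 214 GPUs each, and an AI rack draws roughly 3 to 15 times the power of a traditional one.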

Impact on Global Supply Chains

The AI-driven demand profoundly impacts global supply chains for hardware components, requiring increased specialized logic, high-performance memory, and advanced packaging 11. The concentration of semiconductor equipment investment in Asia, exceeding 70% through 2030, highlights both the region's importance and its exposure to geopolitical and logistics risks 11. Although these supply chains are vulnerable, AI itself is becoming critical for enhancing their resilience, providing data-driven intelligence for managing component scarcity, optimizing resource utilization, and improving demand forecasting. However, challenges such as poor data quality and inconsistent methodologies hinder AI adoption for sustainability within supply chains 12.

Competitive Landscape

OpenAI's massive compute investment significantly influences the competitive dynamics of the AI industry, impacting market leaders and emerging players alike.

Influence on Major Players and Market Dynamics

Escalating competition from rivals like Google and Anthropic led OpenAI to declare a "code red" internally in December to intensify its efforts on ChatGPT 1. Despite this competition, ChatGPT has rebounded to record highs, supporting over 900 million weekly active users, and OpenAI's coding product, Codex, has over 1.5 million weekly active users, competing directly with Anthropic's Claude Code 1.

The investment highlights an industry-wide struggle for talent and resources, likely leading to further market consolidation. Companies like Microsoft, through significant investment and strategic partnerships, are reinforcing their positions. Many perceive OpenAI's competitive "moat" as residing in its vast access to compute resources and proprietary data, which are difficult for competitors to replicate. However, major tech companies such as Google and Meta possess comparable or even superior resources in data, compute, and talent, posing substantial competitive challenges.

Skepticism and Open-Source Threat

Despite the large investments, skepticism persists within the tech community, with many viewing OpenAI's valuation as highly speculative and potentially leading to an "AI bubble burst." Concerns center on OpenAI's unsustainable burn rate and the lack of a clear revenue model sufficient to justify its valuation, especially given the high cost of inference and potential for competition to drive down pricing. The rapid advancements in open-source AI models are seen as a significant threat, potentially commoditizing foundational AI capabilities and eroding the pricing power of commercial entities like OpenAI. This raises questions about whether compute and data truly constitute a sustainable and unassailable competitive "moat" against open-source advancements and other tech giants.

Economic & Societal Considerations

The scale of investment projected by OpenAI has profound implications for the global economy and society, from financial expenditure to environmental impact and ethical dilemmas.

Economic Impact and Capital Expenditure

OpenAI's revised target of $600 billion in total compute spend by 2030, a substantial reduction from the $1.4 trillion previously touted, aims to align more directly with expected revenue growth 1. This ambition is underscored by a current funding round that could exceed $100 billion, potentially valuing OpenAI at $730 billion pre-money, with strategic investors like Nvidia considering a $30 billion investment 1.
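For readers less familiar with venture terminology, the relationship between the reported figures can be sketched as simple arithmetic. The example below assumes, purely for illustration, that the round closes at exactly $100 billion of new capital and that Nvidia's reported $30 billion would be part of that round on the same terms. Neither assumption is confirmed by the source, and the variable names are hypothetical.

```python
# All amounts in billions of US dollars; figures are those quoted in the text.
PRE_MONEY_VALUATION = 730.0
ROUND_SIZE = 100.0          # assumption: the round closes at exactly $100B
NVIDIA_INVESTMENT = 30.0    # assumption: invested within this round, same terms

# Post-money valuation is the pre-money valuation plus the new capital raised.
post_money = PRE_MONEY_VALUATION + ROUND_SIZE

# Ownership bought by new money is investment / post-money valuation.
new_investor_share = ROUND_SIZE / post_money
nvidia_share = NVIDIA_INVESTMENT / post_money

print(f"Post-money valuation: ${post_money:.0f}B")
print(f"Share held by all new investors: {new_investor_share:.1%}")
print(f"Implied Nvidia share from this round: {nvidia_share:.1%}")
```

On these illustrative terms the post-money valuation would be $830 billion, with new investors holding about 12% and a $30 billion commitment corresponding to roughly 3.6%. A larger round would shift all three figures.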

The broader economic implications include a massive capital expenditure for AI infrastructure. OpenAI CEO Sam Altman reportedly discussed creating a $7 trillion fund for generative AI investment before 2030, and an aggressive scenario estimates that cumulative capital spending for generative AI infrastructure could reach $2.35 trillion by 2030 13. The infrastructure market is expected to exceed $1 trillion in yearly spending by 2030, requiring organizations to invest $5.2 trillion in AI-ready data centers 8. This financial commitment is largely driven by the speculative promise of Artificial General Intelligence (AGI), which, if achieved, could unlock unprecedented economic value and market dominance, a prospect some describe as an "infinite money glitch."

Environmental Footprint

The burgeoning AI infrastructure expansion brings significant environmental concerns. Data centers are projected to consume nearly 3% of global electricity by 2030 14. This increased energy consumption, along with the substantial water usage required for cooling these high-density facilities, represents a growing environmental challenge 15. Moreover, the carbon footprint of AI itself, particularly the energy-intensive training of large models, is an increasing concern.

Ethical, Regulatory, and Accessibility Concerns

Societal concerns revolve around the ethical implications of AGI development, including questions about its safety and control. OpenAI's hybrid non-profit/for-profit structure and rapid pursuit of high valuations have raised questions about its original mission and ethical priorities. The potential for increasing government regulation, antitrust scrutiny, or policy changes impacting AI development and deployment is also an acknowledged future challenge. The immense costs associated with building and maintaining AI infrastructure could lead to a concentration of power and resources among a few players, impacting the broader accessibility and equitable distribution of AI's benefits 13.

Market Reception and Challenges

OpenAI's recalibrated target of approximately $600 billion in total compute spend by 2030, a significant reduction from CEO Sam Altman's previously touted $1.4 trillion, has been met with a predominant sentiment of skepticism and concern within the tech community 1. This revised figure, which aims to align more directly with expected revenue growth, comes as a direct response to growing investor anxieties that OpenAI's expansion plans might have been too ambitious relative to its potential revenue generation 1. Most commentators view the $600 billion target as highly speculative, detached from current financial realities, and reminiscent of historical market bubbles, with very little overt optimism.

Financial Feasibility Concerns

A major challenge highlighted by the market is OpenAI's financial feasibility, particularly concerning its ability to achieve profitability given its massive operational costs. Concerns include the company's substantial burn rate, especially for training and running large language models (LLMs), which raises questions about how such expenditures can lead to sufficient profitability to justify $600 billion in compute spending. Many also question OpenAI's capacity to generate adequate revenue streams, projected at more than $280 billion by 2030, especially given the high cost of inference and the potential for competition to drive down pricing 1. The difficulty in translating impressive current capabilities into widespread, monetizable enterprise or consumer applications is a significant hurdle. The investment is frequently compared to "dot-com bubble" scenarios, suggesting a speculative bubble where value is based on future, unproven breakthroughs rather than current product or profit, often driven by a "fear of missing out" (FOMO) among investors.

Technical Scalability Challenges

The technical demands for achieving OpenAI's vision, particularly the immense computational resources required, pose considerable scalability challenges. The reliance on ever-increasing computational resources is viewed as a bottleneck and a significant cost factor that may not scale sustainably or profitably. A substantial portion of the skepticism stems from doubts about the imminent arrival or even the ultimate feasibility of Artificial General Intelligence (AGI). While the $600 billion target is largely perceived to be driven by the hyper-speculative belief in achieving AGI and its perceived transformative economic impact, there is a strong sentiment that current LLMs, though impressive, are not AGI, and the path to achieving and controlling it is fraught with unknown technical hurdles. Current AI models still exhibit limitations such as hallucinations, reasoning flaws, and a lack of true general intelligence, further undermining the AGI-based valuation premise for many.

Resource Acquisition Issues

The ambitious spending plan also faces significant hurdles in resource acquisition, particularly concerning AI chips and data center infrastructure. The primary constraint in AI system shipments has shifted from GPU and foundry capacity to High Bandwidth Memory (HBM) supply, advanced packaging capacity, and EUV lithography tools 6. Prices for HBM products are expected to increase by 5-10% for 2025 due to high demand and limited supplier capacity 7. OpenAI's $600 billion "total compute spend" is primarily driven by the need for significant infrastructure, involving "multibillion-dollar infrastructure deals" for chip procurement and extensive data center and cloud infrastructure development 1. This demand is part of a larger trend where data centers are projected to require an estimated $6.7 trillion investment by 2030, which could represent the largest infrastructure investment cycle in modern history 8. Global data center capacity is expected to triple by 2030, with AI workloads accounting for approximately 70% of this expansion 8.

Furthermore, the surge in AI infrastructure places immense strain on energy grids. Data centers accounted for about 1.5% of global electricity consumption in 2024 and are projected to more than double to 945 TWh by 2030, representing nearly 3% of total global electricity consumption, largely driven by AI. AI data centers require significantly more power per rack (50-150 kilowatts) compared to traditional data centers (10-15 kilowatts), necessitating advanced cooling systems and straining existing electricity grids. Approximately 20% of planned data center projects are at risk of delays due to grid connection issues, and an additional $720 billion in grid spending through 2030 may be needed to support data center power demands.

Impact of the Competitive Landscape

The intensely competitive landscape presents another significant challenge for OpenAI. The company declared a "code red" in December to intensify efforts on improving ChatGPT amid escalating competition from rivals like Google and Anthropic 1. The rapid advancements in open-source AI models are seen as a significant threat, potentially commoditizing foundational AI capabilities and eroding pricing power for commercial players like OpenAI. Additionally, major tech companies such as Google and Meta possess comparable or superior resources in terms of data, compute, and talent, posing a substantial competitive challenge that questions the long-term "moat" of OpenAI.

Implicit Challenges and Future Hurdles

Beyond the explicit financial, technical, and resource challenges, OpenAI faces implicit challenges related to the broader operational environment. While not the primary focus, the potential for increasing government regulation, antitrust scrutiny, or policy changes impacting AI development and deployment is an acknowledged future challenge. The unique hybrid non-profit/for-profit structure of OpenAI and its rapid pursuit of high valuations also raise questions about its original stated mission and ethical priorities. Furthermore, the intense competition for top AI talent and the high costs associated with retaining it represent an ongoing challenge for growth and innovation in the highly competitive AI sector.