Seedance 2.0 Alternatives: A Comprehensive Scientific Review and Comparative Analysis

Key Features and Core Functionalities of Seedance 2.0

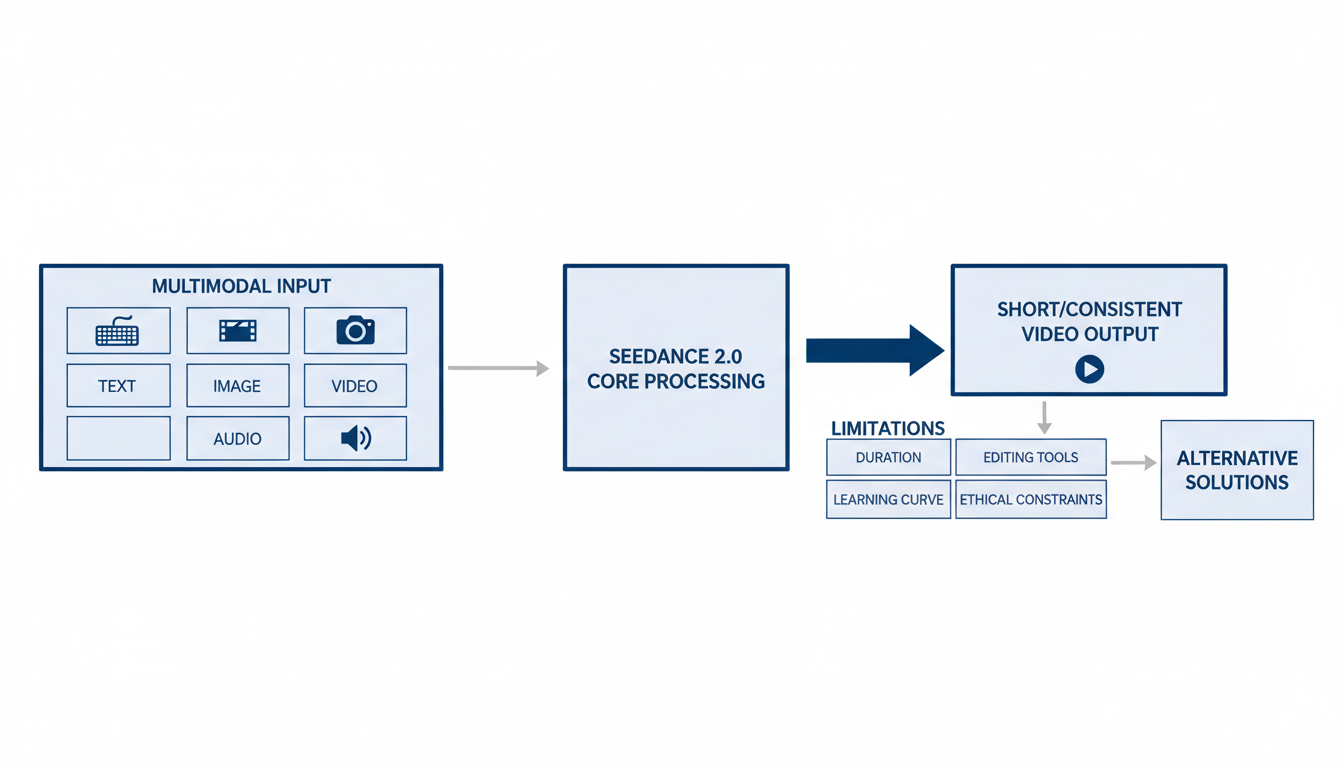

Seedance 2.0 represents a significant architectural and qualitative advancement over its predecessors, Seedance 1.0 and 1.5 Pro, moving video generation from experimental demos toward production-ready systems. It primarily addresses the "uncontrollability" pain point in AI video generation, aiming to provide precise control over video output for commercial workflows across various industries. The model emphasizes control, coherence, and creative intent, positioning itself as a tool that augments rather than replaces human creativity 1.

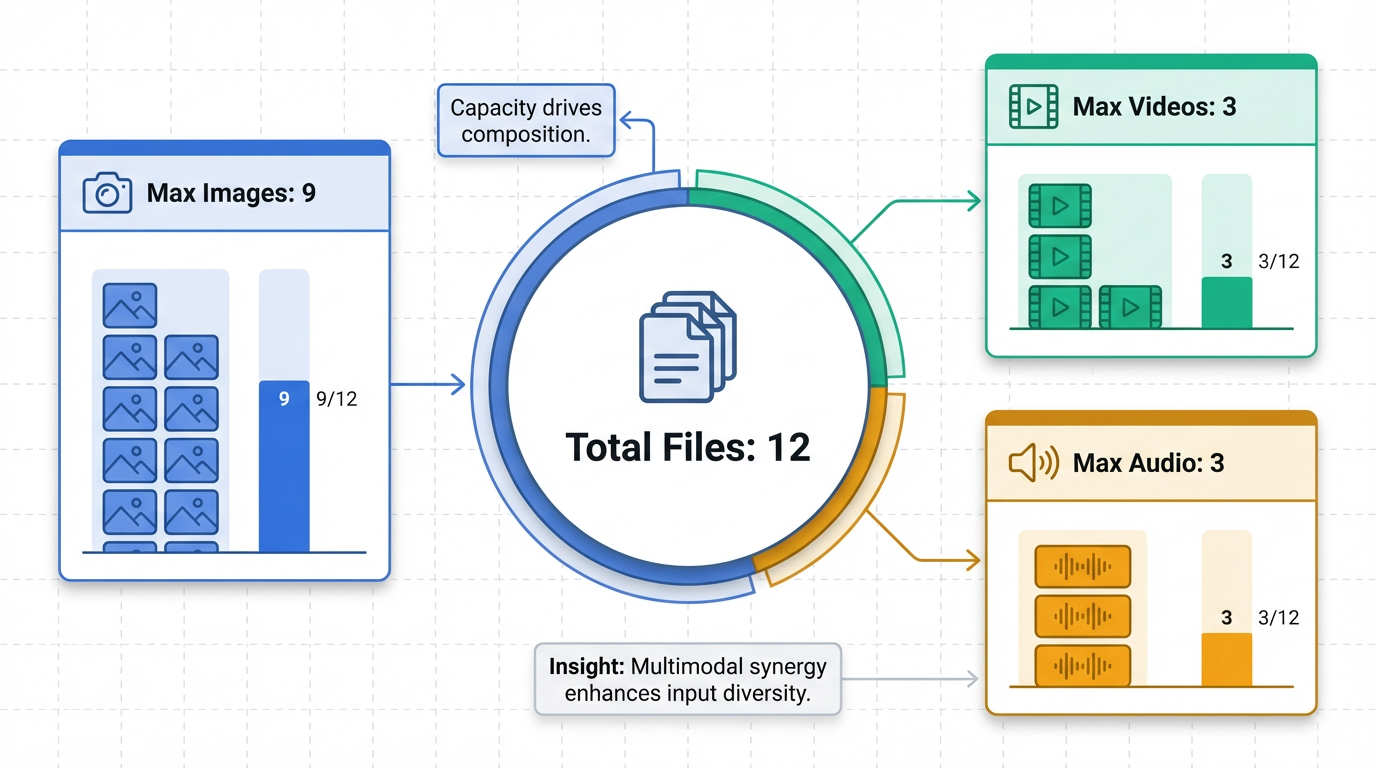

Multimodal Input Support with Explicit @ Mention Reference System

One of Seedance 2.0's standout features is its multimodal input support, which lets users combine images, videos, audio, and text prompts for richer expression and more controllable results. It accepts up to 12 files per generation, with per-type limits of 9 images, 3 videos (maximum 15 seconds total), and 3 audio files (maximum 15 seconds total). Because the per-type limits sum to 15, the overall cap of 12 is the binding constraint when every type is used to its maximum. A distinctive "@ mention reference system" lets users state explicitly how the model should use each asset, for example, "@Image1 for character appearance, @Video1 for camera motion, @Audio1 for rhythm". This shifts video creation from "prompt guessing" to "precise replication".
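The limits and the "@ mention" convention described above can be sketched as a small pre-flight check. This is only an illustration: the `Asset` class, `validate`, and `build_prompt` are hypothetical names, not part of any Seedance SDK, and the numbering scheme (`@Image1`, `@Video1`, ...) is inferred from the example in the text rather than from official documentation.

```python
from dataclasses import dataclass

# Limits as stated in the text above; every name below is hypothetical.
MAX_FILES = 12
MAX_PER_KIND = {"image": 9, "video": 3, "audio": 3}
MAX_TOTAL_SECONDS = {"video": 15.0, "audio": 15.0}


@dataclass
class Asset:
    kind: str             # "image", "video", or "audio"
    role: str             # what the asset should control in the output
    seconds: float = 0.0  # duration; left at 0 for images


def validate(assets):
    """Return a list of human-readable limit violations (empty if none)."""
    problems = []
    if len(assets) > MAX_FILES:
        problems.append(f"{len(assets)} files exceeds the limit of {MAX_FILES}")
    for kind, cap in MAX_PER_KIND.items():
        group = [a for a in assets if a.kind == kind]
        if len(group) > cap:
            problems.append(f"{len(group)} {kind} files exceeds the limit of {cap}")
        budget = MAX_TOTAL_SECONDS.get(kind)
        if budget is not None and sum(a.seconds for a in group) > budget:
            problems.append(f"total {kind} duration exceeds {budget:g} seconds")
    return problems


def build_prompt(assets):
    """Number assets per kind and join them into an @-mention prompt string."""
    counters = {}
    parts = []
    for a in assets:
        counters[a.kind] = counters.get(a.kind, 0) + 1
        parts.append(f"@{a.kind.capitalize()}{counters[a.kind]} for {a.role}")
    return ", ".join(parts)


assets = [
    Asset("image", "character appearance"),
    Asset("video", "camera motion", seconds=8.0),
    Asset("audio", "rhythm", seconds=12.0),
]
print(validate(assets))
print(build_prompt(assets))
```

Checking the bundle before submission keeps limit violations out of the generation queue, and building the prompt from explicit roles helps avoid the vague or conflicting references that the article later identifies as a source of unpredictable results.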

Extreme Consistency & Stability

Seedance 2.0 significantly improves instruction following and its modeling of physical behavior, keeping the generated clip visually uniform from start to finish. This is crucial for preserving facial features, clothing details, and overall visual style, and is particularly important for "Character IP continuity" and commercial advertisements. The model is reported to largely eliminate common issues such as "character morphing" and flickering scenes, and to render gravity, momentum, and collisions plausibly.

Advanced Video Editing & Infinite Extension Capabilities

The model offers powerful editing capabilities, including character replacement and the addition or deletion of content within existing videos. It also supports smooth video extension and concatenation based on prompts, which helps save rendering time and computing costs.

Native Audio-Visual Sync & Audio Generation with Phoneme-Level Lip-Sync

Seedance 2.0 natively supports audio input, enabling the generation of visuals based on rhythm, complex camera movements synchronized with background music, and character lip movements or actions derived from reference audio. Through its Dual-Branch Diffusion Transformer architecture, the model generates audio and video simultaneously, which keeps sound effects and ambient audio synchronized with the visuals. It also supports phoneme-level lip-sync in over 8 languages 2.

Director-Level Control with Multi-Shot Logic and Automated Camera Language

Demonstrating "director-level" thinking, Seedance 2.0 understands complex narrative logic and automatically plans camera language, incorporating techniques such as push-ins, pull-outs, pans, and tilts. It introduces multi-shot logic, effectively acting as a storyboard artist that breaks a prompt into distinct camera shots and orchestrates their generation with shared consistency data.

High-Precision Replication of Creative Templates and Styles

The tool exhibits a high capacity for precision, capable of replicating creative templates, complex effects, and various shooting styles directly from reference materials provided by the user 3.

High-Quality Output Specifications

Seedance 2.0 produces 2K-resolution video (2048×1080) with durations ranging from 4 to 15 seconds. Generation is reportedly 30% faster than in previous versions, with HD renders said to complete in 2-5 seconds, although other reports cite 60-180+ seconds per clip. It supports a range of aspect ratios, including 16:9, 4:3, 1:1, 3:4, and 9:16 3.

Underlying Mechanism: Diffusion Transformer and Quad-Modal Encoder System

At its core, Seedance 2.0 is built on an advanced Diffusion Transformer (DiT) architecture, which replaces the traditional U-Net backbone with a transformer, enabling superior scalability and attention mechanisms for handling spatial and temporal relationships 4. It further utilizes a quad-modal encoder system to process diverse inputs—text, images, video clips, and audio—into a unified language of latent vectors, facilitating comprehensive multimodal understanding and generation 5.

Introduction: Understanding Seedance 2.0 and the Search for Alternatives

Seedance 2.0, ByteDance's multimodal video generation model, officially launched around February 10, 2026, representing a significant architectural and qualitative leap over its predecessors, Seedance 1.0 and 1.5 Pro. The model aims to move video generation from experimental demos to production-ready systems by specifically tackling the challenge of "uncontrollability" in AI video.

It provides precise control over video output, emphasizing control, coherence, and creative intent, and positions itself as a tool to enhance creativity in real commercial workflows across advertising, entertainment, and digital commerce. Its core functionalities and unique selling points include multimodal input support, accepting up to 12 files simultaneously (images, videos, and audio, alongside text prompts) and using an "@ mention reference system" for precise asset utilization. Seedance 2.0 is also lauded for its extreme consistency and stability, which supports "Character IP continuity" and addresses issues like "character morphing", as well as for its video editing capabilities, native audio generation with audio-visual sync, and "director-level" control with multi-shot logic for complex narratives.

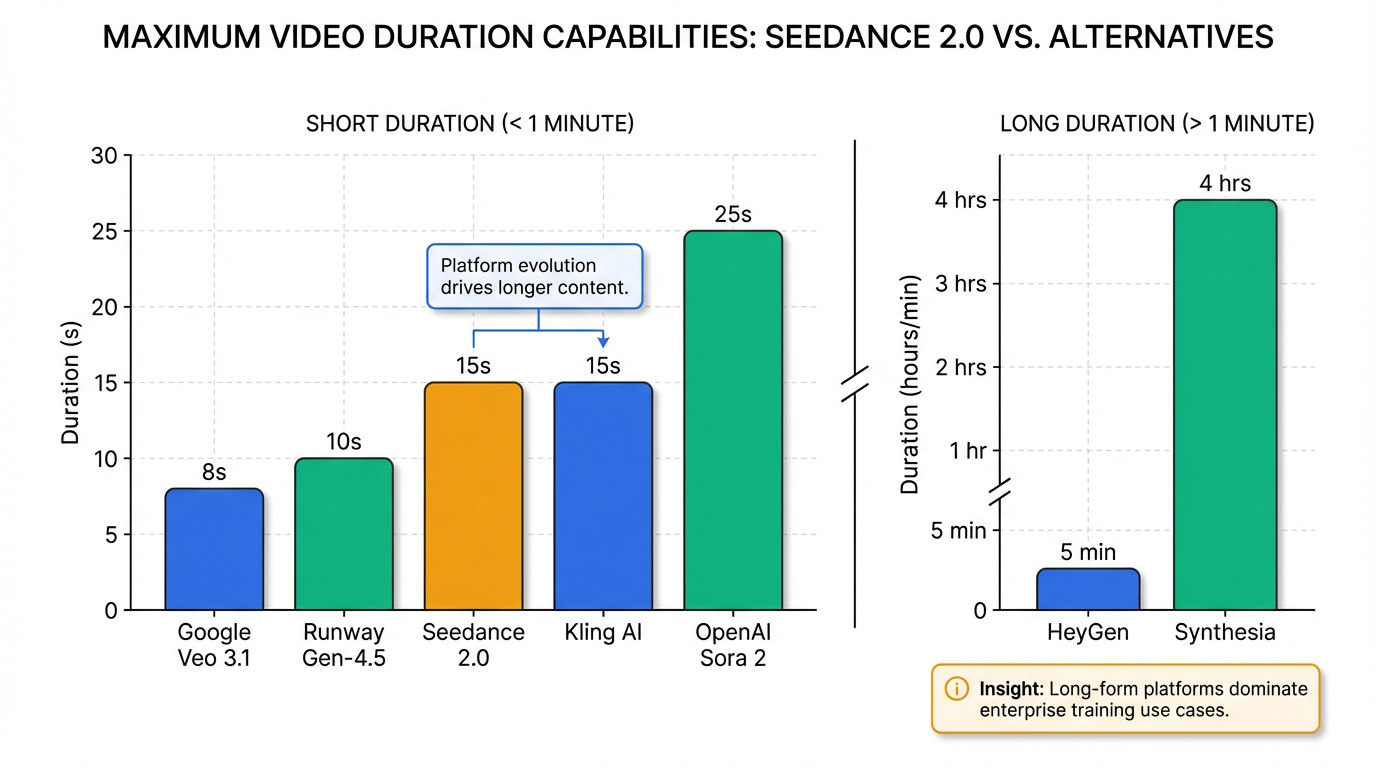

Despite these advancements, Seedance 2.0 has limitations that lead users and creators to explore alternative solutions. The model is primarily optimized for short, controlled clips, typically generating videos of 4 to 15 seconds. This focus makes longer single-pass storytelling challenging and often requires manual stitching of multiple clips, which can result in visible "seams" and affect overall continuity.

Furthermore, Seedance 2.0 does not offer a built-in suite of advanced editing tools for post-production tasks like masking, compositing, or inpainting, so generated content must be exported to external software for further refinement 6. While its multimodal input system with "@" command syntax is powerful, it can present a steep learning curve, and vague or conflicting references may lead to unpredictable blending of styles or unexpected outputs. Although its consistency is generally strong, occasional drift can still occur in exceptionally complex multi-shot sequences or in single shots longer than about 6 seconds, showing up as subtle stylistic wobbles or minor shifts in visual elements.

Hardware demands for running advanced capabilities, especially "World Model" features, are likely to push users towards cloud solutions due to massive processing loads. Crucially, ethical and privacy concerns have led to the suspension of the highly advanced "photo-to-voice" feature and the prohibition of using real human faces as reference material, placing significant constraints on creators who rely on such inputs. Other considerations include generation time, which at 60-180+ seconds per clip is not real-time; strict API input constraints (e.g., a maximum of 12 reference files, and videos up to 15 seconds) 7; and a comparatively smaller English-language community that provides fewer tutorials and templates than more established Western AI video platforms 6. The credit-based pricing model can also lead to unexpected costs, particularly for high-volume production requiring multiple iterations.

Therefore, while Seedance 2.0 represents a significant advancement in AI video generation, turning it from a "toy" into a "tool" that promises to greatly expand content output and restructure industries, its specific strengths and inherent constraints, coupled with evolving market demands and ethical policy restrictions, compel users to evaluate alternatives against their own creative and production requirements. This sets the stage for a comparative analysis of other models in the burgeoning AI video landscape.

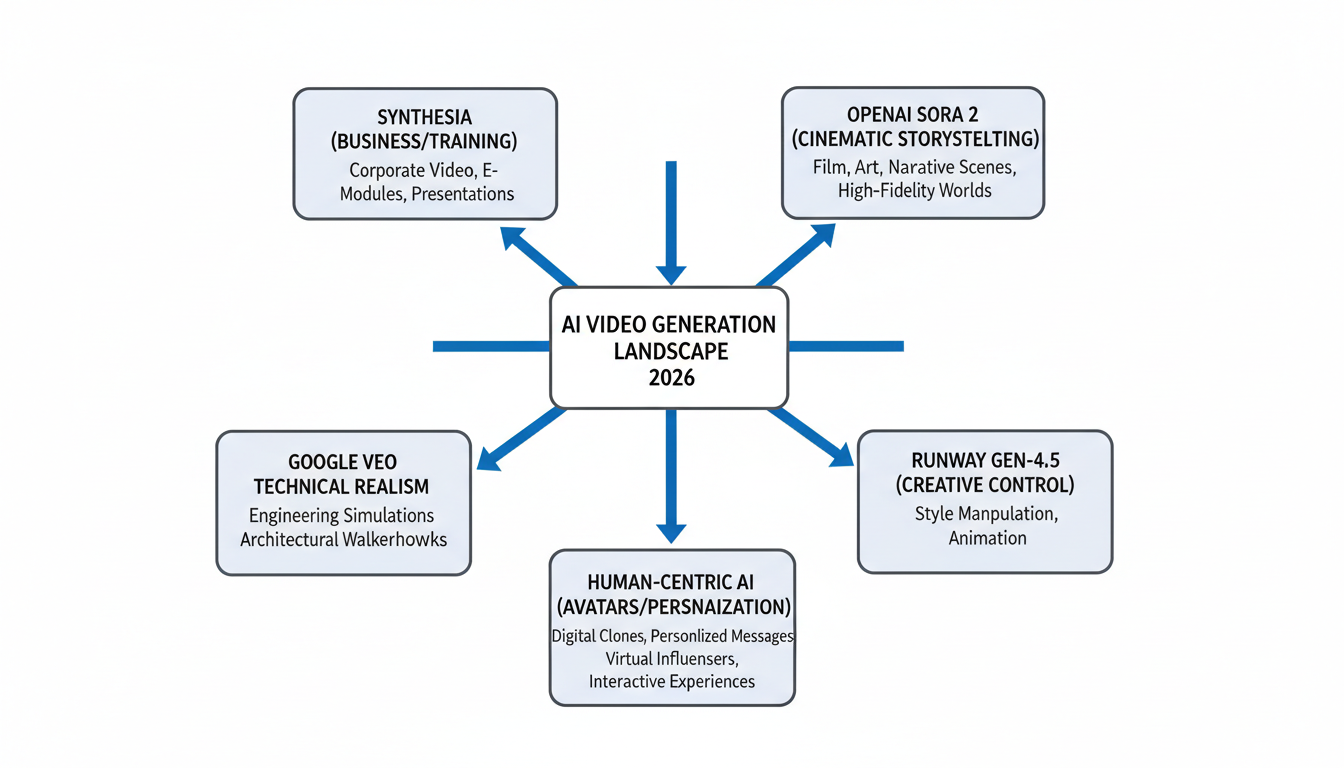

Top Seedance 2.0 Alternatives (2026 Overview)

In 2026, the AI video generation landscape offers a robust set of alternatives to Seedance 2.0, each distinguishing itself with unique strengths, core features, and target audiences. While Seedance 1.5 is recognized for its capabilities in world-building and generating longer cinematic sequences with strong image-to-video continuity, its motion physics are considered slightly less stable compared to leading competitors 8. Earlier versions, such as seedance-1-0-pro-250528, demonstrated high motion energy and imaging quality in benchmarks 9. Seedance v3 models are also accessible through platforms like WaveSpeedAI 10. The leading alternatives often excel through advanced realism, creative control, specialized avatar generation, or efficiency for specific content types.

1. Synthesia

Synthesia stands out as a leader for business and training applications, enabling the creation of polished presenter-led videos from scripts without requiring cameras or extensive editing skills. Its predictable and controlled output makes it an ideal tool for non-technical teams 8. Synthesia supports over 140 languages, offers real-time collaboration, and features realistic AI avatars, including Express-2 with actions and customization options for backgrounds and outfits 11. It also provides multilingual voice cloning with voice persistence and controls, multicam avatars, and AI dubbing in over 130 languages 11. Interactive elements like quizzes, branching scenarios, and calls-to-action (CTAs) are supported 11. Its editor includes dynamic captions, various visual effects (pop, shake, blink, zoom, pan), a swap shot feature, storyboard functionality, and an AI video assistant for layout replacement and script-to-video generation 11. Additionally, it supports PowerPoint-to-video conversion with editable text and image features 11. Videos can be up to 4 hours long, catering specifically to training needs 12. Primarily targeting corporate training, onboarding, internal communications, and enterprises requiring consistent, professional, avatar-driven videos, Synthesia is positioned for category leadership in 2026, with continuous enterprise-focused enhancements . It integrates with powerful AI Playground models like Sora 2 and Veo 3.1 11 and offers integration capabilities such as a Synthesia Excel Add-in, LMS exports, and enterprise-grade security features like SCIM and SSO . Synthesia anticipates continued rapid innovation in 2026, building on 127 new features introduced in 2025, including advanced avatars, AI dubbing, and interactivity 11.

2. OpenAI Sora 2

OpenAI Sora 2 is widely recognized for its narrative intelligence, emotional storytelling capabilities, and cinematic quality. It excels at understanding complex scenes and at physics simulation. Core features include the generation of character-driven scenes and multi-scene narratives with native dialogue and lip-sync 8. Sora 2 provides complex scene understanding, temporal consistency, advanced physics simulation, and extended video durations of up to 20 seconds (25 seconds on Pro plans) at 1080p resolution. It also supports storyboards and reusable character cameos 13. This platform is ideal for filmmakers, storytellers prioritizing depth over raw realism, and those engaged in research or experimental long-form content. It targets creative professionals who need cinematic quality and have the budget for higher tiers 13. Videos include watermarks and C2PA provenance metadata 12, with access primarily through ChatGPT Plus or Pro subscriptions 13. Native audio generation is currently experimental 13. While noted for being slower and pricier than some alternatives 8, Sora 2 handles complex object interactions with high accuracy 12. Sora 2 was released in late 2025, and Disney integration for licensed characters is anticipated in 2026 13.

3. Google Veo 3.1 / Veo 3.2

Google Veo is celebrated for its film-grade lighting, physics, and integrated audio, excelling in physical realism and technical fidelity. It achieves high overall scores in benchmarks for consistent and balanced performance 9. Veo produces videos with natural lighting, coherent motion physics, and high-quality integrated audio, offering native 4K output, built-in dialogue, sound effects, and music generation 13. It demonstrates excellent prompt understanding, particularly with technical and cinematic language, and features improved real-world motion accuracy 13. Clip lengths are typically up to 8 seconds, with extensions available 13. Veo is geared towards projects demanding high production value, such as trailers and branded films 8. It is the technical leader for professional productions that require 4K output, precise camera control, and integrated audio, making it suitable for advertising and broadcast work 13. Veo is developed by Google DeepMind 13 and leverages advanced generative AI 14; access is available through Gemini and Google Cloud Vertex AI, with limited clips for Canva Pro users 13. It integrates well into "stitch and iterate" workflows, particularly with Google Flow 12. Veo leads in resolution, audio integration, and physical accuracy 13. Veo 3.1 and 3.2 are the current versions covered in 2026 guides.

4. Runway Gen-4.5

Runway is known as the "Artist's Model" due to its focus on creative control, expressive camera choreography, and a rich creative toolset, allowing for highly "directable" editing that empowers filmmakers and VFX artists . It currently holds the top position in some AI video benchmarks for its specialized capabilities 13. Core features include an advanced motion brush for precise control over specific regions, cinematic quality outputs, and a user-friendly interface . Key functionalities encompass Director Mode, image-to-video capabilities with depth and motion, and premium style transfer . It generates high-fidelity text-to-video content and supports experimental creative outputs . Videos can be up to 10 seconds, extendable, with 1080p native resolution and 4K upscaling 13. Runway is preferred by filmmakers, video editors, and creative professionals seeking artistic control 10. It serves directors and designers experimenting with shot language, cinematic rhythm, and multi-tool workflows, as well as those working on music videos and short films . While its physical realism may lag behind some competitors 8, its creative control is considered unmatched 13. Generation time is "pretty reasonable" 15. Runway Gen-4.5 continues to be a prominent model in 2026, leading in creative flexibility .

5. Avatar and Human-Centric AI Video Generators

Kling AI (Kling 2.6 / Kling 3.0)

Kling AI offers a balanced solution for realism, stability, and cost-efficiency, producing reliable and scalable outputs. It is particularly strong for realistic human characters, offering best-in-class face consistency and natural movements. It delivers stable motion physics, good lighting realism, and high-fidelity outputs. Kling AI excels in face stability across frames, natural lip movements, believable expressions, and lip-syncing 13. Video lengths range from 3 to 15 seconds at 1080p resolution, and a free tier is available 13. This platform is suited for commercial advertising, branded visuals, and content creators focusing on human subjects, such as talking-head videos, social media content, and user-generated content (UGC) style ads. Kling AI is developed by Kuaishou 13, and Kling 2.0 models are exclusively accessible via WaveSpeedAI 10. On the downside, generation can be slow (5-30 minutes), the model is less creative with non-human subjects, and its environmental/background quality may lag behind Sora or Veo 13. Kling 3.0 is a current model discussed in 2026 guides 12.

HeyGen (Avatar IV)

HeyGen specializes in creating personalized and translated videos at scale, making it an excellent tool for sales, marketing, and businesses needing customized video messages for global audiences 15. It is considered the standard for stable AI avatars and localization in business training 12. HeyGen offers AI-powered video translation into multiple languages with impressive accuracy, custom avatar creation with voice cloning, and interactive avatars 15. It supports video lengths from 1 to 5 minutes (Avatar IV) 12, and features Brand Kits, Sub-Workspaces, generative fill, and object swap 16. HeyGen is widely used by sales teams, marketers, businesses, and agencies for influencer ads, personalized video campaigns, corporate communications, client pitches, and training, and it employs the Avatar IV model 12. While it offers scalability, some test results indicated less realistic avatars and unnatural movements 15. Its interface is clean and user-friendly 15. HeyGen is expected to drive significant growth in marketing, training, and personalized video creation in 2026.

| Name | Unique Selling Points | Core Features | Target Audience | Max Video Length |
|---|---|---|---|---|
| Synthesia | Leader for business and training applications; predictable, controlled output for non-technical teams; polished presenter-led videos from scripts without cameras/extensive editing. | 140+ languages, real-time collaboration, realistic AI avatars (Express-2, customization), multilingual voice cloning/dubbing (130+ languages), multicam avatars, interactive features (quizzes, CTAs), dynamic captions, visual effects, swap shot, storyboard, AI video assistant, PPT-to-video conversion. | Corporate training, onboarding, internal communications, enterprises requiring consistent, professional, avatar-driven videos. | Up to 4 hours |
| OpenAI Sora 2 | Unmatched narrative intelligence, emotional storytelling, cinematic quality; excels at complex scene understanding and physics simulation. | Character-driven scenes, multi-scene narratives with native dialogue/lip-sync, complex scene understanding, temporal consistency, advanced physics simulation, storyboards, reusable character cameos. | Filmmakers, storytellers prioritizing depth over raw realism, research/experimental long-form content, creative professionals with budget for higher tiers. | Up to 20 seconds (25 seconds on Pro plans) at 1080p |
| Google Veo 3.1 / 3.2 | Film-grade lighting, physics, and integrated audio; excels in physical realism and technical fidelity; high overall scores in benchmarks. | Natural lighting, coherent motion physics, high-quality integrated audio, native 4K output, built-in dialogue, sound effects, and music generation, excellent prompt understanding. | Projects demanding high production value (trailers, branded films), professional productions requiring 4K output, precise camera control, and integrated audio (advertising, broadcast work). | Typically up to 8 seconds (with extensions available) |
| Runway Gen-4.5 | “Artist's Model” for creative control, expressive camera choreography, rich creative toolset; highly “directable” editing; empowers filmmakers/VFX artists; top position in some AI video benchmarks. | Advanced motion brush for precise control, cinematic quality outputs, user-friendly interface, Director Mode, image-to-video with depth/motion, premium style transfer, high-fidelity text-to-video. | Filmmakers, video editors, creative professionals seeking artistic control; directors/designers experimenting with shot language/cinematic rhythm/multi-tool workflows, music videos, short films. | Up to 10 seconds (extendable), 1080p native, 4K upscaling |
| Kling AI | Balanced solution for realism, stability, and cost-efficiency; reliable/scalable outputs; strong for realistic human characters, best-in-class face consistency/natural movements. | Stable motion physics, good lighting realism, high fidelity outputs; excels in face stability across frames, natural lip movements, believable expressions, and lip-syncing. | Commercial advertising, branded visuals, content creators focusing on human subjects (talking-head videos, social media content, UGC-style ads). | 3 to 15 seconds at 1080p |
| HeyGen | Specializes in creating personalized and translated videos at scale; excellent for sales/marketing/businesses needing customized messages for global audiences; standard for stable AI avatars/localization in business training. | AI-powered video translation (multiple languages, accurate), custom avatar creation with voice cloning, interactive avatars, Brand Kits, Sub-Workspaces, generative fill, object swap. | Sales teams, marketers, businesses, and agencies (influencer ads, personalized video campaigns, corporate communications, client pitches, training). | 1 to 5 minutes (Avatar IV) |

Other Notable Platforms and Developments in 2026

The AI video generation landscape in 2026 also features several other significant players and overarching trends:

- WaveSpeedAI: This platform functions as an industry-leading aggregator, offering unparalleled and exclusive access to over 600 AI models, including cutting-edge technologies like Kling 2.0, Seedance v3, and WAN 2.6 10. Its unified API simplifies integration for businesses requiring diverse video generation capabilities, ensuring industry-leading speed and broadcast-quality output 10.

- Luma AI (Dream Machine / Ray3.14): Luma AI focuses on photorealistic video generation for realistic scenes and atmospheric visuals, making it ideal for product videos and marketing content. Ray3.14 is specifically optimized for physics simulation and speed, catering to social media creators 12.

- Pika Labs 2.0 / Pika 2.5: A social-first generator, Pika Labs is designed for creative and trend-driven content, offering video-to-video transformation, style transfer, and fast generation speeds, which particularly benefit social media creators and quick iterations.

- Brightcove: While not primarily a generative AI video tool like Seedance 2.0, Brightcove's 2026 roadmap emphasizes AI innovation within its video engagement platform 17. This includes next-generation AI captions, AI-powered accessibility, smarter monetization with AI-driven advertising, and automated video intelligence such as auto-generated chapters and AI-assisted live clipping 17. This reflects broader trends of AI integration across video platforms 17.

The overall AI video generator market is projected to reach USD 2.34 billion by 2030, at a Compound Annual Growth Rate (CAGR) of 32.78% from 2025 to 2030 18. This growth is fueled by the demand for personalized video content, the explosive growth of video consumption, and the rise of virtual influencers. Generative AI is revolutionizing production by enabling high-quality, scalable, and cost-effective content creation 19. The 2026 landscape is characterized by a strong push towards higher resolution (up to 4K), longer video durations, improved motion coherence, multi-modal capabilities (text, image, video inputs), specialized models, and accessible APIs 10. The emphasis is shifting from merely generating content to creating high-performance, engaging videos with clear commercial impact, measured by metrics like Hook Rate and Velocity-Adjusted Return On Ad Spend (ROAS) 20.
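As a rough sanity check on the projection above, the starting market size implied by the 2030 figure and the CAGR can be backed out with the standard compound-growth formula. The sketch assumes 2025 is the base year and that growth compounds over five annual periods; the source does not state the base-year value, so the result is an inference, not a cited figure.

```python
def implied_base(final_value, cagr, years):
    """Back out the starting value from a final value and a compound annual growth rate."""
    return final_value / (1.0 + cagr) ** years


# Figures from the text: USD 2.34 billion in 2030, 32.78% CAGR.
# Assumption: five compounding periods, 2025 -> 2030.
base_2025 = implied_base(2.34, 0.3278, 5)
print(f"Implied 2025 market size: USD {base_2025:.2f} billion")
```

Under these assumptions the implied 2025 market is a little under USD 0.6 billion, which means the projection corresponds to roughly a fourfold increase over five years.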

Comparative Analysis: Choosing Your Best Fit

Seedance 2.0, launched by ByteDance around February 10, 2026, marks a significant architectural and qualitative advancement in multimodal video generation. Positioned to move video creation from experimental demonstrations to production-ready systems, it directly addresses the critical pain point of "uncontrollability" in AI video output. Its value proposition centers on precise control, strong coherence, and an emphasis on creative intent, thereby enhancing rather than replacing human creativity 1. The model is accessible across various platforms, including Atlas Cloud, Dreamina AI, Dzine AI, Doubao, WaveSpeedAI, and ImagineArt, with an official REST API anticipated via Volcengine (Volcano Ark) around February 24, 2026.

Core Functionalities and Market Positioning

Seedance 2.0's strength lies in its sophisticated multimodal input system, which allows up to 12 files (images, videos, and audio) to be combined with text prompts in a single generation. This system uses an "@ mention reference system" to guide the model explicitly, shifting video creation from "prompt guessing" to "precise replication". This level of detailed control, coupled with "extreme consistency" in maintaining visual style, character IP, and physical plausibility, is crucial for commercial applications and stands out against previous models that struggled with character morphing or flickering scenes.

Furthermore, Seedance 2.0 offers native audio generation through a Dual-Branch Diffusion Transformer architecture, providing audio-visual synchronization and phoneme-level lip-sync in over eight languages. Its "director-level control" encompasses understanding complex narrative logic and automatically planning camera movements such as push-ins, pull-outs, pans, and tilts, effectively acting as a storyboard artist with multi-shot logic. These features collectively target professionals who require scalable, repeatable, and precisely controllable video production for advertising, entertainment, and digital commerce.

Comparative Overview of AI Video Generation Models (2026)

The AI video generation landscape in 2026 is dynamic, with several powerful alternatives to Seedance 2.0, each carving out specific niches based on their strengths, core features, and target audiences. The table below provides a concise comparison of Seedance 2.0 and its leading competitors.

| Model Name | Core Features / USPs | Target Audience / Use Cases | Typical/Max Video Duration | Max Resolution | Key Strength / Limitation |
|---|---|---|---|---|---|
| Seedance 2.0 | Multimodal input (images, video, audio, text); extreme consistency; video editing/extension; audio-visual sync & native audio generation; director-level control; high-precision replication. | Content creators, agencies, marketers, filmmakers needing scalable production; E-commerce, narrative short films, social media, education, music videos. | 4 to 15 seconds | 2K (2048×1080) | Short-form focus (4-15s), stitching needed for longer narratives; steep learning curve for multimodal input. |
| Synthesia | Presenter-led videos from scripts; 140+ languages; realistic AI avatars (Express-2); multilingual voice cloning; multicam; AI dubbing; interactive features (quizzes, CTAs). | Corporate training, onboarding, internal communications, enterprises needing consistent, professional, avatar-driven videos. | Up to 4 hours | Not specified | Leader for business/training, professional avatar-driven videos from script, predictable output. |
| OpenAI Sora 2 | Unmatched narrative intelligence, emotional storytelling; cinematic quality; complex scene understanding; temporal consistency; advanced physics simulation; character-driven scenes. | Filmmakers, storytellers prioritizing depth, researchers, experimental long-form content; creative professionals with higher budget. | Up to 20 seconds (25s on Pro) | 1080p | Unmatched narrative intelligence, emotional storytelling, advanced physics simulation; slower and pricier than alternatives. |
| Google Veo 3.1 | Film-grade lighting, physics, integrated audio; native 4K output; built-in dialogue, SFX, music generation; excellent prompt understanding; real-world motion accuracy. | Projects demanding high production value, such as trailers, branded films, advertising, broadcast work. | Up to 8 seconds (extendable) | Native 4K | Technical leader for professional productions, native 4K, integrated audio; limited clip length (8s) requiring stitching. |
| Runway Gen-4.5 | "Artist's Model"; creative control; expressive camera choreography; advanced motion brush; Director Mode; image-to-video with depth/motion; premium style transfer. | Filmmakers, video editors, creative professionals seeking artistic control; directors, designers experimenting with shot language; music videos, short films. | Up to 10 seconds (extendable) | 1080p native, 4K upscaling | Unmatched creative control, expressive camera choreography; lacks native audio, physical realism can lag behind competitors. |
| Kling AI | Balanced realism, stability, cost-efficiency; realistic human characters, best-in-class face consistency; natural movements, believable expressions, lip-syncing. | Commercial advertising, branded visuals, content creators focusing on human subjects; talking-head videos, social media, UGC-style ads. | 3 to 15 seconds | 1080p | Best-in-class face consistency and natural human movements; slower generation for complex scenes, less creative for non-human subjects. |
| HeyGen | Personalized and translated videos at scale; AI-powered video translation; custom avatar creation, voice cloning; interactive avatars; Brand Kits, generative fill. | Sales teams, marketers, businesses, agencies for influencer ads, personalized video campaigns, corporate comms, client pitches, training. | 1 to 5 minutes | Not specified | Specializes in scalable, personalized, translated videos with stable AI avatars; some avatar realism/movement concerns reported. |
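One way to use the duration column of the table above is as a first-pass filter: given a target clip length, list the models that could produce it in a single pass without stitching or extension. The sketch below copies the maximum durations from the table; the function name and the choice to ignore extension features are illustrative assumptions.

```python
# Maximum single-clip durations in seconds, taken from the comparison table.
MAX_SECONDS = {
    "Seedance 2.0": 15,
    "Synthesia": 4 * 60 * 60,  # up to 4 hours
    "OpenAI Sora 2": 25,       # Pro plan; 20 seconds otherwise
    "Google Veo 3.1": 8,       # before extensions
    "Runway Gen-4.5": 10,      # before extensions
    "Kling AI": 15,
    "HeyGen": 5 * 60,          # up to 5 minutes
}


def single_pass_candidates(target_seconds):
    """Return, alphabetically, the models whose listed maximum covers the target."""
    return sorted(name for name, cap in MAX_SECONDS.items() if cap >= target_seconds)


print(single_pass_candidates(12))
print(single_pass_candidates(60))
```

Duration is only one axis; resolution, audio support, and the type of content (avatar-led versus generative scenes) from the other columns would narrow the list further.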

Key Performance Differentiators

When comparing Seedance 2.0 against its alternatives, several key performance metrics and capabilities emerge:

- Multimodal Input Complexity: Seedance 2.0's "@ mention reference system" for up to 12 multimodal inputs provides a high degree of specificity, making it well suited to scenarios requiring precise replication of existing creative templates or complex effects. While other models accept various inputs, few offer such granular control over their application.

- Consistency and Stability: Seedance 2.0's "extreme consistency" in maintaining character IP, facial features, and visual style throughout a clip is a significant advantage, particularly for commercial advertisements and narrative shorts where continuity is paramount. Sora 2 excels in temporal consistency and complex physics, while Kling AI is noted for best-in-class face consistency in human characters.

- Audio-Visual Sync and Generation: Seedance 2.0's native simultaneous audio and video generation, including phoneme-level lip-sync, offers a robust solution for rhythmic ad editing, music videos, and character actions synchronized with audio. Google Veo 3.1 also offers integrated audio, dialogue, and music generation, positioning it for high-production value projects 13. Runway Gen-4.5, conversely, currently lacks native audio generation.

- Director-Level Control: Seedance 2.0's ability to understand complex narratives and automatically choreograph camera movements aligns it with "director-level" thinking. Runway Gen-4.5, the "Artist's Model," also provides extensive creative control with features like an advanced motion brush and Director Mode, catering to filmmakers and VFX artists.

Output Specifications: Resolution, Duration, and Speed

- Resolution: Seedance 2.0 delivers 2K resolution (2048x1080) output. This is competitive but surpassed by Google Veo 3.1's native 4K output and Runway Gen-4.5's 4K upscaling capabilities 13.

- Video Duration: Seedance 2.0 is optimized for short-form content, with videos typically ranging from 4 to 15 seconds. This short-form focus is a common limitation among AI video generators: longer narratives often require manual stitching, which can leave visible "seams". In contrast, Synthesia offers videos up to 4 hours, HeyGen up to 5 minutes, and Sora 2 up to 25 seconds on Pro plans, giving more flexibility for extended content.

- Generation Speed: Seedance 2.0 is reported to be 30% faster than previous versions, with HD rendering quoted at 2-5 seconds, but its overall generation time of approximately 60-180+ seconds per clip is far from real time. Kling AI is also reported to be slow on complex scenes (5-30 minutes), while Pika Labs prioritizes fast generation for social media.
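The duration and speed figures above combine into a rough planning estimate: how many clips a longer piece needs, how many seams that creates, and how long generation might take. The sketch below assumes the 15-second clip ceiling and the 60-180 second per-clip range reported here, sequential generation, and no retries; `stitching_plan` is a hypothetical helper name.

```python
import math


def stitching_plan(target_seconds, max_clip_seconds=15.0,
                   gen_seconds_range=(60.0, 180.0)):
    """Estimate clips, seams, and total generation time for a stitched video.

    Defaults reflect the figures reported in this review and are assumptions.
    """
    if target_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    low, high = gen_seconds_range
    clips = math.ceil(target_seconds / max_clip_seconds)
    return {
        "clips": clips,
        "seams": clips - 1,              # one join between each pair of clips
        "gen_seconds_min": clips * low,  # sequential generation, no retries
        "gen_seconds_max": clips * high,
    }
```

For a 60-second piece this gives 4 clips and 3 seams, with 4 to 12 minutes of generation before any retakes. Real projects usually need several takes per clip, so the time figure is a lower bound.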

Target Audience and Use Case Alignment

Seedance 2.0 targets a broad professional audience including content creators, agencies, marketers, and filmmakers, excelling in e-commerce, short narrative films, social media content, and music videos where consistent brand imagery or synchronized visuals are key.

- Enterprise & Training: Synthesia and HeyGen specialize in business applications. Synthesia leads in corporate training and internal communications with avatar-driven videos from scripts. HeyGen is ideal for personalized and translated videos at scale for sales and marketing, offering features like custom avatars and voice cloning.

- Cinematic & Narrative: OpenAI Sora 2 is designed for filmmakers and storytellers prioritizing narrative depth, emotional storytelling, and advanced physics simulation, suitable for experimental long-form content. Google Veo 3.1 focuses on film-grade lighting, physics, and integrated audio for high-production-value projects like trailers and branded films.

- Creative & Artistic Control: Runway Gen-4.5 caters to filmmakers and video editors seeking artistic control and experimenting with camera choreography and style transfer.

- Human-Centric Content: Kling AI stands out for its realistic human characters, best-in-class face consistency, and natural movements, making it strong for commercial advertising and social media content featuring human subjects.

Limitations and Trade-offs

Despite its strengths, Seedance 2.0 has limitations that lead users to seek alternatives. Its short-form focus (4-15 seconds) requires manual stitching for longer content, potentially introducing inconsistencies. The model can exhibit "drift on longer shots" beyond 6 seconds, struggle with "texture conflict" in fine patterns, and ignore "micro-cues" such as exact typography. Its multimodal input system, while powerful, has a "steep learning curve" and can blend styles unpredictably when references are vague or conflicting.

Seedance 2.0 also faces ethical constraints: its "photo-to-voice" feature was suspended due to privacy concerns, and uploading real human faces as reference material is prohibited. Both restrictions can limit creative freedom. Additionally, unlike some competitors, it lacks integrated advanced editing tools, so post-production tasks like masking or compositing require external software 6. The model's "Cinematic Monoculture" can also make it challenging to generate non-cinematic or imperfect footage without extensive micro-prompting 21.

Scalability and Integration Capabilities

Seedance 2.0's official REST API is set to launch through Volcengine, which should ease integration into broader workflows, and third-party API platforms already offer access 22. Official access is otherwise primarily through ByteDance's own ecosystem (Jimeng/Dreamina), which can be restrictive for external developers. Platforms like WaveSpeedAI, which aggregates over 600 AI models behind a unified API, represent a different approach to scalability, providing access to a wide range of models, including Seedance v3 and Kling 2.0 10. For complex AI agent ecosystems, specialized platforms like LangGraph or CrewAI are preferred over single-purpose video generators like Seedance 2.0 23. Synthesia, with its Excel Add-in and LMS exports, demonstrates strong enterprise integration capabilities.

Pricing Considerations

Cost-effectiveness is a significant driver for users considering alternatives. While Seedance 2.0 offers optimized price-performance ratios on platforms like Atlas Cloud, its credit-based pricing can lead to "unexpected costs," especially in iterative design processes where many takes are generated. Cost per minute varies significantly by resolution and features, ranging from $0.10/min for Basic 720p to $0.80/min for Cinema 2K 2. For high-volume video production, subscription models offered by alternatives like Dzine or Kling may prove more economical. Kling also offers a free tier 13.
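The effect of iteration on per-minute pricing can be made concrete with a small calculation. The sketch below uses the $0.10/min and $0.80/min rates quoted above and assumes that every generated take is billed in full; that billing rule, the function names, and any subscription fee passed in are illustrative assumptions rather than published terms.

```python
def estimate_cost(final_seconds, rate_per_minute, takes_per_clip=1):
    """Dollar cost when every take is billed at the per-minute rate (assumed)."""
    if final_seconds < 0 or rate_per_minute < 0 or takes_per_clip < 1:
        raise ValueError("inputs must be non-negative, with at least one take")
    billed_minutes = (final_seconds / 60.0) * takes_per_clip
    return billed_minutes * rate_per_minute


def break_even_minutes(monthly_fee, rate_per_minute):
    """Billed minutes per month at which a flat fee equals per-minute billing."""
    if rate_per_minute <= 0:
        raise ValueError("rate_per_minute must be positive")
    return monthly_fee / rate_per_minute


if __name__ == "__main__":
    # A 30-second spot, 5 takes per shot, at the two quoted rates.
    for label, rate in (("Basic 720p", 0.10), ("Cinema 2K", 0.80)):
        print(f"{label}: ${estimate_cost(30, rate, takes_per_clip=5):.2f}")
```

Under these assumptions, a 30-second spot that takes five attempts bills 2.5 minutes, or about $2.00 at the Cinema 2K rate. `break_even_minutes` shows how many billed minutes a flat subscription fee would have to cover before it becomes the cheaper option.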

Guiding Principles for Model Selection

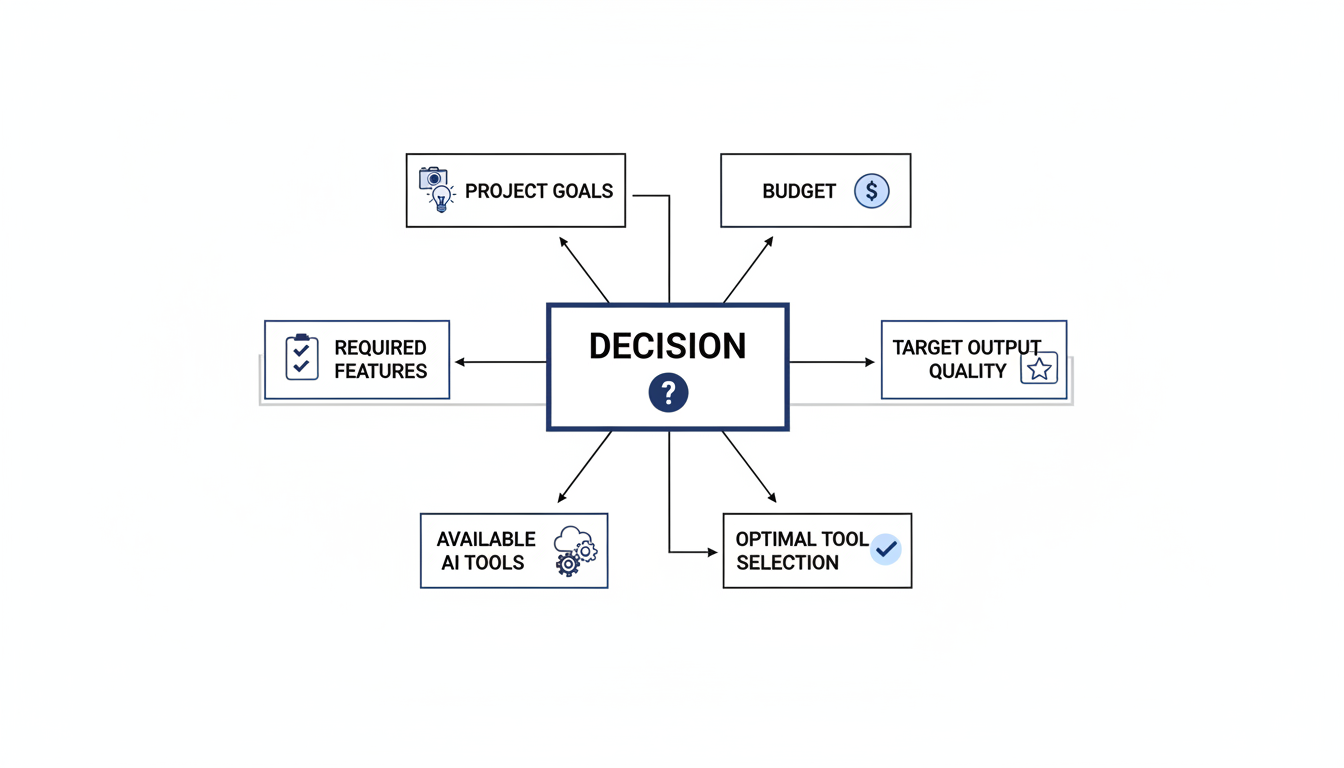

Choosing the best AI video generation tool in 2026 requires a structured approach that aligns with specific project needs and strategic objectives.

- Define Project Requirements:

  - Video Duration: Is the primary need for short, punchy clips (Seedance 2.0, Veo 3.1, Runway Gen-4.5, Kling AI) or longer narratives and presentations (Synthesia, HeyGen, Sora 2)?

  - Creative Control vs. Automation: Is highly granular artistic control and expressive camera choreography paramount (Runway Gen-4.5) or is the goal to automate content generation from scripts with minimal human intervention (Synthesia)?

  - Character Focus: Does the project require hyper-realistic human characters with consistent appearance and natural movements (Kling AI, HeyGen) or does it focus more on complex environments and abstract concepts (Sora 2, Veo 3.1)?

  - Multimodal Complexity: How many reference types (images, video, audio, text) will be used, and how precisely must the model interpret them? Seedance 2.0 excels here, but consider the learning curve.

- Evaluate Output Quality & Specialization:

  - Realism & Physics: For projects demanding strong narrative intelligence, emotional storytelling, and advanced physics simulation, Sora 2 is a strong contender. For film-grade lighting and integrated audio, Veo 3.1 is the stronger choice.

  - Resolution & Frame Rate: Native 4K output (Veo 3.1) or higher frame rates (Kling 3.0 offers 60fps) may be crucial for broadcast or high-fidelity applications.

  - Audio Integration: If native, tightly synchronized audio, dialogue, and lip-sync are critical, Seedance 2.0 and Veo 3.1 offer robust solutions.

- Consider Workflow & Integration:

  - API Access: Assess the availability and maturity of APIs for integration into existing pipelines. WaveSpeedAI provides a unified API for a multitude of models 10.

  - Ecosystem Compatibility: Does the tool integrate seamlessly with existing editing software, cloud platforms, or enterprise systems (e.g., LMS exports for Synthesia)?

  - Learning Curve: For users prioritizing ease of use over complex control, simpler interfaces or models like Kling may be more appealing.

- Analyze Cost-Effectiveness & Scalability:

  - Pricing Model: Compare credit-based systems (Seedance 2.0) with subscription models (Kling, Dzine) to determine which is more economical for anticipated production volumes and iteration needs.

  - High-Volume Production: For scaling content creation, platforms offering predictable costs and efficient workflows (e.g., HeyGen for personalized videos) might be preferred.

- Address Ethical and Policy Constraints:

  - Human Likeness: If the creative vision requires using real human faces as references, Seedance 2.0's ethical constraints and prohibition on such inputs might necessitate exploring alternatives.

By systematically evaluating these factors, users can identify the AI video generation model that best fits their specific project requirements, budget, technical capabilities, and creative aspirations, leveraging the strengths of each platform in this rapidly evolving market.
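The checklist above can be applied mechanically as a first-pass filter. The sketch below encodes only facts stated in this review (maximum clip length, native audio, and whether real-face references are allowed) and marks everything else as unknown; the `TOOLS` table and `shortlist` function are hypothetical, and a real evaluation would need current vendor documentation.

```python
# Only facts stated in this review are filled in; None means "not stated here".
# Sora 2's 25-second figure applies to Pro plans.
TOOLS = {
    "Seedance 2.0":   {"max_seconds": 15,       "native_audio": True,  "real_face_refs": False},
    "Sora 2":         {"max_seconds": 25,       "native_audio": None,  "real_face_refs": None},
    "Veo 3.1":        {"max_seconds": None,     "native_audio": True,  "real_face_refs": None},
    "Runway Gen-4.5": {"max_seconds": None,     "native_audio": False, "real_face_refs": None},
    "Synthesia":      {"max_seconds": 4 * 3600, "native_audio": None,  "real_face_refs": None},
    "HeyGen":         {"max_seconds": 5 * 60,   "native_audio": None,  "real_face_refs": None},
}


def shortlist(min_seconds=0, need_native_audio=False, need_real_face_refs=False):
    """Map each surviving tool to "match" or "verify" (some fact is unknown)."""
    result = {}
    for name, facts in TOOLS.items():
        checks = []
        if min_seconds > 0:
            limit = facts["max_seconds"]
            checks.append(None if limit is None else limit >= min_seconds)
        if need_native_audio:
            checks.append(facts["native_audio"])
        if need_real_face_refs:
            checks.append(facts["real_face_refs"])
        if any(c is False for c in checks):
            continue  # a stated fact rules this tool out
        result[name] = "verify" if any(c is None for c in checks) else "match"
    return result
```

Calling `shortlist(min_seconds=10, need_native_audio=True)` keeps Seedance 2.0 as a match, drops Runway Gen-4.5, and flags the remaining tools for verification. Keeping unknown facts separate from failed ones prevents the filter from ruling out a tool simply because this review is silent about it.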

Conclusion: Making an Informed Decision

Seedance 2.0, launched by ByteDance around February 2026, marks a significant architectural and qualitative leap in multimodal video generation, moving it from experimental demos to production-ready systems. Its core strength is precise control over video output, which makes it suitable for commercial workflows across various industries 24. Key capabilities include multimodal input support for up to 12 files (images, videos, and audio, alongside text prompts), extreme consistency and stability for character IP continuity, and video editing with infinite extension. The model also generates audio natively through its Dual-Branch Diffusion Transformer, producing synchronized sound and phoneme-level lip-sync in multiple languages. Furthermore, Seedance 2.0 offers "director-level" control, understanding complex narrative logic and orchestrating camera language, along with high-precision replication of creative templates. It delivers 2K resolution output (2048x1080) for 4 to 15-second clips, with generation reported to be 30% faster than previous versions. ByteDance's investment strategically strengthens platform efficiency and content supply, reflecting its dominance in short-form video.

Despite its advanced capabilities, Seedance 2.0 presents several limitations that give users reason to seek alternatives. Longer shots can drift, with stylistic wobbles or color shifts appearing beyond 6 seconds, and fine patterns can suffer from texture conflict. The model may miss micro-cues like exact typography and struggles with text rendering, often garbling on-screen labels. Its primary focus remains short-form content (4-15 seconds), so longer single-pass storytelling is difficult and requires stitching clips. Seedance 2.0 also lacks integrated advanced editing tools, necessitating external software for complex post-production 6. The multimodal input system, while powerful, has a steep learning curve due to its "@" command syntax and can blend references unpredictably if they conflict. Generation speed, though improved, is not real time, taking approximately 60-180+ seconds per clip for complex setups. Furthermore, access to Seedance 2.0 is often gated and limited, primarily through official ByteDance channels. Ethical constraints, such as the suspension of "photo-to-voice" and the prohibition of real human faces as reference material, impose significant usage limitations for creators. Cost can also be a factor, with enterprise access requiring significant deposits and per-minute pricing that varies with resolution and features.

The AI video generation landscape in 2026 offers a diverse array of powerful alternatives, each catering to specific needs:

- Synthesia excels in business and training, offering realistic AI avatars, multilingual voice cloning, and extended video durations for non-technical teams.

- OpenAI Sora 2 is renowned for its narrative intelligence, emotional storytelling, and cinematic quality, ideal for filmmakers and experimental long-form content with its up to 25-second clips.

- Google Veo 3.1/3.2 leads in film-grade lighting, physics, and integrated audio, providing native 4K output for professional productions and advertising.

- Runway Gen-4.5 positions itself as the "Artist's Model" with extensive creative control, expressive camera choreography, and rich toolsets for filmmakers and VFX artists.

- Kling AI offers a balanced solution for realistic human characters, excelling in face consistency and natural movements for commercial advertising and talking-head videos.

- HeyGen specializes in personalized and translated videos at scale, featuring custom avatars and voice cloning for sales, marketing, and global audiences.

- WaveSpeedAI functions as an aggregator, providing access to over 600 AI models, including exclusive ones like Kling 2.0 and Seedance v3, through a unified API 10.

- Specialized tools like Luma AI (Dream Machine / Ray3.14) focus on photorealistic scenes, while Pika Labs caters to social-first creative content.

Making an informed decision requires evaluating a range of factors. Users should carefully consider the specific features offered, such as multimodal input capabilities, integrated editing tools, audio synchronization, and director-level controls. Pricing models are crucial, weighing credit-based systems against subscription plans. The tool's target audience and output specifications (quality, realism, resolution, frame rate, and generation speed) should match the project's needs. Usability, including the learning curve and interface, ethical policies (especially regarding human likeness and data privacy), and the availability of community support should also be assessed.

Ultimately, selecting the most suitable AI video generation tool hinges on aligning its capabilities with specific project goals. Whether the need is for commercial advertisements, long-form narratives, creative experimentation, or scaled personalized content, understanding budget constraints, desired quality versus speed trade-offs, and internal workflow requirements will guide the choice towards the optimal solution in this rapidly evolving AI video landscape.