Seedance 2.0 vs. Other AI Video Models: A Comparative Analysis

Introduction: The Evolving Landscape of AI Video Generation

The realm of artificial intelligence has witnessed unprecedented advancements, with AI video generation emerging as one of its most dynamic and rapidly evolving frontiers. From simple text-to-video capabilities to sophisticated models capable of producing photorealistic and highly customizable content, the technology is fundamentally transforming digital content creation across industries. This swift progression continues to push the boundaries of what is possible, enabling creators, marketers, and researchers to visualize ideas with previously unattainable efficiency and realism.

Amidst this innovation, a formidable new player has entered the arena: Seedance 2.0. Heralded as a significant leap forward, Seedance 2.0 promises enhanced performance, novel features, and a potentially disruptive approach to AI-powered video synthesis. This report provides a comparative analysis of Seedance 2.0 against its established counterparts in the AI video generation market. Our objective is to evaluate its technical capabilities, performance metrics, and creative potential relative to other leading models, setting the stage for a detailed examination of its strengths, weaknesses, and overall market position.

Seedance 2.0: Deep Dive into its Capabilities and Innovations

Seedance 2.0, developed by ByteDance, represents a significant leap in AI video generation, moving beyond randomized content creation to offer precision control for professional creators. It launched between roughly February 8 and 12, 2026, with a broader official release anticipated around February 24, 2026, and it aims to give creators fine-grained command over cinematic language.

Comprehensive Overview of Core Features

Seedance 2.0 is equipped with an impressive suite of features designed to empower sophisticated video production:

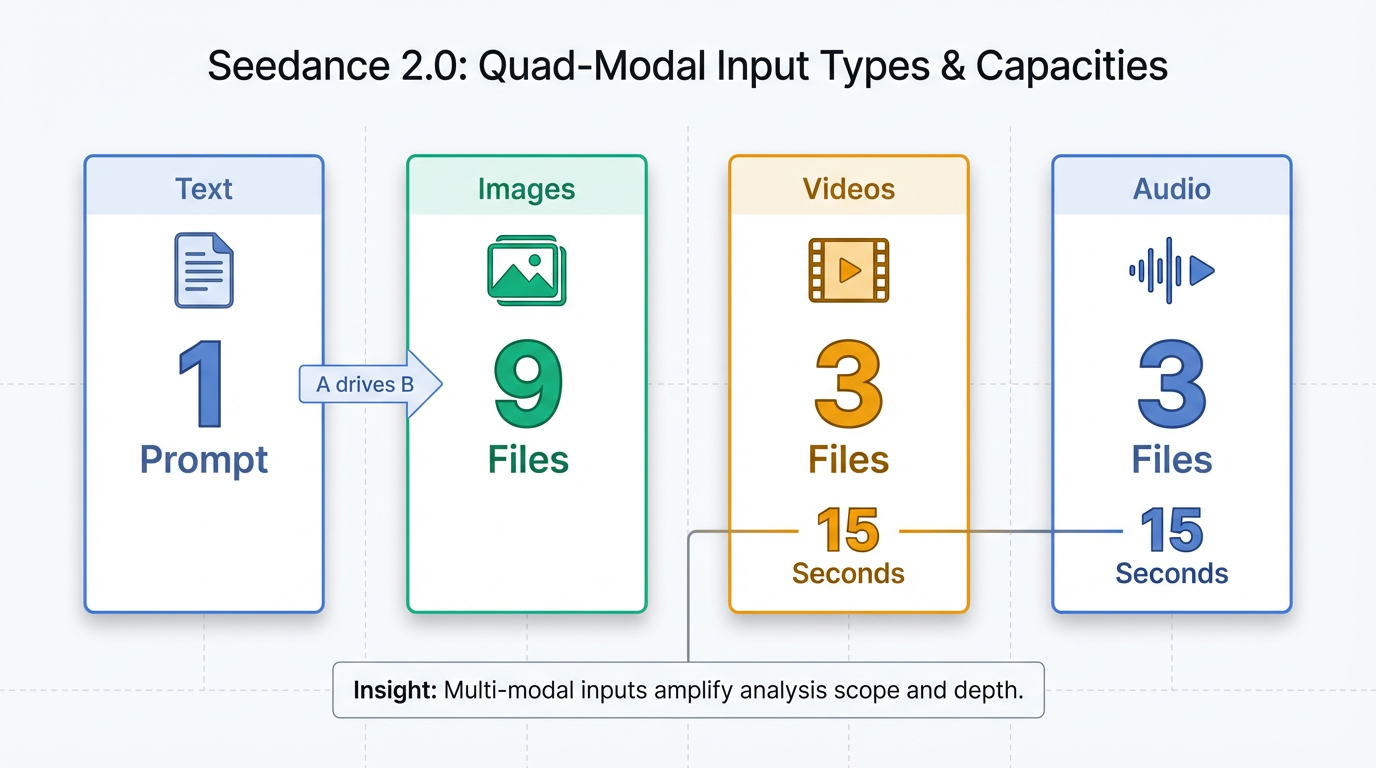

- Multimodal Input System (Quad-modal): Seedance 2.0's defining characteristic is that it accepts text, up to nine images, up to three video clips (totaling a maximum of 15 seconds), and up to three MP3 audio files (totaling a maximum of 15 seconds) simultaneously. This "Reference Capability" uses an "@ mention system" within prompts to explicitly direct how each uploaded asset influences the generation, allowing users to define visual style, motion, rhythm, and narrative.

- Director Mode and Control Panel: This feature provides parameter-level controls for physical movement, camera trajectories, and lighting dynamics, giving users precise control over performance, lighting, shadow, and camera movement.

- Native Audio-Visual Co-Generation: Audio and visual tracks are generated simultaneously and integrated, eliminating the need for separate post-production. It includes multi-language dialogue with precise lip-sync in over eight languages, context-aware ambient sounds, action-linked sound effects, and background music. The system also supports multi-speaker voice cloning using uploaded real voices.

- Cinematic Camera Mastery: Seedance 2.0 supports complex camera movements such as pans, tilts, zooms, handheld or Steadicam simulations, dolly shots, orbits, tracking, Hitchcock zooms, and whip pans. A "Keyframe Control" feature allows for defining exact start and end points for every motion. It can also extract and apply camera movements from reference videos.

- Enhanced Base Quality and Physics Accuracy: The platform delivers outstanding motion stability and physical restoration, ensuring objects interact according to real-world rules, including gravity, momentum, and inertia, with realistic materials and environmental interactions. Motion is consistently fluid and natural.

- Precise Instruction Following: Seedance 2.0 offers upgraded instruction-following and consistency, which improves usability in complex scenarios and lets users command the entire video creation process like a professional director.

- Video Editing and Extension: Users can modify existing videos without regenerating them from scratch. Capabilities include character replacement, content addition or deletion, scene extension, style transfer, narrative changes, and smooth video concatenation.

- Creative Template Replication: Seedance 2.0 can replicate entire creative concepts, including advertising formats, visual effects, film techniques, and editing styles from provided reference materials.
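The input limits listed for the multimodal system can be expressed as a small pre-flight check. The following is a minimal sketch only: the `ReferenceBundle` class, its field names, and the `validate` function are hypothetical and do not reflect any published Seedance API. Only the numeric limits come from the feature list above.

```python
from dataclasses import dataclass, field

# Limits as stated in the feature list; all names below are hypothetical.
MAX_IMAGES = 9
MAX_VIDEO_CLIPS = 3
MAX_AUDIO_FILES = 3
MAX_TOTAL_VIDEO_SECONDS = 15.0
MAX_TOTAL_AUDIO_SECONDS = 15.0


@dataclass
class ReferenceBundle:
    """Assets attached to one generation request (illustrative structure)."""
    prompt: str
    images: list[str] = field(default_factory=list)           # file names
    video_seconds: list[float] = field(default_factory=list)  # one duration per clip
    audio_seconds: list[float] = field(default_factory=list)  # one duration per MP3


def validate(bundle: ReferenceBundle) -> list[str]:
    """Return a list of human-readable limit violations (empty if none)."""
    problems = []
    if len(bundle.images) > MAX_IMAGES:
        problems.append(f"too many images: {len(bundle.images)} > {MAX_IMAGES}")
    if len(bundle.video_seconds) > MAX_VIDEO_CLIPS:
        problems.append(f"too many video clips: {len(bundle.video_seconds)} > {MAX_VIDEO_CLIPS}")
    if sum(bundle.video_seconds) > MAX_TOTAL_VIDEO_SECONDS:
        problems.append(f"video total {sum(bundle.video_seconds)}s exceeds {MAX_TOTAL_VIDEO_SECONDS}s")
    if len(bundle.audio_seconds) > MAX_AUDIO_FILES:
        problems.append(f"too many audio files: {len(bundle.audio_seconds)} > {MAX_AUDIO_FILES}")
    if sum(bundle.audio_seconds) > MAX_TOTAL_AUDIO_SECONDS:
        problems.append(f"audio total {sum(bundle.audio_seconds)}s exceeds {MAX_TOTAL_AUDIO_SECONDS}s")
    return problems


ok = ReferenceBundle("city at dusk", images=["a.png"] * 9,
                     video_seconds=[5, 5, 5], audio_seconds=[15])
bad = ReferenceBundle("city at dusk", images=["a.png"] * 10,
                      video_seconds=[10, 10])
print(validate(ok))   # no violations
print(validate(bad))  # image count and total video duration are flagged
```

Checking counts and total durations separately mirrors how the limits are phrased: three clips are allowed, but only if together they stay within 15 seconds.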

Key Technological Innovations and Architectural Improvements

The robust capabilities of Seedance 2.0 are underpinned by several technological breakthroughs:

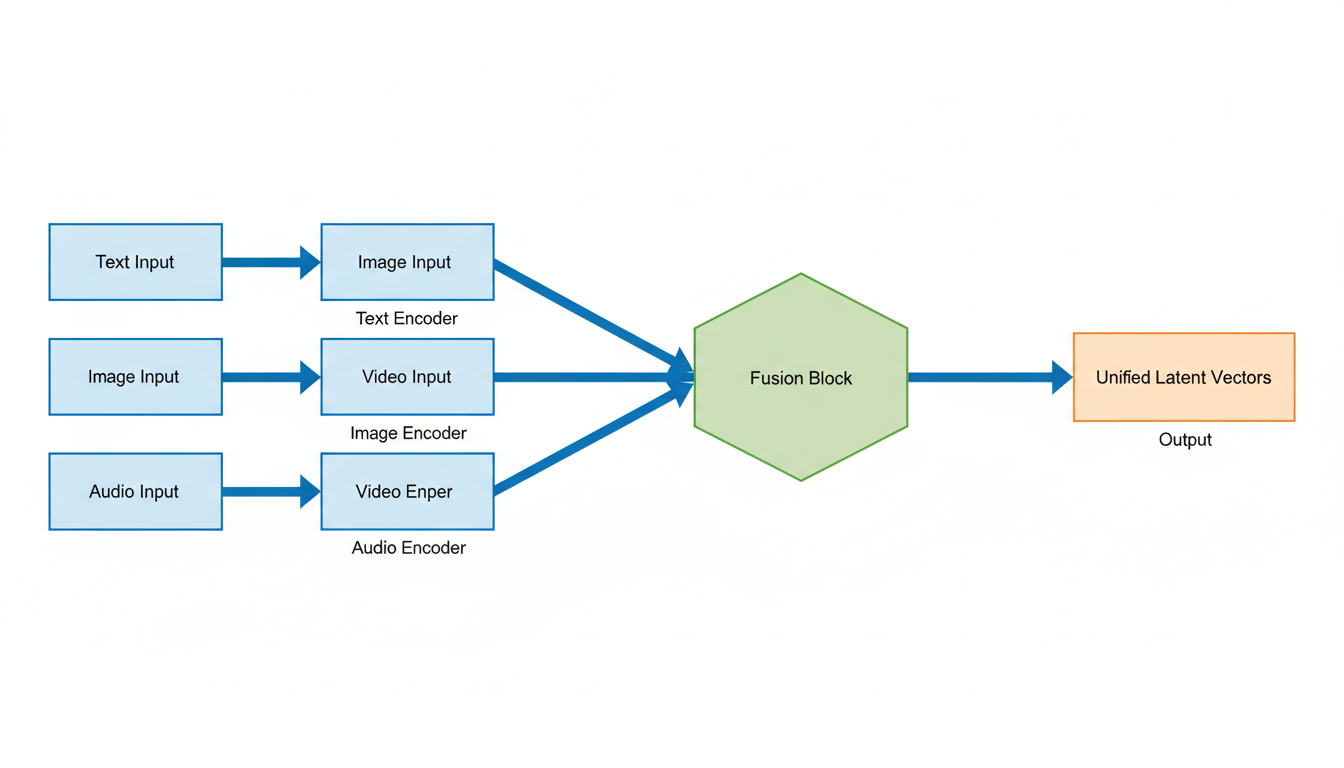

- Multi-Reference Transformer (MRT) Engine / Dual-branch Diffusion Transformer Architecture: This architecture uses a dual-branch design, with one transformer dedicated to video and another to audio, to improve scalability and attention. The two branches communicate continuously to keep sound and visuals synchronized. The architecture combines diffusion models with transformer-based temporal modules to balance creative flexibility and structural stability 1.

- Narrative Planner / Automatic Storyboarding: Functioning much like a storyboard artist, this component breaks down narrative prompts into a sequence of distinct, coherent camera shots. It orchestrates generation and uses shared consistency data to maintain consistent character appearance, clothing, and lighting across cuts.

- Proprietary "Identity-Lock" Mechanism (Global Identity Persistence): This innovation uses a new visual anchoring algorithm to retain characters (including facial features, clothing details, and body proportions) and to keep objects and elements such as logos, text, environments, and visual styles consistent across multiple shots and scene transitions, addressing the "character drift" issue.

- Quad-Modal Encoder: This encoder processes text, images, video, and audio through pre-trained encoders, converting them into a shared latent vector representation 2.

Significant Improvements Over Predecessors and Market Competitors

Seedance 2.0 marks a substantial advancement over its predecessor and stands out against market rivals:

- Over Seedance 1.5: It represents a significant leap in generation quality, offering higher usability for complex interaction and motion scenes, alongside notable improvements in physical accuracy, visual realism, and controllability 3. It is approximately 30% faster than Seedance 1.5 and delivers doubled resolution 4.

- Vs. Competitors (e.g., Sora 2, Kling 3.0, Veo 3.1): Seedance 2.0 is positioned to outperform models like Veo 3.1 and Kling 3.0 5. It offers broader multimodal control and a wider range of creative guidance than other models. It also explicitly addresses the integration of sound and continuity, which other models like Sora 2 have historically lacked in public demonstrations 6. Seedance 2.0 holds an edge in stylized content such as anime due to specialized training and character-locking features 1. Its "Director-level control" via its quad-modal input system is described as currently unmatched 7, although it still lags slightly behind Sora 2 in highly complex physical interaction scenarios 7.

Notable Performance Benchmarks

Seedance 2.0 demonstrates strong performance metrics across key areas:

- Resolution: It primarily offers native 2K (2048x1080 or 2048x1152) resolution output, suitable for social media, web content, and most digital distribution channels. One source cites approximately 77% more pixels than 1080p 8; that figure matches 2560x1440, whereas the 2048-wide formats listed here contain roughly 7% to 14% more pixels than 1920x1080. While some sources also mention "up to 4K upscaling support" 1 or pushing resolution "up to 2160p" 9, native generation is consistently cited as 2K.

- Duration: The platform generates videos ranging from 4 to 15 seconds; some early predictions suggested 20 seconds or even 30-60 seconds.

- Frame Rate: Videos are produced at a standard 24 frames per second (fps).

- Speed: Seedance 2.0 is reportedly 30% faster than previous versions and competitors like Kling AI. A typical 2K multi-shot sequence generates in approximately 60 seconds 10, with short clips reportedly generating in as little as 2-5 seconds 7. This speed is optimized for consumer-grade use and allows for rapid prototyping.

- Audio Quality: The audio quality is described as excellent overall, with notably cleaner output and distinct spatial positioning due to its dual-branch audio architecture.

- Internal Benchmarks: Seedance 2.0 leads in various dimensions across different task types, including Text-to-Video, Image-to-Video, and Multimodal tasks, according to its internal SeedVideoBench-2.0 benchmarks 11.

| Metric | Value |
|---|---|
| Resolution | Native 2K (2048x1080 or 2048x1152) |
| Duration | 4 to 15 seconds |
| Frame Rate | 24 frames per second |
| Speed | 30% faster than previous versions; 2K multi-shot in ~60 seconds; short clips in 2-5 seconds |
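The resolution and frame-rate figures above can be sanity-checked with simple arithmetic. The short sketch below compares pixel counts against 1920x1080 and derives frame counts from the stated 24 fps and 4 to 15 second range. The function names are illustrative, and 2560x1440 is included only as a comparison point; it is not a resolution the sources attribute to Seedance 2.0.

```python
def pixel_gain(width: int, height: int, base: tuple[int, int] = (1920, 1080)) -> float:
    """Percentage of additional pixels relative to the base resolution."""
    return (width * height / (base[0] * base[1]) - 1) * 100


def frame_count(seconds: int, fps: int = 24) -> int:
    """Number of frames in a clip of the given length."""
    return seconds * fps


for w, h in [(2048, 1080), (2048, 1152), (2560, 1440)]:
    print(f"{w}x{h}: {pixel_gain(w, h):+.1f}% pixels vs 1080p")
# 2048x1080: +6.7% pixels vs 1080p
# 2048x1152: +13.8% pixels vs 1080p
# 2560x1440: +77.8% pixels vs 1080p

print(frame_count(4), frame_count(15))  # 96 360
```

By this arithmetic, a gain of roughly 77% over 1080p corresponds to 2560x1440, while the 2048-wide formats add about 7% to 14%. A clip at 24 fps spans 96 to 360 frames across the stated duration range.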

Unique Selling Propositions (USPs)

Seedance 2.0 distinguishes itself in the market through several unique selling propositions:

- Unmatched Multimodal Control: The @ reference system offers unprecedented creative flexibility and precision, moving beyond simple text prompts to concrete asset-based direction.

- Industry-Leading Multi-Shot Storytelling: With its persistent character and object identity features, Seedance 2.0 enables coherent, long-form narratives and complex scene transitions without the common issue of "character drift".

- Integrated Audio-Visual Experience: The native co-generation of synchronized audio, including multi-language lip-sync, alongside video simplifies workflows and significantly enhances realism.

- Efficiency for Professional Workflows: Seedance 2.0 offers significant efficiency gains, capable of achieving in just five minutes what would traditionally take a full creative team an entire day, fostering a "zero-cost" production model for rapid iteration and creative exploration.

- Optimized for Professional and Commercial Use: The platform is specifically tailored for industrial-grade creation scenarios, with features supporting post-production compatibility, such as exporting depth maps and alpha masks.

Key Competitors in AI Video: An Overview

In the rapidly evolving AI video generation market of 2026, the Seedance family (Seedance 2.0 along with variants such as 1.5 Pro and v3) faces significant competition from other advanced models 12. Seedance 1.5 Pro, developed by ByteDance (the company behind TikTok and CapCut), uses a Dual-Branch Diffusion Transformer with 4.5 billion parameters and excels in native audio-visual generation with millisecond-precision synchronization and multi-language dialogue, making it well suited to international content, TikTok/Reels, and dialogue-heavy videos 12. Seedance 1.0 Pro Fast demonstrated high motion energy and imaging quality in some benchmarks 13. Other prominent platforms nonetheless offer distinct advantages. By 2026, the market has seen significant advancements, with models capable of producing 4K resolution videos, longer durations, improved motion coherence, and sophisticated multi-modal capabilities 14. The focus has shifted towards cinematic quality, realistic physics, synchronized audio, and greater creative control. This section introduces three of the most consistently top-ranked alternatives and direct competitors to Seedance 2.0: OpenAI's Sora 2, Google's Veo 3/3.1, and RunwayML's Gen-4.5.

OpenAI's Sora 2

Sora 2, developed by OpenAI, distinguishes itself with high realism and an advanced understanding of physics, enabling it to generate complex scenes with multiple characters and specific motion 15. It generates synchronized audio, including dialogue, ambient sounds, and effects, alongside its visuals 16. The model is highly versatile, capable of producing photorealistic, animated, or stylized outputs 16, and maintains character and "world state" consistency across multiple shots 16. Sora 2 also offers social features through an iOS/Android app for sharing and remixing, can simulate "failures" for previsualization, and includes C2PA watermarking and metadata tracking 12. It supports video lengths up to 15-25 seconds at 1080p 12 and benefits from a partnership with Disney in early 2026 for character generation capabilities 17. Its primary audience includes filmmakers and content creators seeking high-quality output 15, as well as social content creators focused on character-driven narratives, community-based creation 12, and researchers or experimental projects.

Google's Veo 3 / 3.1

Google DeepMind's Veo 3 and its enhanced version, 3.1, are recognized for their exceptional cinematic flair, consistent output, and deep understanding of cinematic language, encompassing camera angles, lighting, pacing, and mood. These models natively synchronize audio (including dialogue, sound effects, and ambient noise) with visuals 16. Veo 3.1 can produce high-fidelity clips up to 60 seconds 18 and supports 4K resolution output 12. It excels at maintaining character consistency throughout extended sequences 18 and is integrated into broader ecosystems such as Canva Pro 19 and Google's Gemini/Imagen 4 flow tool 12. Veo 3/3.1 is primarily aimed at professional film productions 16, creators of cinematic and long-form content critically requiring native audio 12, and marketers or visual storytellers 20.

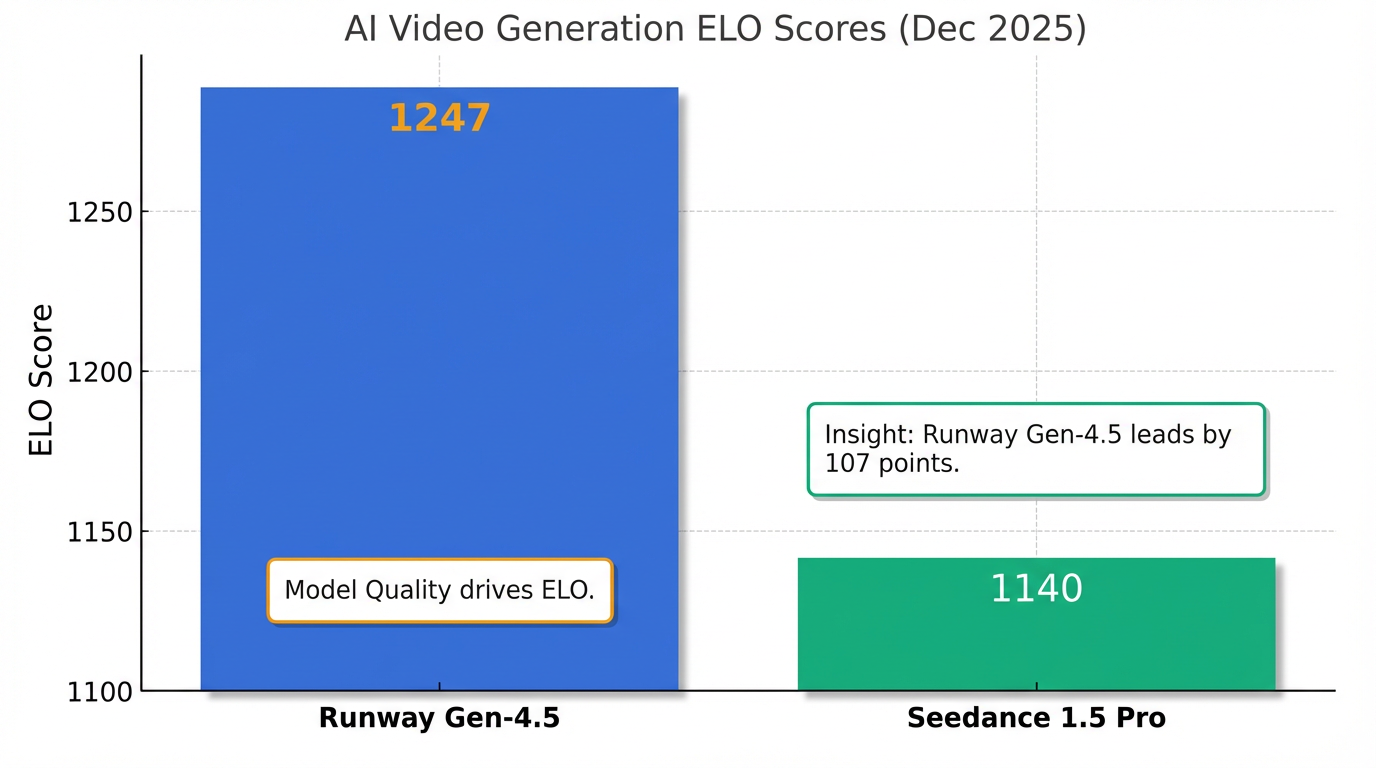

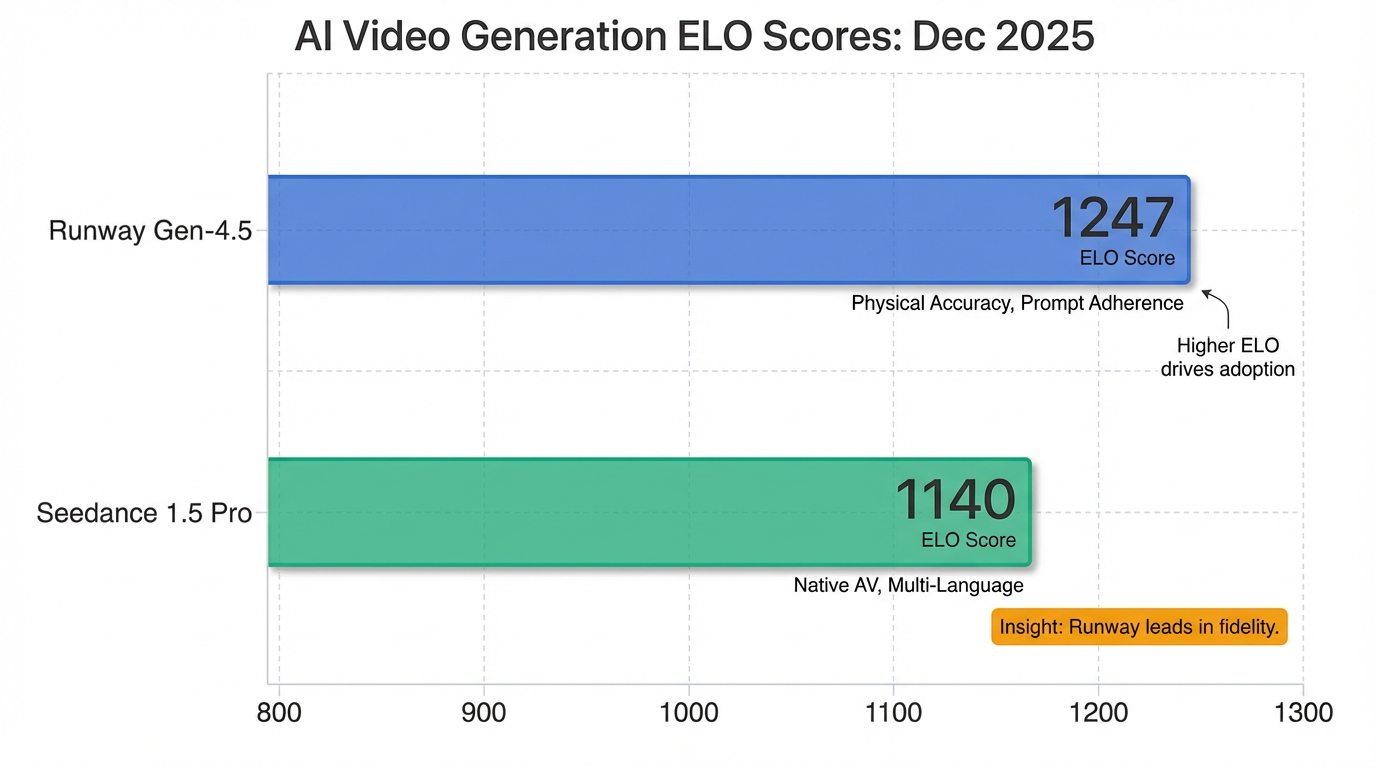

RunwayML's Gen-4.5

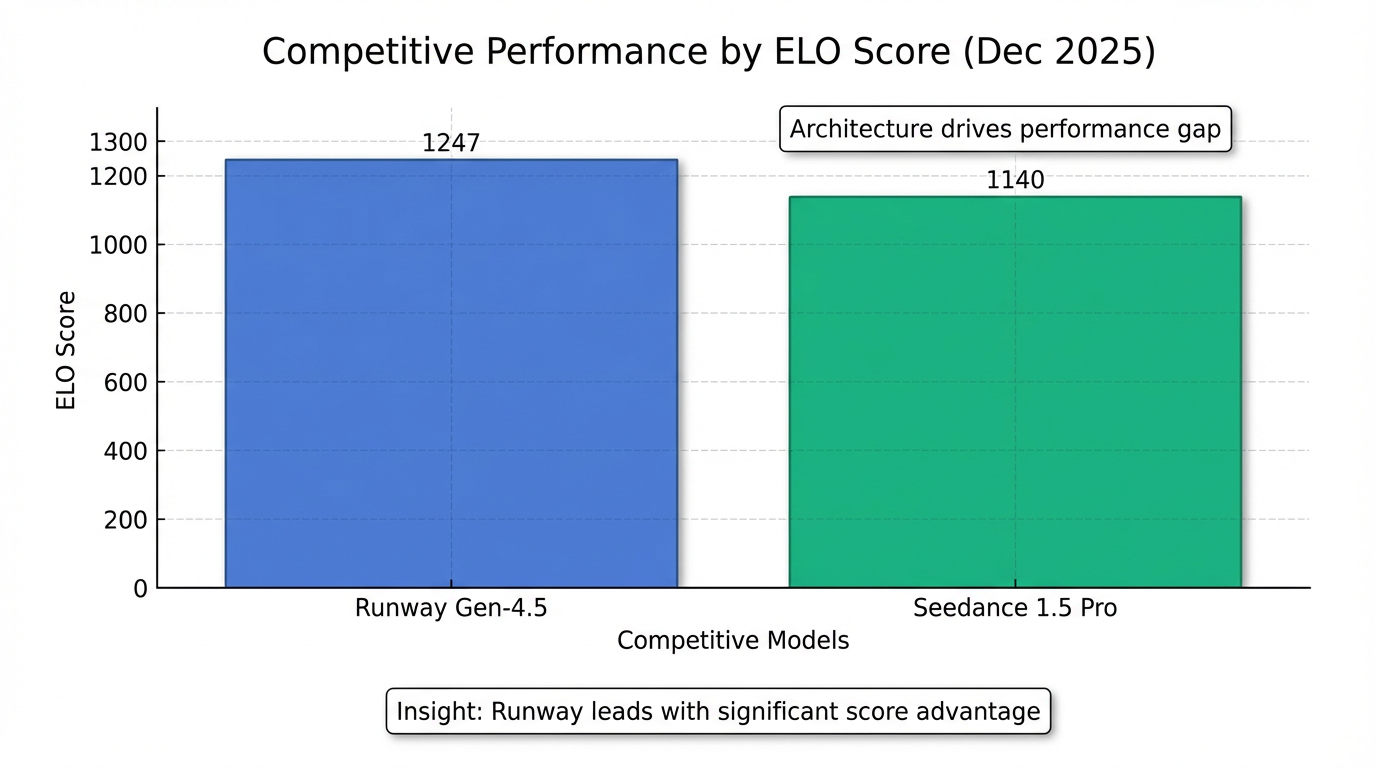

RunwayML's Gen-4.5, developed in collaboration with NVIDIA, achieved the top ranking for physical accuracy, prompt adherence, and motion quality in December 2025, with an ELO score of 1247 12. It is particularly noted for its ability to simulate realistic object weight, momentum, and force 12. Gen-4.5 provides a comprehensive suite of advanced editing tools, including a motion brush, camera controls, video inpainting, object removal, and background changes 15. It delivers high visual fidelity with HD/1080p cinematic clips, typically ranging from 4 to 20 seconds 12, and is optimized for speed on NVIDIA's latest GPUs 12. The model also supports collaborative features for team projects 15. This makes Runway Gen-4.5 highly appealing to professionals creating product demos, music videos, or other productions demanding precise control 12, as well as creative professionals, filmmakers, and video editors seeking extensive artistic and technical command 15.

Head-to-Head: Comparative Analysis of Seedance 2.0 and Leading Models

The advanced AI video generation market of 2026 is highly competitive, with Seedance 2.0 positioned alongside prominent models like OpenAI's Sora 2, Google DeepMind's Veo 3/3.1, and RunwayML's Gen-4.5. While Seedance 1.5 Pro already demonstrates strong capabilities with its Dual-Branch Diffusion Transformer and millisecond-precision audio-visual synchronization, achieving an ELO score of approximately 1140 in December 2025, Seedance 2.0 represents a significant leap 12. This section provides a detailed comparative analysis across key parameters to highlight the distinct advantages and strategic positioning of each model.
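To give the ELO figures quoted in this report some intuition, the sketch below applies the standard Elo expected-score formula to the two ratings the sources provide (1247 for Runway Gen-4.5 and approximately 1140 for Seedance 1.5 Pro, both December 2025). This assumes the leaderboard uses the conventional logistic Elo model with a 400-point scale, which the sources do not confirm. No ELO score for Seedance 2.0 itself appears in the source material, so none is used here.

```python
def elo_expected(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of pairwise wins for A over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


runway_gen_4_5 = 1247     # December 2025 figure cited in this report
seedance_1_5_pro = 1140   # approximate December 2025 figure cited in this report

p = elo_expected(runway_gen_4_5, seedance_1_5_pro)
print(f"Expected preference rate for the higher-rated model: {p:.2f}")
```

Under these assumptions, a gap of about 107 points corresponds to the higher-rated model being preferred in roughly 65% of head-to-head comparisons, which is a meaningful but not overwhelming margin.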

| Parameter | Seedance 2.0 | Sora 2 | Veo 3/3.1 | Runway Gen-4.5 |
|---|---|---|---|---|
| Video Quality & Realism | Outstanding motion stability & physical restoration (gravity, momentum, inertia). Fluid motion, realistic materials. Leads internal benchmarks. Lags slightly behind Sora 2 in complex physical interaction. | High realism & physics understanding. Generates complex scenes with multiple characters & specific motion. Versatile (photorealistic, animated, stylized). | Cinematic flair, consistent output. Exceptional understanding of cinematic language (camera angles, lighting, pacing, mood). High-fidelity clips. | #1 for physical accuracy, prompt adherence, motion quality (ELO 1247 Dec 2025). Simulates realistic object weight, momentum, force. High visual fidelity. |
| Generation Speed | 30% faster than predecessors/competitors. 2K multi-shot sequence in ~60s; short clips 2-5s. Optimized for rapid prototyping. | Not specified. | Not specified. | Optimized for speed on NVIDIA's latest GPUs. |
| Control Options | Multimodal (Quad-modal) input with @ reference system (text, images, video, audio). Director Mode, Control Panel (physical movement, camera, lighting). Cinematic Camera Mastery (keyframe, motion extraction). Precise Instruction Following. Video Editing & Extension. | Generates specific motion, complex scenes. Simulates 'failures' for previsualization. | Exceptional understanding of cinematic language (camera angles, lighting, pacing, mood). | Comprehensive advanced editing tools: motion brush, camera controls, video inpainting, object removal, background changes. Precise control. |
| Audio Integration | Native Audio-Visual Co-Generation: simultaneous, integrated. Multi-language dialogue (lip-sync 8+ languages), ambient sounds, SFX, background music. Multi-speaker voice cloning. Excellent quality, distinct spatial positioning via dual-branch architecture. | Generates synchronized audio (dialogue, ambient sounds, effects) alongside visuals. | Generates native audio synchronized with visuals (dialogue, SFX, ambient noise). Critical for native audio projects. | Not specified. |
| Consistency | Proprietary 'Identity-Lock' Mechanism (Global Identity Persistence) ensures character/object retention across shots/transitions (addresses 'character drift'). Narrative Planner maintains consistency across cuts. Leads in stylized content like anime. | Maintains character and 'world state' consistency across multiple shots. | Consistent output. Excels at maintaining character consistency across extended sequences. | High prompt adherence and physical accuracy. |
| Resolution | Primarily native 2K (2048x1080/1152), roughly 7-14% more pixels than 1080p (the ~77% figure cited by one source matches 2560x1440). Some mention up to 4K upscaling support, but native 2K is consistent. | Up to 1080p. | 4K resolution output. | HD/1080p cinematic clips. |
| Length | 4 to 15 seconds. Early predictions: 20-60 seconds. | Up to 15-25 seconds. | Up to 60 seconds (Veo 3.1). | Typically 4-20 seconds long. |
| Integration & Workflow | Industrial-grade with post-production compatibility (depth maps, alpha masks). Modifies existing videos. API (OpenAI-compatible). Available on Dreamina AI, Doubao, WaveSpeedAI, ImagineArt, Dzine. Atlas Cloud support planned. | iOS/Android app for sharing/remixing. C2PA watermarking/metadata. Disney partnership for character generation. | Integrated into Canva Pro and Google's Gemini/Imagen 4 flow tool. | Supports collaborative features for team projects. |
| Target Audience | Professional creators, commercial production, advertising, e-commerce, marketing, narrative shorts, film pre-vis, independent content, music videos, social media (TikTok/Reels). | Filmmakers, high-quality content creators. Social creators, character-driven narratives, community-based creation. Researchers, experimental projects, narrative storytelling. | Professional film productions. Creators of cinematic productions, long-form content, projects needing native audio. Marketers, visual storytellers. | Product demos, music videos, professional productions demanding precise control. Creative professionals, filmmakers, video editors seeking artistic/technical control. |

Video Quality and Realism

Seedance 2.0 delivers outstanding motion stability and physical restoration, ensuring objects interact according to real-world rules like gravity, momentum, and inertia, with fluid and natural motion and realistic materials. It leads in various dimensions across different task types in its internal SeedVideoBench-2.0 benchmarks 11. However, it still lags slightly behind Sora 2 in highly complex physical interaction scenarios 7.

OpenAI's Sora 2 is recognized for its high realism and understanding of physics, capable of generating complex scenes with multiple characters and specific motion, producing versatile outputs that can be photorealistic, animated, or highly stylized. Google's Veo 3/3.1 stands out for its cinematic flair, consistent output, and an exceptional understanding of cinematic language, including camera angles, lighting, pacing, and mood. It generates high-fidelity clips 18. RunwayML's Gen-4.5 has achieved the #1 ranking for physical accuracy, prompt adherence, and motion quality, earning an ELO score of 1247 in December 2025, and is particularly known for simulating realistic object weight, momentum, and force with high visual fidelity 12.

Generation Speed

Seedance 2.0 is reportedly 30% faster than its predecessors and competitors like Kling AI. A typical 2K multi-shot sequence can be generated in approximately 60 seconds, with short clips reportedly generating in as little as 2-5 seconds. This speed is optimized for consumer-grade use and allows for rapid prototyping. While specific numerical speed metrics for Sora 2 and Veo 3/3.1 are not provided in the available data, Runway Gen-4.5 is noted for being optimized for speed on NVIDIA's latest GPUs 12.

Control and Customization Options

Seedance 2.0's defining feature is its multimodal input system, accepting text, up to 9 images, up to 3 videos, and up to 3 audio files simultaneously. Its "@ mention system" in prompts allows users to explicitly direct how each asset influences generation, defining visual style, motion, rhythm, and narrative. The "Director Mode" and "Control Panel" provide parameter-level controls for physical movement, camera trajectories, and lighting dynamics. It supports complex camera movements with "Keyframe Control" and can extract movements from reference videos. Seedance 2.0 also allows modification of existing videos and replication of entire creative concepts. This "Director-level control" via its quad-modal input system is described as currently unmatched 7.

Sora 2 is capable of generating complex scenes with specific motion and can simulate "failures" for previsualization. Veo 3/3.1 demonstrates an exceptional understanding of cinematic language, influencing camera angles, lighting, pacing, and mood. Runway Gen-4.5 offers a comprehensive suite of advanced editing tools, including motion brush, camera controls, video inpainting, object removal, and background changes, catering to professionals demanding precise control.
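The "@ mention system" described in this section ties parts of a prompt to specific uploaded assets. The sources do not document the exact mention syntax, so the sketch below assumes a hypothetical form such as `@image1`, `@video2`, or `@audio1`. It shows how a client could extract such mentions and flag any that point to an asset that was never uploaded. All names and the syntax itself are illustrative assumptions.

```python
import re

# Hypothetical mention syntax: "@" + asset kind + 1-based index, e.g. "@image3".
MENTION = re.compile(r"@(image|video|audio)(\d+)")


def find_mentions(prompt: str) -> list[tuple[str, int]]:
    """Return (kind, index) pairs in the order they appear in the prompt."""
    return [(kind, int(index)) for kind, index in MENTION.findall(prompt)]


def unresolved(prompt: str, uploaded: dict[str, int]) -> list[tuple[str, int]]:
    """Mentions whose index falls outside the number of uploaded assets of that kind."""
    return [
        (kind, index)
        for kind, index in find_mentions(prompt)
        if not 1 <= index <= uploaded.get(kind, 0)
    ]


prompt = ("Use @image1 for the visual style, follow the camera move in @video1, "
          "and cut to the rhythm of @audio2.")
print(find_mentions(prompt))
print(unresolved(prompt, {"image": 1, "video": 1, "audio": 1}))
```

In this example the prompt references a second audio file while only one was uploaded, so `unresolved` reports `("audio", 2)`. Catching such mismatches before submission avoids spending a generation on an ambiguous prompt.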

Audio Integration

Seedance 2.0 features native audio-visual co-generation, where audio and visual tracks are created simultaneously and integrated, eliminating the need for separate post-production. It includes multi-language dialogue with precise lip-sync in 8+ languages, context-aware ambient sounds, action-linked sound effects, and background music, and it supports multi-speaker voice cloning. Its dual-branch audio architecture contributes to excellent overall audio quality with distinct spatial positioning. Seedance 2.0 explicitly addresses the integration of sound, a feature that, according to one source, models like Sora 2 historically lacked in public demonstrations 6.

Sora 2 generates synchronized audio, including dialogue, ambient sounds, and effects, alongside its visuals. Similarly, Veo 3/3.1 generates native audio synchronized with visuals, encompassing dialogue, sound effects, and ambient noise, making it well suited to projects requiring integrated sound. Information on Runway Gen-4.5's native audio integration was not detailed in the provided materials.

Consistency

Seedance 2.0 addresses the "character drift" issue with its proprietary "Identity-Lock" Mechanism (Global Identity Persistence), which uses a new visual anchoring algorithm to retain characters (facial features, clothing, body proportions) and keep objects (logos, text, environments) consistent across multiple shots and scene transitions. Its "Narrative Planner" also acts as an automatic storyboarding tool, orchestrating generation and using shared consistency data to maintain character appearance, clothing, and lighting across cuts. Seedance 2.0 holds an edge in stylized content like anime due to its specialized training and character-locking features 1.

OpenAI's Sora 2 is noted for maintaining character and "world state" consistency across multiple shots 16. Google's Veo 3/3.1 produces consistent output and excels at maintaining character consistency across extended sequences. Runway Gen-4.5 is recognized for its high prompt adherence and physical accuracy, contributing to visual consistency 12.

Resolution and Length

Seedance 2.0 primarily offers native 2K (2048x1080 or 2048x1152) resolution output. These formats contain roughly 7% to 14% more pixels than 1920x1080; the approximately 77% gain cited by one source matches 2560x1440 instead. While some sources mention "up to 4K upscaling support" or pushing resolution "up to 2160p", native generation is consistently cited as 2K. It generates videos ranging from 4 to 15 seconds, with early predictions suggesting 20 seconds or even 30-60 seconds. The frame rate is typically 24 frames per second (fps).

In comparison, Sora 2 supports video lengths up to 15-25 seconds at 1080p. Veo 3/3.1 can produce high-fidelity clips up to 60 seconds and supports 4K resolution output. Runway Gen-4.5 typically generates HD/1080p cinematic clips that are 4-20 seconds long 12.

Integration and Workflow Compatibility

Seedance 2.0 is tailored for industrial-grade creation scenarios, with features supporting post-production compatibility such as exporting depth maps and alpha masks. It allows for modification of existing videos without regenerating from scratch. Its API is designed to be OpenAI-compatible, with broader official release and API access expected around February 24th, 2026. Seedance 2.0 is available on platforms including Dreamina AI, Doubao, WaveSpeedAI, ImagineArt, and Dzine, with Atlas Cloud also announcing plans for support.

OpenAI's Sora 2 features an iOS/Android app for sharing and remixing, along with C2PA watermarking and metadata tracking 12. It also benefits from a partnership with Disney in early 2026 for character generation 17. Google's Veo 3/3.1 is integrated into broader ecosystems like Canva Pro and Google's Gemini/Imagen 4 flow tool. Runway Gen-4.5 supports collaborative features for team projects 15.

Target Audience and Specific Use Cases

Seedance 2.0 is optimized for professional and commercial use, serving applications such as commercial production, advertising campaigns, e-commerce product videos, marketing materials, and narrative short films. It is also suited to film pre-visualization, independent content creation, music video production, and social media content. Its multi-language lip-sync capability supports content localization efforts, which can lower production costs across various industries.

Sora 2 targets filmmakers and content creators needing high-quality output, as well as social content creators, those focused on character-driven narratives, and community-based creation. It also serves researchers, experimental projects, and narrative storytelling. Veo 3/3.1 is aimed at professional film productions, creators of cinematic productions, long-form content, and marketers and visual storytellers, especially for projects critically requiring native audio. Runway Gen-4.5 is suited for product demos, music videos, and professional productions demanding precise control, appealing to creative professionals, filmmakers, and video editors seeking artistic and technical control.

Pricing Structures

Information regarding the specific pricing structures for Seedance 2.0 and its competitors (Sora 2, Veo 3/3.1, and Runway Gen-4.5) is not detailed in the provided reference materials. Separately from pricing, the early rollout of Seedance 2.0 in China raised privacy and ethical concerns on the Jimeng platform regarding the generation of personal voice characteristics 21.

Overall Competitive Analysis

Seedance 2.0 distinguishes itself with unmatched multimodal control through its @ reference system, offering unprecedented creative flexibility and precision. Its industry-leading multi-shot storytelling, enabled by persistent character and object identity, allows for coherent, long-form narratives without "character drift". The native co-generation of synchronized audio, including multi-language lip-sync, streamlines workflows and enhances realism. Seedance 2.0 offers significant efficiency gains for professional workflows, capable of achieving in 5 minutes what might traditionally take a full creative team a day, fostering a "zero-cost" production model for rapid iteration.

While Seedance 2.0 excels in control and multimodal integration, Sora 2 maintains a strong position in raw realism and complex physics understanding. Veo 3/3.1 leads in cinematic quality, 4K output, and extended video durations. Runway Gen-4.5, with its top ranking in physical accuracy and comprehensive editing tools, caters to professionals requiring granular control over visual effects. The market is rapidly evolving, with a general shift towards cinematic quality, realistic physics, synchronized audio, and greater creative control across all leading models. Each model offers distinct advantages, contributing to a diverse and dynamic AI video production landscape.

Conclusion: Strategic Choices and Future Trajectories

The rapid evolution of AI video generation in 2026 presents professional creators with an unprecedented array of tools, each with distinct strengths tailored to specific production needs. ByteDance's Seedance 2.0, OpenAI's Sora 2, Google DeepMind's Veo 3/3.1, and RunwayML's Gen-4.5 lead this competitive landscape, pushing the boundaries of realism, control, and efficiency.

Summary of Comparative Strengths and Differentiators

Seedance 2.0 distinguishes itself through its unmatched multimodal control, facilitated by a quad-modal input system and an "@ mention system" that allows for explicit direction of visual style, motion, rhythm, and narrative from diverse assets (text, images, video, audio). Its proprietary "Identity-Lock" mechanism is crucial for industry-leading multi-shot storytelling by ensuring consistent character and object retention across scenes, addressing the "character drift" issue. Furthermore, its native audio-visual co-generation with multi-language lip-sync simplifies workflows and enhances realism, making it highly efficient for professional and commercial applications. Seedance 2.0 primarily offers native 2K resolution, with generation reportedly 30% faster than its predecessors and competitors like Kling AI. It also excels in stylized content like anime due to specialized training and character-locking features 1.

In contrast, Sora 2 stands out for its exceptional realism and advanced physics understanding, capable of generating complex scenes with multiple characters and intricate motion. It produces synchronized audio and maintains character and "world state" consistency, making it ideal for narrative storytelling and high-quality film productions. Veo 3/3.1 shines with its cinematic flair, consistent output, and deep comprehension of cinematic language, including camera angles and mood. With support for up to 60-second clips and 4K resolution, alongside native synchronized audio, it targets professional film productions and long-form content creation. Lastly, Runway Gen-4.5 leads in physical accuracy, prompt adherence, and motion quality, with an ELO score of 1247. Its comprehensive suite of advanced editing tools, such as motion brush and video inpainting, positions it strongly for product demos, music videos, and professional productions demanding precise technical and artistic control.

Recommendations for Optimal Model Selection

Choosing the right AI video generation model depends critically on project requirements and desired outcomes:

- For Commercial Production, Precise Control, and Consistent Branding/Characters: Seedance 2.0 is the superior choice, especially for advertising campaigns, e-commerce videos, and social media content where consistent identity and specific stylistic replication are paramount. Its robust multimodal input offers director-level command over every aspect of the cinematic language 7.

- For High-Fidelity Realism, Complex Scenes, and Narrative Storytelling: Sora 2 is best suited for filmmakers and content creators needing photorealistic outputs, intricate character interactions, and seamless transitions in character-driven narratives.

- For Cinematic Quality, Long-Form Content, and High Resolution: Veo 3/3.1 is ideal for professional film productions, documentaries, and marketers who prioritize a cinematic aesthetic, extended duration, and 4K output.

- For Granular Control over Physics, Motion, and Post-Production Editing: Runway Gen-4.5 offers unparalleled control for motion graphics artists, music video producers, and professionals requiring precise manipulation of visual elements and realistic physics.
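
For teams that want to encode these recommendations in a planning tool, the mapping above can be sketched as a simple lookup. This is a minimal illustration only: the priority labels and the function name `recommend_model` are hypothetical and are not part of any vendor's API.

```python
# Hypothetical lookup that mirrors the four recommendations above.
# The keys are illustrative labels chosen for this sketch.
RECOMMENDATIONS = {
    "precise_control": "Seedance 2.0",        # commercial work, consistent characters
    "realism": "Sora 2",                      # photorealism, narrative storytelling
    "cinematic_long_form": "Veo 3/3.1",       # cinematic look, long clips, 4K
    "physics_and_editing": "Runway Gen-4.5",  # physics, motion, post-production tools
}


def recommend_model(priority: str) -> str:
    """Return the model this report recommends for a given project priority."""
    try:
        return RECOMMENDATIONS[priority]
    except KeyError:
        valid = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(
            f"Unknown priority {priority!r}; expected one of: {valid}"
        ) from None
```

In practice a project often has several priorities at once, so a lookup like this is a starting point for discussion rather than a substitute for testing each model on representative footage.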

Emerging Trends and Future Trajectories in AI Video Creation

The AI video generation market is evolving at an accelerated pace, with several key trends shaping its future:

- Resolution and Duration: While Seedance 2.0 offers native 2K output with hints of 4K upscaling, the industry is clearly moving towards native 4K and even higher resolutions, alongside capabilities for significantly longer video durations (up to several minutes).

- Enhanced Multimodal Integration: The Quad-modal encoder of Seedance 2.0 exemplifies the increasing sophistication of combining text, images, video, and audio inputs for richer, more nuanced generation 2. Future iterations will likely see deeper contextual understanding and more seamless integration across all modalities.

- Precision Control and Customization: The shift from basic text prompts to "director-level" control, as seen in Seedance 2.0's Director Mode and keyframe controls, signifies a move towards greater user command over every aspect of video generation, including camera trajectories, lighting, and performance.

- Ethical Considerations and Transparency: Concerns regarding privacy (as raised during Seedance 2.0's initial rollout 21) and the need for content authenticity will drive further development in watermarking (e.g., Sora 2's C2PA watermarking 12) and ethical AI frameworks.

Long-Term Impact on Professional Workflows and Creative Possibilities

The advent of advanced AI video models like Seedance 2.0 marks a pivotal shift in content production. The long-term impact will be transformative:

- Streamlined Professional Workflows: Tasks that once required days for a full creative team, such as pre-visualization, storyboarding (Seedance 2.0's Narrative Planner), and rapid prototyping, can now be accomplished in minutes. This accelerates creative cycles and allows professionals to focus on higher-level creative direction.

- Reduced Content Production Costs: AI-driven generation fosters a "zero-cost" production model for initial iterations and specific content types, democratizing access to high-quality video creation and enabling smaller teams or independent creators to compete with larger studios.

- Expanded Creative Possibilities: Tools like Seedance 2.0's "Creative Template Replication" allow for the rapid exploration of diverse visual effects, film techniques, and editing styles. This opens new avenues for personalized content, experimental art forms, and efficient content localization through multi-language lip-sync capabilities 22. The ability to modify existing videos without regeneration also adds unprecedented flexibility in post-production.

In conclusion, the AI video generation market of 2026 is characterized by robust competition and continuous innovation. Seedance 2.0, with its emphasis on multimodal control, identity persistence, and integrated audio-visual generation, is well-positioned for professional and commercial applications demanding precision and efficiency. However, the diverse strengths of its competitors like Sora 2, Veo 3/3.1, and Runway Gen-4.5 underscore a future where specialized AI tools will cater to an ever-widening spectrum of creative needs, fundamentally reshaping the landscape of video production.