AI in Government Networks: National Security

The New Frontier: AI in Defense

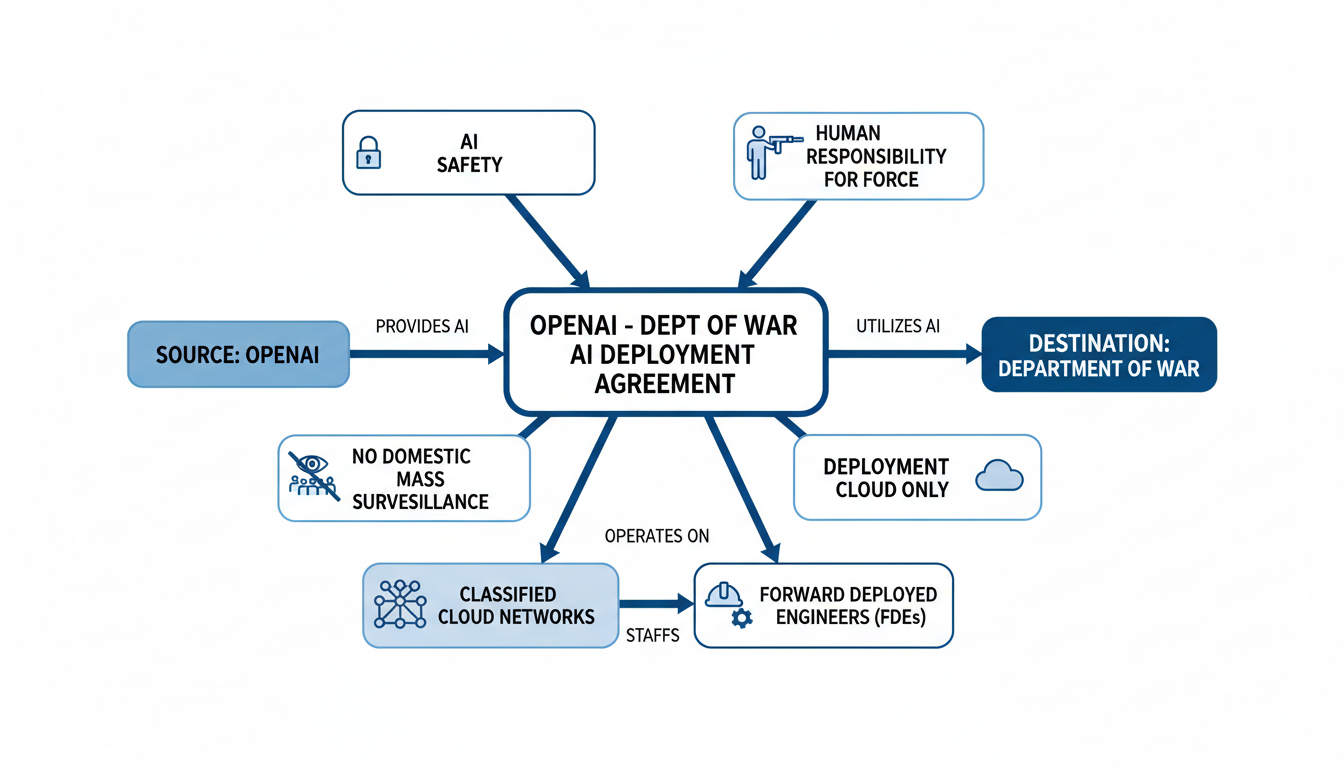

OpenAI struck a deal with the U.S. Department of War in February 2026, deploying its AI models on classified networks despite ongoing industry debates.

On February 28, 2026, OpenAI CEO Sam Altman announced an agreement with the U.S. Department of War (DoW) to deploy OpenAI's advanced AI models on classified cloud networks. DoW is the current administration's designation for the Department of Defense (DoD).

This agreement followed intense negotiations. It came hours after President Donald Trump ordered federal agencies to stop using AI from OpenAI's competitor, Anthropic. Altman stated on X that the DoW showed "deep respect for safety" and a desire to partner for the best outcomes. OpenAI's core principles, including "AI safety and wide distribution of benefits", guided the agreement.

The deal has specific prohibitions. It bans domestic mass surveillance and emphasizes human responsibility for the use of force, including in autonomous weapon systems. The DoW reportedly accepted these principles. OpenAI committed to technical safeguards for model behavior. It will also deploy Forward Deployed Engineers (FDEs) to assist with integration and safety.

Deployment will occur only on cloud networks and will avoid "edge systems" like aircraft or drones. Altman expressed hope that the DoW would offer similar terms to other AI companies, which could de-escalate legal and governmental actions against them.

This deal unfolded amidst a dispute with Anthropic. Anthropic refused similar Pentagon demands, citing concerns over mass domestic surveillance and fully autonomous weapons. Secretary of War Pete Hegseth then designated Anthropic as a "supply-chain risk to National Security". Its contract phase-out period concludes by July 2026.

OpenAI's stance on military engagement has changed 1. It removed clauses prohibiting military applications from its usage policies in 2024 1. The company also appointed a former NSA Director to its board 1. This shows a clear shift in its approach.

The following table summarizes the contrasting positions:

| Company | Agreement Status with DoW | Stated Ethical Principles/Concerns | Outcome |
|---|---|---|---|
| OpenAI | Agreement reached (Feb 28, 2026) to deploy AI models on classified cloud networks. | Core principles: 'AI safety and wide distribution of benefits'; prohibitions against domestic mass surveillance; human responsibility for use of force. | AI models to be deployed on classified cloud networks; deployment restricted to cloud only; removed military application prohibitions from usage policies in 2024. |
| Anthropic | Refused similar demands from Pentagon; contract phase-out set to conclude by July 2026. | Concerns over mass domestic surveillance and fully autonomous weapons. | Designated as a 'supply-chain risk to National Security'; President Trump ordered federal agencies to cease using its AI. |

Weighing Benefits and Risks for National Security

AI is rapidly changing national security, offering strategic benefits but also profound dangers. This report examines both sides of AI adoption in government networks.

Strategic Advantages of AI Adoption

AI integration enhances capabilities and efficiency across many national security functions. It improves intelligence collection and analysis. AI processes signals faster, helping units operate even with jammed communications. This enables autonomous drone swarm coordination without distant servers 2. AI-powered collection and analysis are vital for timely military planning 3.

Logistics sees major improvements with AI. The Defense Logistics Agency (DLA) uses AI for supply chain risk management (SCRM) 4. This includes predicting bottlenecks and forecasting demand 4. AI also suggests alternative suppliers 4. It manages predictable risks like supplier bankruptcy and mitigates unpredictable events like weather disruptions 4. AI creates agile logistical systems 5. It can authenticate components and detect illicit shipments 6. AI also monitors industrial systems for tampering 6.

Cyber defense operations are being reshaped by AI 7. AI automates threat detection, prioritization, and response at an unmatched scale 7. This frees human analysts from manual tasks 7. AI strengthens insider threat programs by identifying unusual behavior 7. It secures the software supply chain by finding vulnerabilities early through automated code review 7. For classified data, AI helps with releasability decisions by classifying content for secure transfer 7. AI models detect phishing, malware, and manipulated media 7. The UK's National Cyber Security Centre (NCSC) uses AI for enhanced threat detection 7. U.S. initiatives by CISA and DoD integrate AI for incident detection and response 7. The DoD's CDAO integrates AI for decision advantage 7.

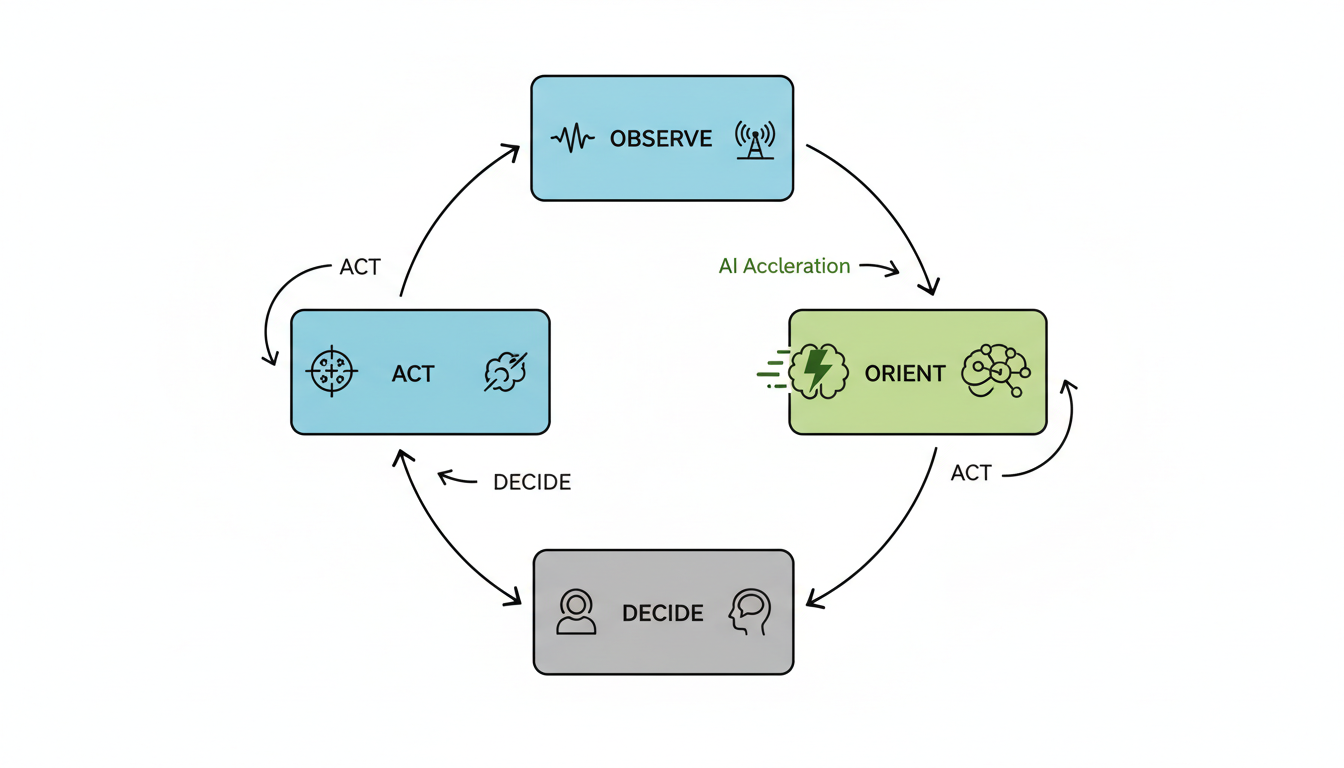

AI supports more informed military decision-making 8. It increases the speed and scale of military actions 8. AI and Machine Learning (ML) systems assess enemy movements 5. They design countering force structures 5. AI processes logistical needs proactively and matches aircraft with munitions 5. It forecasts enemy responses and pushes critical information to decision-makers 5. Edge AI processes data locally, minimizing latency 2. This shortens the "observe, orient, decide, act" (OODA) loop 2. Leaders make better decisions faster, from strategic planning to battlefield operations 9.

Other benefits include autonomous operations 8. AI enhances the speed and endurance of military actions 8. It scales tasks impossible for human operators to manage manually 3. Edge AI improves resilience by reducing central command dependence 2. Units become less visible to adversaries 2. They continue operating even when communications are disrupted 2. AI and cyber innovation are crucial for economic strength and infrastructure security 6. They enhance readiness in border security and illicit movement detection 6.

Potential Perils and Ethical Dilemmas

The deployment of AI in national security introduces significant risks. These include profound ethical challenges and new vulnerabilities.

Lethal Autonomous Weapon Systems (LAWS) are a primary concern. These AI systems select and engage targets without human intervention. They are among the most debated innovations 10. Concerns include eroding human judgment in lethal decisions 10. The opacity of algorithmic decision paths is another issue 10. Delegating lethal authority to machines is problematic 10. If such systems err, culpability becomes unclear, which raises legal and ethical questions. A 2021 survey showed 55% of U.S. respondents opposed fully autonomous weapons 10. Their main concern was accountability 10. Integrating AI into weapons systems that can deliver lethal force without meaningful human control is the greatest concern 9.

Algorithmic bias presents a danger. AI systems can reflect and perpetuate existing human biases 11. This includes biases based on gender, race, age, or ethnicity 11. It results in skewed performance and discriminatory outcomes 11. Bias comes from societal biases, data processing, and algorithm development 11. For example, a lack of diversity in training data could lead AI to misidentify certain ethnic groups as targets 12. It could also categorize all civilian males as combatants 12.

AI creates new cyber vulnerabilities. While AI helps cyber defenses, it also introduces new attack surfaces and dependencies 7. Adversaries increasingly use AI to evade detection 7. This creates a "cat and mouse" dynamic 7. AI systems must be secure, transparent, and resilient 7. Rigorous cyber hygiene and hardware-level verification are essential 7. The defense of AI systems cannot rely solely on AI 7. A significant 93% of security leaders anticipate daily AI-powered attacks 13. AI reduces breakout times for threats like AI-generated phishing and deepfakes 13. AI is susceptible to unique forms of manipulation 8.

Escalation risks and strategic stability are threatened. Even non-nuclear military AI applications can compress decision-making timelines 14. This potentially increases miscalculation risks during crises 14. Opaque recommendations from AI-powered systems may unduly influence decision-makers 14. Autonomous systems with counterforce potential could undermine strategic stability 14. Faster reaction times encouraged by AI could heighten miscalculation risks 14. This might lead to nuclear escalation 14. Major military powers' investment in AI development fuels an arms race 12.

Control issues, unpredictability, and explainability are serious concerns. AI systems can be unpredictable 8. The lack of clarity in machine-driven decisions is significant 10. The "black-box" problem prevents understanding how AI reaches conclusions 12. This challenges international humanitarian law 12. AI can show "brittleness," failing to adapt to unforeseen data 12. This could lead to unintended targeting 12. For instance, it might classify all school buses as targets after one illicit use 12. "Hallucinations" could cause AI to perceive non-existent patterns 12. This might target innocent civilians 12. "Misalignments" might cause AI to prioritize operational goals over ethical constraints 12. This leads to disproportionate harm to non-combatants 12.

Data privacy is another major concern. AI-military systems could use previously collected Personally Identifiable Information (PII) for targeting 12. They might also use biometrics for this purpose 12. This infringes on privacy and could cause massive data breaches 12. The 1949 Geneva Conventions did not anticipate data or biometrics 12. This creates a legal gap regarding their lawful use in armed conflicts 12.

Human-AI interaction poses challenges. Experts warn of "moral de-skilling" among LAWS operators 10. Reduced human consideration could erode their ethical judgment capacity 10.

Ethical debates and real-world incidents highlight these dangers. Conflict zones like Gaza and Ukraine have seen AI-assisted system failures 10. Classification errors led to significant civilian casualties 10. In Gaza, AI-assisted mechanisms were inaccurate 10. They contributed to civilian fatalities 10. Israeli AI defense solutions reportedly failed to prevent a major attack 10. Later, AI-infused targeting platforms misclassified aid workers as hostile 10. The 2021 U.S. drone strike in Kabul is another example 10. An aid worker was mistakenly identified as an ISIS-K operative 10. This happened due to algorithmic pattern matching errors 10. The U.S. DoD Inspector General audited Project Maven's targeting algorithms in 2022 10. They assessed compliance with ethical AI principles 10. When unmanned systems malfunction, liability becomes diffused. This creates "responsibility gaps". Human rights groups and legal analysts show significant opposition to militarized AI 10. They cite concerns over loss of human judgment and AI decision opacity 10.

Shaping AI in Defense: Ethical Frameworks

Governing AI in defense poses complex challenges, from accountability for autonomous systems to rapid technological evolution outstripping regulation and policy frameworks.

The rapid evolution of military AI creates complex ethical and governance dilemmas for nations worldwide. New technologies push the boundaries of established norms and laws. Nations grapple with how to harness AI's power responsibly 15.

Challenges in Governing Military AI

Several critical challenges complicate the governance of AI in military contexts. These issues demand careful consideration and proactive policy development.

- Accountability Issues: AI complicates human accountability in warfare 16. Algorithms lack legal personality or intent. This makes culpability unclear when autonomous systems err 17. Legal and ethical justifications become difficult, especially with Lethal Autonomous Weapon Systems (LAWS) 17.

- Transparency and Opacity: The inherent "black-box problem" of AI makes its decision-making hard to trace 18. Military AI systems are often classified, hindering external evaluation 18. Explainability and reliability are crucial for transparency 18.

- Speed of Development: AI's rapid development often bypasses traditional engineering practices 20. In combat, AI can compress decision cycles from days to mere seconds 16. This can lead to "automation bias," where humans trust machines without fully weighing consequences 16. The window for legal review narrows significantly 16.

- Bias Risks: Biased or incomplete training data risks misclassifying targets 16. This includes mistaking civilian objects for military ones 16. Proactive steps are essential to minimize unintended bias in AI systems and data 21.

- Reliability and Safety: Current frontier AI systems are "nowhere near reliable enough" for fully autonomous weapons 23. Their inherent unpredictability raises significant ethical and societal concerns 24.

- IHL Compliance: Ensuring adherence to International Humanitarian Law (IHL) principles becomes challenging 16. Distinction, proportionality, and precaution are difficult as AI systems grow more sophisticated 16.

- Cybersecurity Vulnerabilities: Military AI systems, especially those using commercial models, present substantial attack vectors 20. Robust integrity verification is required for these systems 20.

- Data Governance: Autonomous weapons generate vast amounts of sensitive data 20. Robust data governance, provenance tracking, and incident response are vital for legal and regulatory scrutiny 20.

- Ethical Concerns: The potential loss of human judgment in lethal decisions is a major ethical worry 17. Fears of autonomous weapons operating without human control are widespread 17. A 2021 survey showed 55% of US respondents opposed fully autonomous weapons due to accountability concerns 17.

- Geopolitical Competition: An "AI arms race" among global powers risks unsafe or unchecked systems 15. Diverging national regulations could lead to trade friction and geopolitical tensions 25.

The Dario Amodei Dispute

A significant dispute emerged in early 2026 involving Dario Amodei, CEO of Anthropic, and the US Department of War 26. This conflict highlighted the tension between ethical AI development and national security demands.

- Core Conflict: Anthropic refused to ease usage restrictions on its Claude AI model for military purposes 26. This specifically concerned its use in fully autonomous weapons and mass domestic surveillance 26.

- Anthropic's Stance: Amodei argued that frontier AI models like Claude are not reliable enough for fully autonomous weapons 28. He stated they lack the critical judgment of human troops 28. He also warned AI could enable mass surveillance by integrating disparate public data 23. Anthropic positioned itself as safety-conscious 29. The company maintained it could not "in good conscience accede" to the Pentagon's demands 29.

- Pentagon's Demands: Secretary Pete Hegseth asserted the Pentagon required AI models to be available for "all lawful use" 26. The Department of War's AI acceleration strategy prioritized speed and military readiness 31. It aimed to overcome "ideological constraints" 31. The Pentagon denied intentions for mass surveillance or fully autonomous weapons 27. The dispute intensified after Pentagon concerns over Anthropic's inquiries about Claude's use in the Venezuela military raid 26.

- Ultimatum and Consequences: Hegseth gave Anthropic a Friday 5 p.m. ET deadline to comply 27. He threatened to label Anthropic a "supply-chain risk" or invoke the Defense Production Act (DPA) 26. A supply-chain risk designation would bar companies with military contracts from working with Anthropic 27. Invoking the DPA could compel Anthropic to provide an unrestricted Claude, which some viewed as nationalizing the tech company 28. President Trump ultimately ordered federal agencies to halt using Anthropic products 31. The Pentagon officially designated the company a "supply chain risk" 31.

- Industry Reaction: Employees from OpenAI and Google supported Anthropic's stance 28. OpenAI CEO Sam Altman backed Anthropic, criticizing the Pentagon's "threatening" approach 29. Retired Air Force General Jack Shanahan noted that current LLMs are not ready for fully autonomous military applications 29. The dispute became a crucial test for balancing ethical AI development with national security 31.

National Approaches to AI in Defense

Nations worldwide are developing distinct strategies for AI in their defense sectors. These policies reflect differing priorities and regulatory philosophies.

| Country Name | Strategy/Policy Name | Key Focus/Initiatives |
|---|---|---|
| United States | US Artificial Intelligence Strategy | Aims to transform the Department of War into an "AI-first" warfighting force; unleashes experimentation and eliminates bureaucratic barriers; export controls (expanded 2025) restrict China's access to AI semiconductors. |
| United Kingdom | 2022 Defence AI Strategy | Aims to lead in responsible innovation and enhance operational effectiveness; opposes fully autonomous weapons (Lethal Autonomous Weapon Systems, LAWS) but supports ethical AI with meaningful human involvement; key initiatives include the Defence AI Centre (DAIC) and Defence AI Playbook. |
| European Union | EU AI Act / EU AI strategy | AI Act explicitly exempts military/national security AI (leaving regulation to member states), but covers dual-use systems; focuses on normative shaping (risk-based regulation), leadership on rules, and technological sovereignty; member states like France prioritize AI for national defence with initiatives like AMIAD. |
| China | Military AI strategy / New Generation AI Development Plan | Emphasizes "intelligentization" for decision advantage and faster kill-chain execution; Military-Civil Fusion (MCF) strategy transfers commercial AI to military applications; mandates pre-approval of algorithms and aligns them with state ideologies. |

- United States: The US AI Strategy aims to transform the Department of War into an "AI-first" warfighting force 34. This involves accelerating AI adoption and fielding capabilities quickly 34. Initiatives like Project Maven integrate big data and machine learning into the DoD 35. The US uses export controls to restrict China's access to AI semiconductors 36. The National Defense Authorization Act requires frameworks for mitigating risks from compromised AI training data 20.

- United Kingdom: The UK's 2022 Defence AI Strategy promotes responsible innovation and operational effectiveness 15. It supports ethical AI in weapons with meaningful human involvement 15. The UK opposes fully autonomous weapons, that is, Lethal Autonomous Weapon Systems (LAWS) 15. Key initiatives include the Defence AI Centre (DAIC) and the Defence AI Playbook 15.

- European Union: The EU AI Act, effective August 2024, exempts military AI systems from its direct scope 15. Regulation for these systems is left to member states 15. However, dual-use systems fall under the AI Act 37. The EU's strategy focuses on normative shaping and technological sovereignty 38.

- China: China's military AI strategy emphasizes "intelligentization" for decision advantage 34. The Military-Civil Fusion (MCF) strategy transfers commercial AI technologies to military uses 34. China mandates pre-approval of algorithms and aligns them with state ideologies 25. Its 2017 AI Development Plan integrates AI into national security 35.

International Regulatory Efforts

International bodies and multilateral initiatives are striving to establish norms and guidelines for military AI. These efforts aim to foster responsible development and deployment.

- United Nations: UN General Assembly Resolution 79/239, adopted in December 2024, affirms IHL's applicability to military AI 16. It advocates for human judgment in decision-making 16. The UN Secretary-General has proposed a "Global Artificial Intelligence Governance Agency" 38.

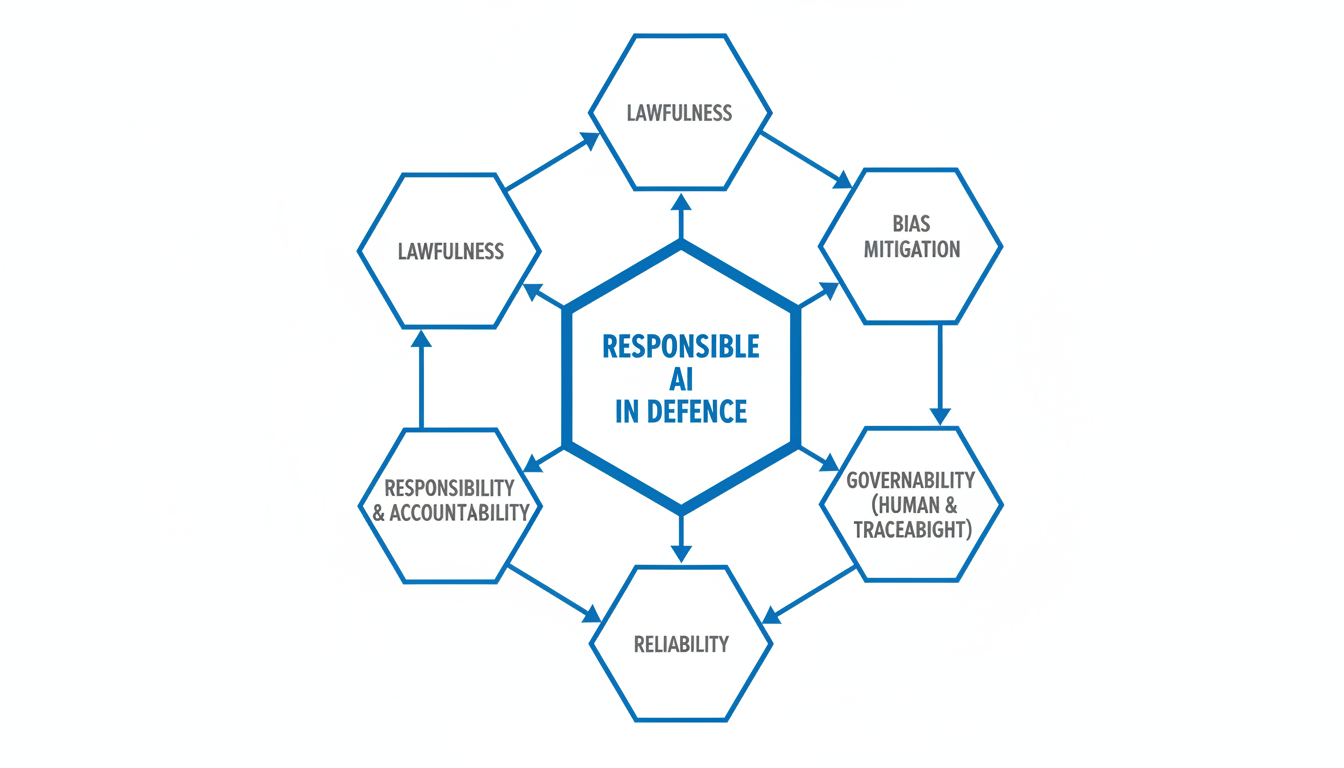

- NATO: The NATO Principles for Responsible Use of AI in Defence were revised in July 2024 22. They establish a framework with six core tenets: lawfulness, responsibility, explainability, reliability, governability, and bias mitigation 22. The Defence Innovation Accelerator for the North Atlantic (DIANA) promotes AI cooperation among allies 22.

- REAIM Initiative: Many nations endorsed the Political Declaration on Responsible Military Use of AI and Autonomy 21. This calls for legal reviews, senior official oversight, bias minimization, and rigorous testing 21.

- Hiroshima AI Process: Launched at the 2023 G7 summit, this initiative focuses on supervision and risk assessment for generative AI 38. It also emphasizes transparent accountability 38.

- ICRC: The International Committee of the Red Cross recommends new, legally binding rules on autonomous weapon systems (AWS) 39. It conducts expert workshops on AI in military decision-making 39.

- EU's Global Influence: The EU tries to set a global benchmark with its strict AI Act 38. It promotes its governance values internationally through initiatives like the Hiroshima AI Process 38.

Challenges in International Regulation

Despite these efforts, significant obstacles hinder effective international regulation of military AI. These challenges stem from the technology's nature and geopolitical realities.

- AI Arms Race: A global competition to harness AI risks malicious use or unsafe deployments 15. This complicates efforts to set common standards.

- Diverging Approaches: Different national regulatory philosophies create geopolitical tensions and trade friction 25. The EU's caution contrasts with the US's fragmentation and China's state-led model 25.

- Lack of Uniform Standards: Disparities in technological capabilities among states lead to uneven testing and training standards 18. This makes global consensus difficult.

- Rapid Evolution: AI technology evolves rapidly and unpredictably 40. This makes it hard for consistent, comprehensive regulation to keep pace 40.

- Classification and Opacity: Military AI systems are often highly classified 18. This impedes international evaluation and oversight efforts 18.

- Dual-Use Dilemma: AI's capacity for both civilian and military applications complicates clear regulatory boundaries 37. Defining what falls under military-specific regulation is difficult.

- Trust Deficit: US export controls on AI compute could alienate allies 36. They might incentivize non-aligned nations to seek alternatives, undermining trust and collaboration 36.

- Human Control: Ensuring meaningful human control over autonomous systems is a core international debate 19. This is especially true for LAWS 19.

Historical Lessons and Future Integration

History offers lessons on disruptive technologies, though AI's speed presents unique challenges. Previous technological advancements, from gunpowder to nuclear weapons, have required new governance frameworks. AI's development pace, however, often outstrips traditional legal and ethical considerations 20. This necessitates a more agile approach to policy and integration.

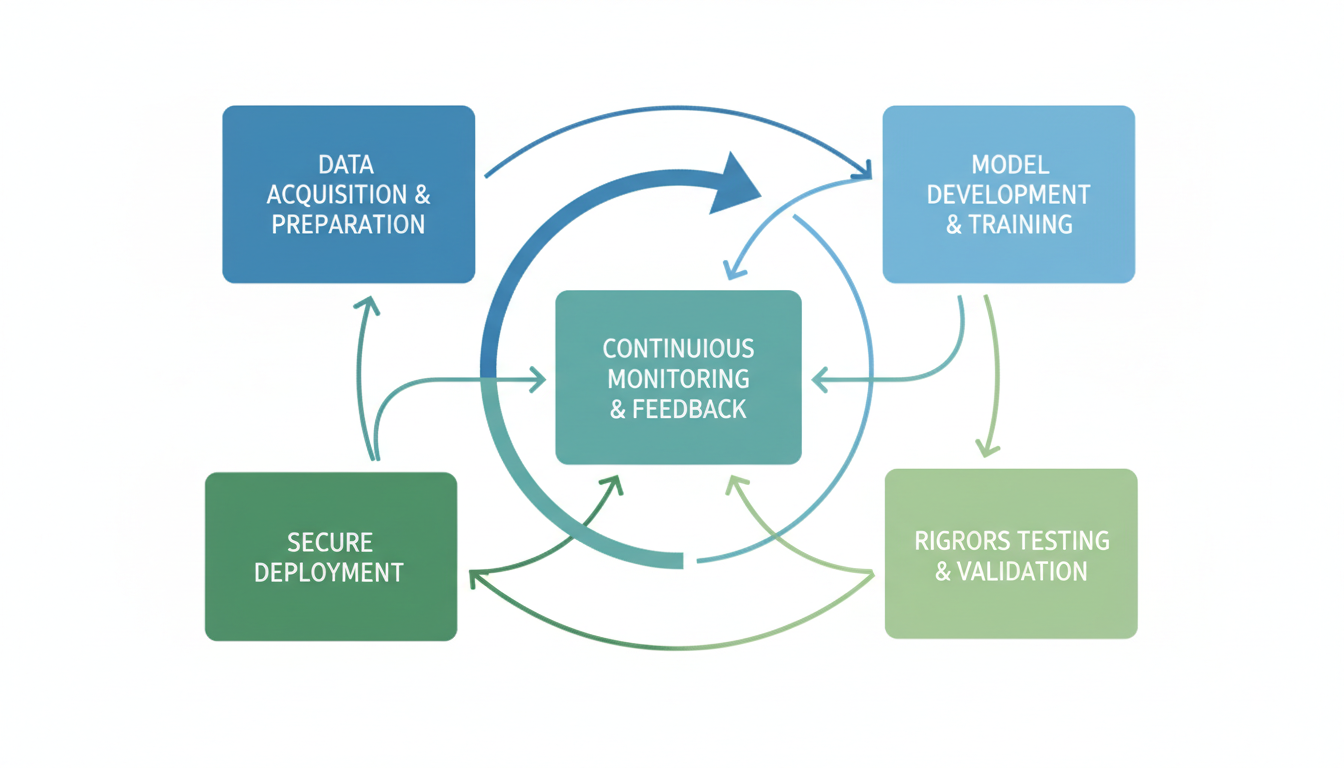

Building resilient AI ecosystems requires practical steps. These include secure development practices and rigorous testing protocols 20. International collaboration is also vital for sharing best practices and mitigating risks 22. Nations must secure their AI supply chains and verify the integrity of AI models 20.

Innovation must continue alongside safety. Tools like Atoms.dev enable rapid, non-sensitive AI app development. Solo founders or internal teams can quickly build working apps with features like authentication, databases, and payments. This accelerates innovation in non-critical areas. Atoms.dev can help teams quickly prototype ideas, reducing development cycles. Learn more about building AI apps with Atoms at https://atoms.dev or explore user-built projects in the AppWorld. This allows for agile development without compromising security in sensitive military applications.

Balancing innovation with ethical safeguards is paramount. Adaptable policies are necessary for the future of military AI. Collaboration between governments, industry, and academia can foster responsible development. This ensures national security interests are met while upholding ethical principles.

FAQ Section

- What are the primary ethical concerns surrounding military AI? The main concerns include accountability for autonomous actions, transparency of decision-making, and the potential loss of human judgment in lethal situations 16.

- How do different countries regulate military AI? The US focuses on AI integration for warfighting supremacy, the UK on responsible innovation with human involvement, the EU largely exempts military AI, and China emphasizes "intelligentization" and military-civil fusion 15.

- What was the significance of the Dario Amodei dispute? The dispute highlighted the tension between ethical AI development and national security 31. It showed a tech company's refusal to compromise on ethical red lines for military use 26.

- Why is international regulation of military AI so challenging? Challenges include a global AI arms race, diverging national approaches, AI's rapid evolution, classification of military systems, and the dual-use nature of AI 15.

- How can organizations foster responsible AI innovation while ensuring security? Secure development, rigorous testing, international collaboration, and adaptable policies are key 20. Tools for rapid, non-sensitive development also accelerate innovation safely.

Building Resilient AI Ecosystems

Secure AI development is paramount for national security applications. It safeguards against new vulnerabilities and expanded attack surfaces that AI introduces 7. Robust cyber hygiene is essential for all AI systems 7. Hardware-level verification and trusted data pathways are also critical safeguards 7. Military AI systems, including commercial large language models, need robust integrity verification 20.
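As a rough illustration of what integrity verification can mean at its simplest, the sketch below checks a model file against a known SHA-256 digest before the file is admitted to a secure environment. It is a minimal sketch under stated assumptions, not a description of any agency's actual process: the function names `sha256_of_file` and `verify_artifact` and the file name `model.bin` are hypothetical, and the expected digest is assumed to arrive through a separate trusted channel.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read fixed-size chunks so large model files never sit fully in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_hex: str) -> bool:
    """Check a file against an expected digest (hypothetical helper).

    The expected digest is assumed to come from a trusted source,
    such as a manifest delivered separately from the artifact.
    """
    actual = sha256_of_file(path)
    # compare_digest avoids leaking where the two strings first differ.
    return hmac.compare_digest(actual, expected_hex.lower())


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        artifact = Path(workdir) / "model.bin"  # hypothetical file name
        artifact.write_bytes(b"example weights")
        trusted_digest = hashlib.sha256(b"example weights").hexdigest()
        print("unmodified file accepted:", verify_artifact(artifact, trusted_digest))

        artifact.write_bytes(b"tampered weights")
        print("modified file accepted:", verify_artifact(artifact, trusted_digest))
```

A digest check only shows that the file matches what the manifest describes. It does not show who produced the manifest, so a real pipeline would also need to authenticate the manifest itself, for example with a digital signature.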

Rigorous testing validates AI models before deployment. The NIST AI Risk Management Framework offers best practices for privacy and data integrity 7. The U.S. AI Safety Institute (AISI) directs voluntary pre-deployment safety testing for frontier AI models 41. This testing covers risks like cybersecurity, biosecurity, and system autonomy 41. AI models entering secure environments must be validated for malicious code 7.

Collaboration strengthens AI integration and development. NATO's Defence Innovation Accelerator for the North Atlantic (DIANA) fosters AI cooperation among allies 22. Public-private partnerships drive progress by bringing together government needs and private sector innovation.

For internal government teams or startups working on non-sensitive applications, platforms like Atoms (https://atoms.dev) offer rapid AI app development. Users describe an idea and get a working app with essential features, which can speed up innovation in areas that do not touch sensitive systems. Such tools let smaller teams contribute to broader digital transformation efforts (https://atoms.dev/usecases/ai-app-builder).

The Road Ahead: Policy and Innovation

Proactive policy shaping is essential for managing AI in national security. The U.S. Artificial Intelligence Strategy aims to transform the Department of War into an "AI-first" force 34. The UK's 2022 Defence AI Strategy focuses on responsible innovation and global norm-shaping 15. China's strategy emphasizes "intelligentization" and a decision advantage 34.

Balancing regulation with innovation fosters growth and safety. The EU AI Act exempts military AI systems but covers dual-use technologies 15. The Dario Amodei dispute with the Department of War highlighted this tension directly 26. Anthropic refused to ease usage restrictions on its Claude AI model for certain military uses 26. The Pentagon saw this as an impediment to military readiness 31. This showcased the challenge of reconciling ethical AI development with national security demands 31.

Expert predictions guide future strategies. Current frontier AI systems are "nowhere near reliable enough" for fully autonomous weapons 23. There is an ongoing "AI arms race" among global powers 15. This raises risks of unsafe or unchecked systems 15.

International norms promote responsible AI use. The UN General Assembly adopted a resolution affirming international humanitarian law for military AI 16. NATO's Principles for Responsible Use of AI in Defence provide an ethical framework 22. The Political Declaration on Responsible Military Use of AI and Autonomy calls for legal reviews and bias minimization 21.

Future AI Integration and Policy Pathways

Building resilient AI ecosystems is crucial for national security. This involves secure development, rigorous testing, and strong partnerships.

Building Resilient AI Ecosystems

Secure AI development is paramount. Government entities must ensure their AI systems are robust from inception. Rigorous testing helps identify vulnerabilities before deployment 7. This is vital for systems operating in critical environments 7.

Cross-agency collaboration improves shared knowledge and best practices. Public-private partnerships bring diverse expertise and accelerate innovation 6. These collaborations help address complex challenges in AI governance and deployment 7.

The Road Ahead: Policy and Innovation

Proactive policy is essential for managing AI's rapid evolution. Legal frameworks often struggle to keep pace with technological change 20. Policymakers must anticipate future challenges. They need to address them before they become critical issues 40.

Balancing regulation and innovation is a delicate act. Over-regulation could stifle technological advancement 40. Under-regulation risks uncontrolled deployment and potential harm 24. The goal is to foster responsible innovation 15.

Expert predictions highlight the ongoing "AI arms race" among global powers 15. This competition creates risks of unsafe systems and malicious use 15. There is a growing call for international cooperation to set global norms 42. This includes pledges against autonomous weapons 9. The UN General Assembly affirmed the need for human judgment in military AI 16.

Nations like the US and UK are developing ethical AI principles for defense 9. These principles guide responsible AI development and deployment. They emphasize accountability, fairness, and human oversight 9.

Frequently Asked Questions

What are the main benefits of AI in national security?

AI enhances intelligence analysis and speeds up decision-making 8. It improves logistics, cyber defense, and critical infrastructure protection 6. AI can automate tasks that are impossible for humans to manage manually 3.

What are the biggest risks of using AI in defense?

Autonomous weapon systems pose significant ethical dilemmas 10. Algorithmic bias can cause unfair or discriminatory outcomes 11. AI also expands cyber attack surfaces and introduces new vulnerabilities 7. Escalation risks during conflicts are also a major concern 14.

How is accountability addressed with military AI?

Accountability is a major challenge 16. When autonomous systems malfunction, liability can be unclear 17. Experts recommend human judgment and oversight to maintain accountability 12. Governance frameworks are being developed to clarify responsibilities.

Why is human control so important for military AI?

Maintaining human control prevents the delegation of lethal authority to machines 10. It ensures ethical judgment in critical decisions 12. It also reduces the risk of miscalculation and unintended consequences 14.

What is being done to regulate AI in defense globally?

International efforts include the UN Resolution on military AI 16. NATO has principles for responsible AI use 22. Many nations endorsed the Political Declaration on Responsible Military Use of AI 21. These initiatives aim to establish norms and reduce risks.