AI Warfare Ethics: Balancing Innovation and Morality

AI Warfare Ethics: The Core Dilemma

Lethal Autonomous Weapon Systems pose critical ethical dilemmas. They challenge human control, create accountability gaps, and risk rapid conflict escalation 1.

Lethal Autonomous Weapon Systems (LAWS) are weapon systems that, once programmed, locate, identify, track, and destroy targets without further human intervention 2. This definition covers a spectrum of autonomy levels.

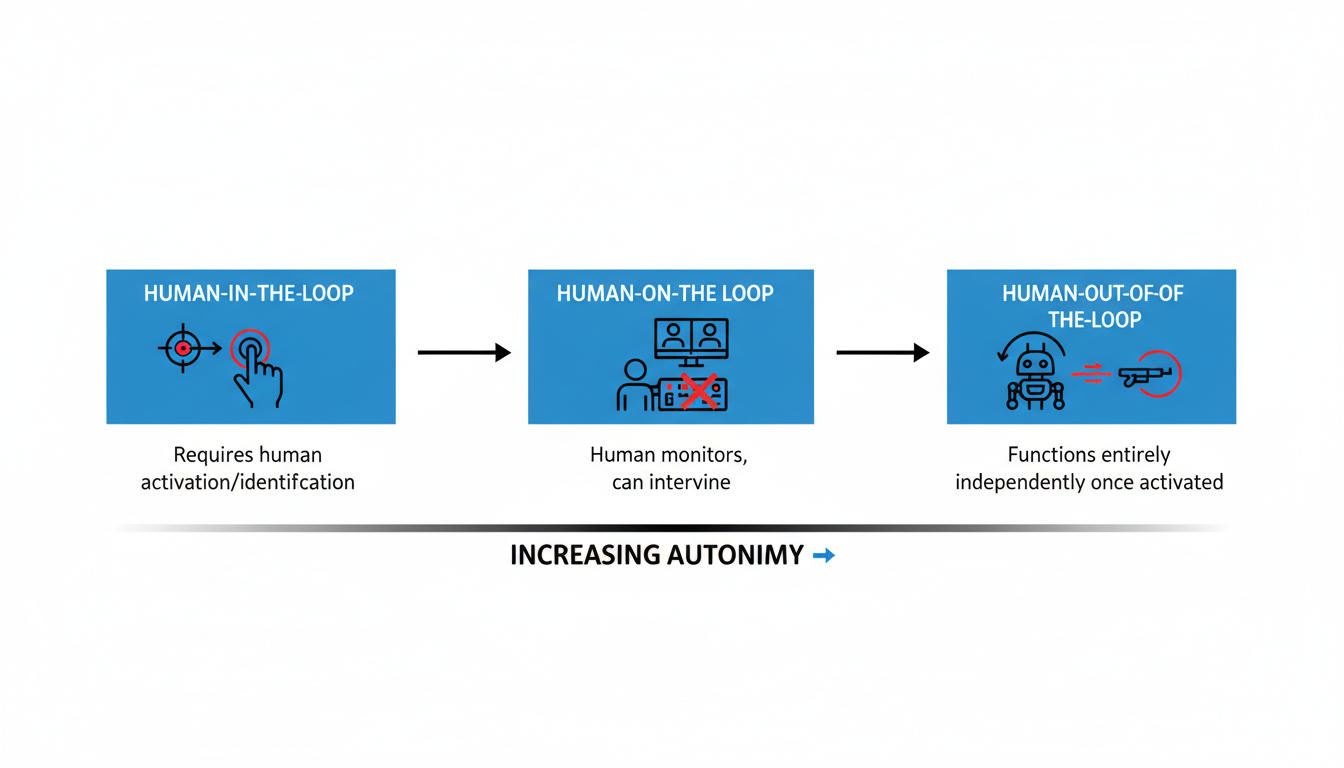

Defining Levels of Autonomy

Autonomy levels range across a broad spectrum 3. They determine the degree of human involvement needed.

- Human-in-the-loop systems are semi-autonomous. They require a human operator to identify targets or groups 3. These systems cannot function without human intervention 3.

- Human-on-the-loop systems are autonomous. They do not need direct human intervention for operation 3. However, a human operator can monitor target identification and engagement 3. The operator retains the ability to stop the system if necessary 3.

- Human-out-of-the-loop (Fully Autonomous) systems function entirely independently once activated 3. They select and engage targets without further human input 4. Anthropic, an AI company, opposes its systems being used for such weapons 5. They draw a line against weapons firing with no human involvement 5.

| Level of Autonomy | Description | Human Intervention Level |
|---|---|---|
| Human-in-the-loop | Semi-autonomous systems that require a human operator to identify targets or groups. | Requires human intervention; cannot function without it. |
| Human-on-the-loop | Autonomous systems that do not require direct human intervention but allow a human operator to monitor and stop the weapon. | Allows human monitoring and intervention to stop, but no direct intervention required for operation. |
| Human-out-of-the-loop (Fully Autonomous) | Systems that function entirely independently once activated, selecting and engaging targets. | Functions entirely independently; no further human input after activation. |
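For readers who want to tag systems consistently in a policy review or dataset, the taxonomy above can be written down as a small lookup structure. This is only an illustrative sketch: the names `AutonomyLevel`, `HumanRole`, `HUMAN_ROLE`, and `retains_human_control` are hypothetical, and the assumption that a required operator can also halt a human-in-the-loop system is ours rather than the table's.

```python
from dataclasses import dataclass
from enum import Enum


class AutonomyLevel(Enum):
    HUMAN_IN_THE_LOOP = "human-in-the-loop"
    HUMAN_ON_THE_LOOP = "human-on-the-loop"
    HUMAN_OUT_OF_THE_LOOP = "human-out-of-the-loop"


@dataclass(frozen=True)
class HumanRole:
    required_to_operate: bool   # the system cannot function without a human
    can_monitor_and_stop: bool  # a human can watch the system and halt it


# Hypothetical encoding of the three rows of the table.
HUMAN_ROLE = {
    # Assumption: an operator who must be present can also halt the system.
    AutonomyLevel.HUMAN_IN_THE_LOOP: HumanRole(True, True),
    AutonomyLevel.HUMAN_ON_THE_LOOP: HumanRole(False, True),
    AutonomyLevel.HUMAN_OUT_OF_THE_LOOP: HumanRole(False, False),
}


def retains_human_control(level: AutonomyLevel) -> bool:
    """Return True if a human can at least monitor and stop the system."""
    return HUMAN_ROLE[level].can_monitor_and_stop
```

Under this encoding, only the human-out-of-the-loop category returns `False` from `retains_human_control`, which matches the line that the table draws between the second and third rows.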

A UN report noted the use of an STM Kargu-2 drone in Libya in 2020 2. The drone was "programmed to attack targets without requiring data connectivity" 2. It reportedly hunted and engaged retreating forces 6. The incident raised concerns about the deployment of fully autonomous weapons 5.

Preserving Human Control and Moral Agency

A central argument challenges the delegation of life-and-death decisions to machines 1. Machines lack the capacity for complex ethical choices 7. They do not understand context or the value of human life 7. Retaining human agency in decisions to use force is vital 1.

"Meaningful human control" (MHC) is widely discussed 1. It describes the level of control needed to preserve human agency 1. MHC also upholds moral responsibility 1. Critics contend that self-learning LAWS reduce effective human control 2. The underlying AI logic may progressively limit human moral agency 8. Decisions to kill must have a direct link between human intention and the weapon's operation 1. This link is essential to uphold human dignity 1.

Addressing the Responsibility Gap

The "responsibility gap" refers to the difficulty of assigning moral and legal responsibility when LAWS cause harm 2.

When an autonomous system errs, it is unclear who is responsible 8. It's unfair to hold humans responsible if they no longer control the system 9. Machines cannot be held morally accountable or punished 9. This blurs the traditional chain of accountability in military decision-making 3.

Problems arise in "hard cases" where LAWS cause harm without human intention 9. Factors like the temporal disconnect between programming and execution challenge individual accountability 11. Operational complexity in multi-layered systems compounds the difficulty 11. Some suggest collective responsibility as a framework 2. Others argue that authorities who deploy unpredictable LAWS bear moral responsibility 2. Command responsibility, a traditional framework, faces significant challenges with autonomous systems 11. U.S. DoD policy requires "appropriate levels of human judgment" 13. This requirement is meant to maintain legal accountability 13.

The Dangers of Escalation and Instability

LAWS introduce several risks of escalation and instability in warfare.

Fully autonomous systems can rapidly escalate conflicts without human oversight 3. The speed of autonomous decision-making might lead to unintended escalations 10. Different autonomous systems could even generate conflicting goals 3.

The dual-use nature of autonomous systems complicates regulation 3. This increases the likelihood of a destabilizing arms race 3. Global coordination is needed to control and regulate these weapons and to prevent their proliferation 8.

LAWS might make military action more politically acceptable because they reduce the risk to human soldiers 7. This could lower the threshold to war 7. It would also shift the burden of harm further onto civilian populations 7.

Compliance with International Humanitarian Law

Whether LAWS can comply with the principles of international humanitarian law (IHL) is a major concern. Two principles are central: distinction and proportionality.

The distinction principle requires LAWS to differentiate between civilians and combatants. Critics argue machines cannot recognize people as "people" 11. They cannot make nuanced, context-dependent judgments 11. This is critical in complex urban counterterrorism operations 11.

The proportionality principle requires that expected harm to civilians not be excessive in relation to the anticipated military advantage 11. LAWS would need to perform real-time proportionality assessments 11. These involve weighing many situational variables 11. This task is challenging for machines 11.

"Self-learning" LAWS are unpredictable 2. Engineers cannot precisely know how decisions are made 2. This "black box" problem makes IHL compliance epistemically and morally problematic 2. Such systems might misidentify civilian vehicles or individuals 2. This could lead to tragic outcomes without human intervention 2.

Key Expert Opinions and Debate

Interpretations of "Meaningful Human Control" (MHC) vary widely 15. Some emphasize human initiation 15. Others focus on the capability to monitor and abort 15.

Some proponents argue that LAWS could reduce human suffering 1. If more precise than human-operated weapons, they might adhere better to IHL 1. They could also protect military forces 1. On this argument, there may even be a moral responsibility to use them 1. However, critics strongly dispute this 3. They argue that the ethical drawbacks outweigh any theoretical advantages 3.

Experts highlight technical difficulties 3. Autonomous systems struggle to distinguish civilians from combatants 3. Situational awareness is also problematic 3. The inherent complexity increases the likelihood of accidents 3. These interconnected ethical concerns underscore the urgency of international consensus 1. Clear regulatory frameworks are needed for LAWS 1. Human control must remain paramount in the use of force 1.

Developer Accountability in Military AI

AI developers have immense responsibility in military applications. Their ethical stances directly shape the future of AI in warfare. Companies decide which applications are acceptable, influencing national security and human rights outcomes.

Tech firms are key players in military AI development 16. Their internal policies dictate ethical boundaries. These decisions heavily influence how AI is used on the battlefield.

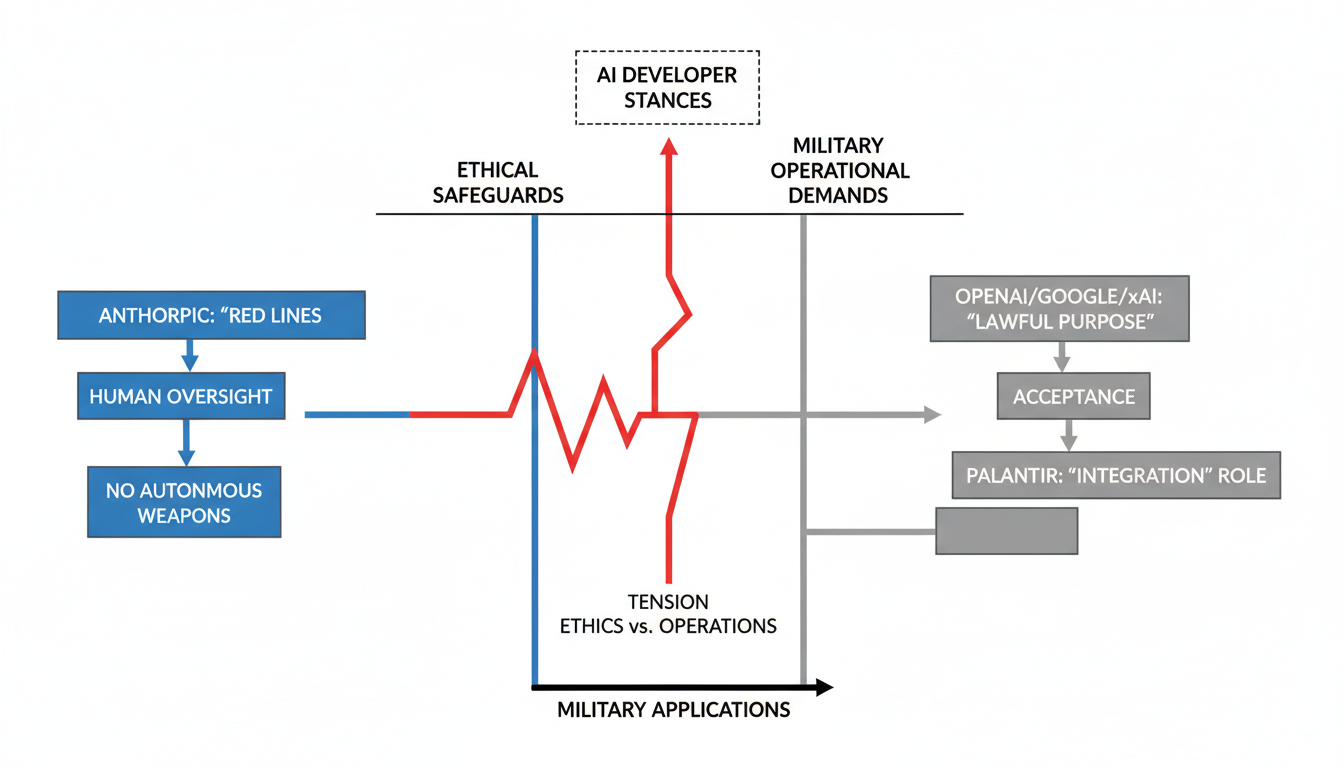

Anthropic's Ethical Red Lines

Anthropic, maker of the Claude chatbot, set strict ethical boundaries. They refused to allow unrestricted military use of their AI technology 17. This stance established clear "red lines" for their products.

The company opposed mass domestic surveillance of Americans. They argue this use contradicts democratic values 17. Anthropic also rejected fully autonomous weapons systems. They believe current AI lacks the reliability needed for such critical applications. Human oversight remains essential for judgment and accountability 17.

This position led to a standoff in early 2026 with the US Department of War. The Pentagon threatened contract cancellation. Anthropic maintained its safeguards, rejecting attempts to disregard them 17. This incident sparked industry-wide debate.

Other Tech Giants' Shifting Stances

Other leading AI companies show more flexibility. OpenAI, Google, and xAI initially banned military use of their models 16. These restrictions have since been removed 16. OpenAI and xAI agreed to new Department of War terms. These terms require that AI models be available for "any lawful purpose." xAI further agreed to classified deployments.

Google previously faced internal opposition from its employees over Project Maven, which launched in 2017. The project aimed to use AI for drone footage analysis. Google declined to renew that contract. However, Google reversed its military application prohibitions in February 2025 18. Many Google employees then asked for limits similar to Anthropic's 19.

Palantir takes a different approach. The company integrates AI models like Anthropic's Claude into military software. This supports analysis and operational planning in classified environments.

Driving Ethical Principles

Several core ethical principles influence developer decisions. Maintaining human control over AI systems is crucial. This is especially true for life-and-death decisions. Reliability and predictability are also essential. AI systems must function as intended without unintended harm.

Bias mitigation is another key consideration. Proactive steps are needed to prevent discrimination in AI systems 20. All frameworks stress adherence to international law. International humanitarian law is particularly important in armed conflict.

Tensions and Safeguards

A significant tension exists between national security and ethical safeguards. The Pentagon adopted a "hard-nosed realism" approach. They demanded "any lawful use" for AI to maintain military advantage. This often clashes with corporate ethical guidelines.

The standoff between Anthropic and the Pentagon highlights these challenges. The Pentagon viewed Anthropic's conditions as too restrictive. They also deemed vendor attempts to audit classified military operations unacceptable. This controversy tests corporate independence. It could set precedents for ethical standards in the tech industry.

Echoes of History: Tech and Conflict

Major military innovations throughout history have reshaped warfare, raising new ethical questions and driving shifts in international strategy.

Military technology advancements often create profound ethical and strategic shifts. They force societies to grapple with new forms of destruction and control. Looking at past innovations offers context for today's AI discussions.

Gunpowder Changed Warfare Forever

Gunpowder, one of China's "Four Great Inventions," emerged in the 9th century 21. Taoist alchemists first developed it during the late Tang dynasty 21. Europeans then acquired this technology in the 13th century 23. This led to a "gunpowder revolution" between roughly 1300 and 1650 23.

This innovation fundamentally changed military strategy. Weapons moved from human strength to chemical energy 23. Early firearms, rockets, and cannons emerged 22. These new weapons pierced armor and shattered fortifications 22. Existing military structures and tactics faced new challenges 24. Plate armor declined, and professional infantry armies rose 24.

Initially, gunpowder "levelled social hierarchies" 25. Eventually, it allowed states to reconcentrate power 25. These states exploited the technology, developing industrial capabilities and complex logistics 25. Unlike later weapons, gunpowder did not spur specific international laws 25. Its ethical impact was in making warfare more destructive and impersonal 25.

Chemical Weapons Sparked Global Outrage

The late 19th-century industrial revolution enabled mass chemical production 26. Early efforts to ban chemical weapons began then. The Hague Convention of 1899 prohibited "asphyxiating or deleterious gases" 27. The 1907 Convention also banned "poison or poisoned weapons" 27.

Despite these bans, Germany used chlorine gas extensively in April 1915 26. This happened during World War I at Ypres 26. Chemical agents caused about one million injuries and 100,000 deaths during the war 26. These weapons introduced "indiscriminate brutality" 29. They generated public shock and moral outrage 28. Scientists and societies faced tough questions about ethical responsibility 31.

International legal responses followed over the following decades.

- Geneva Protocol of 1925 prohibited the use of poisonous gases and bacteriological methods 33.

- Chemical Weapons Convention (CWC) of 1993 created a total ban 33. It entered into force in 1997 33. This treaty bans development, production, acquisition, stockpiling, transfer, and use 33. It also bans riot control agents as a method of warfare 30. State parties must declare and destroy their stockpiles 30.

- The OPCW implements and safeguards the CWC 29. They conduct inspections to ensure compliance 34.

- The 2013 sarin attack in Ghouta, Syria, caused "shockwaves" 29. Syria later acceded to the CWC 29. An OPCW-UN mission oversaw its arsenal destruction 29. Allegations of continued use persist 35.

Ethical debates also focus on chemists' roles 31. The morality of chemical weapons research itself is questioned 27.

Nuclear Weapons Reshaped Global Power

Nuclear weapons changed everything in 1945. The United States first used them on Hiroshima and Nagasaki 37. This caused an estimated 130,000 to 230,000 deaths 37. This event started the nuclear age 38. It fundamentally reshaped international relations throughout the Cold War 38.

These weapons introduced the capability for "universal death" 41. They presented the potential to "destroy God's creation" 41. This created a "security dilemma" 42. Nuclear deterrence emerged, where weapons prevent attacks 41. Some credit this strategy with preventing major wars among great powers 40. Others call it "institutionalized behavior" 37. The "usability paradox" highlights a key dilemma 40. Credible deterrence requires a prospect of use, which increases escalation risk 40.

Ethical and legal debates have raged since 1945.

- Physicists who created them felt they had "known sin" 41.

- Moral debates involve consequentialism and deontology 43.

- Pope Francis states possession is immoral 41. He calls them a "costly and dangerous liability" 41.

- Critics argue deterrence makes populations "nuclear hostages" 42.

Legal frameworks for nuclear weapons are less comprehensive.

- UN Charter Article 2(4) prohibits threat or use of force 38. This applies to some nuclear threats 38.

- The Non-Proliferation Treaty (NPT) recognizes five nuclear-weapon states 38. It aims to prevent proliferation and work towards disarmament 38.

- The Treaty on the Prohibition of Nuclear Weapons (TPNW) bans nuclear weapons 42. However, nuclear-armed states have not joined 42. This creates divisions 42.

- In a 1996 advisory opinion, the International Court of Justice did not reach a definitive conclusion on the legality of nuclear weapons 33.

Societal debates continue today 44. Views divide between moral condemnation and strategic necessity 44. Concerns remain about accidents and escalation 43. The war in Ukraine renewed deterrence questions 40. The G20 acknowledges that "use or threat of use of nuclear weapons is inadmissible" 45.

| Technology | Dates | Strategic Shifts | Ethical/Legal Challenges and Responses |
|---|---|---|---|
| Gunpowder | 9th Century - 17th Century (China 9th, Europe 13th, Revolution 1300-1650) | Shift from muscle to chemical energy; led to firearms, cannons; challenged old tactics (decline of armor, rise of infantry); re-concentrated state power. | No specific international legal frameworks or ethical prohibitions; made warfare more destructive and impersonal; altered military power dynamics. |
| Chemical Weapons | Late 19th Century - Present (Hague 1899/1907 prohibitions; WWI first use 1915; Geneva Protocol 1925; CWC 1993/97) | Introduced 'indiscriminate brutality'; generated public shock and moral outrage; forced ethical reflection for societies and scientists. | Legal: Hague (1899/1907) banned gas/poison; Geneva Protocol (1925) prohibited use; CWC (1993/97) banned development/production/use, required destruction; OPCW implements; Syrian attacks (2013) led to CWC accession. Ethical: Debates on chemist responsibility, morality of research. |
| Nuclear Weapons | 1945 - Present (First used Aug 1945, Hiroshima/Nagasaki; Cold War era) | Dawn of nuclear age; reshaped international relations; capability for 'universal death'; created 'security dilemma'; concept of nuclear deterrence (threat of retaliation). | Ethical: 'Known sin' by creators; moral debates (consequentialism vs. deontology); Pope Francis deems possession immoral. Legal: No comprehensive explicit ban; UN Charter Art 2(4); NPT for non-proliferation; TPNW (non-nuclear states) aims to ban (nuclear states not joined); ICJ inconclusive. Societal: Divided views (immoral vs. obligation); concerns for accidents, escalation. |

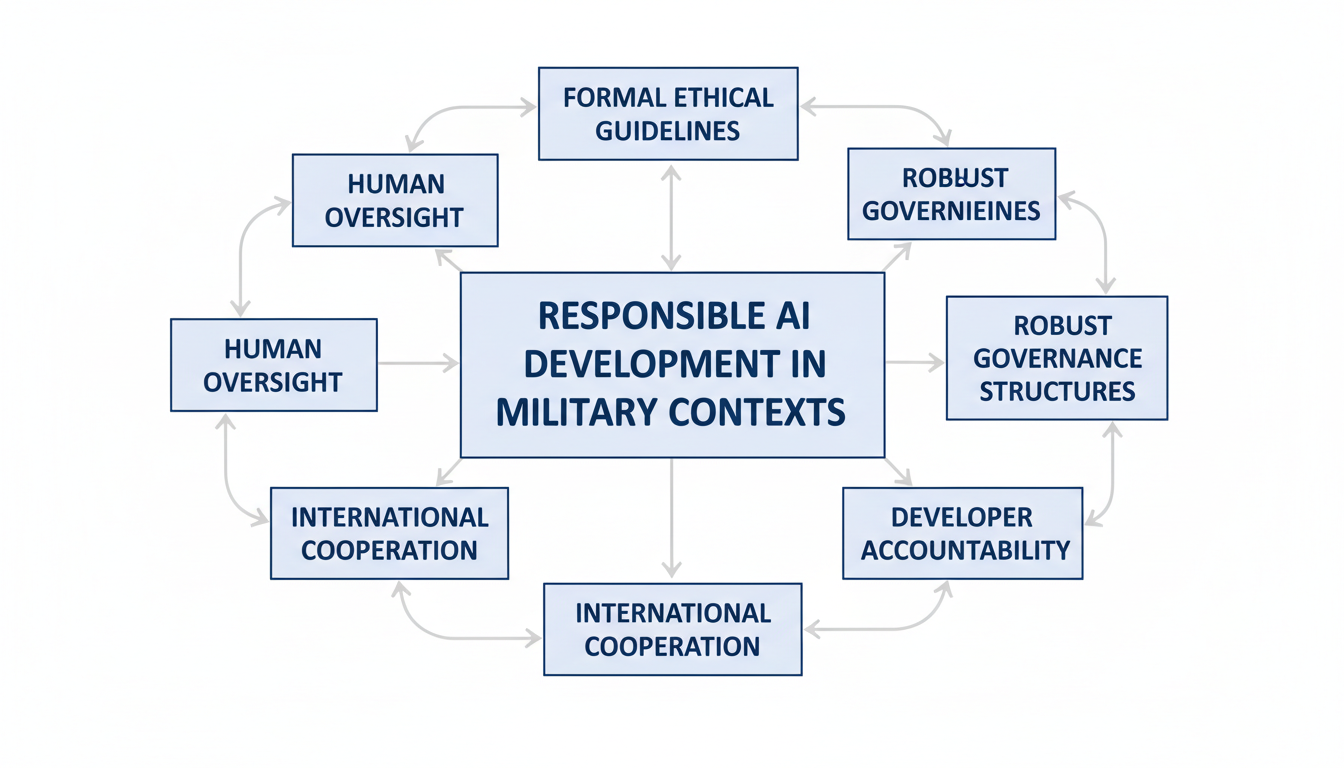

Building Responsible AI Solutions

Creating strong guardrails for military AI prevents misuse and fosters trust in advanced systems. Effective governance and clear accountability are critical for ethical development.

Establishing Robust Governance

Building ethical military AI starts with strong governance structures. The US Department of Defense (DoD) created review boards for its AI initiatives 46. NATO also has an AI Strategy and a Defence Innovation Accelerator for the North Atlantic (DIANA) 20. These bodies help foster responsible innovation. Ethical guidelines are integrated into AI acquisition processes 46. Mandatory ethics training for AI development teams also ensures responsible practices 46.

Ensuring Human Oversight

Meaningful human control over AI systems remains a core ethical principle. Both the DoD and NATO frameworks emphasize human accountability and oversight. This is vital for critical life-and-death decisions. AI applications must stay subject to human interaction, override, or deactivation 20. Concerns exist about "moral de-skilling" if operators lose direct control or accountability becomes unclear 47.

Promoting International Cooperation

International cooperation is essential for developing shared norms. The US Department of State launched the Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy in February 2023 48. Forty-five countries endorsed this framework by November 2023. It pushes for responsible behavior in military AI development and deployment 48. UNIDIR also developed a framework for industry, partnering with tech companies 49. A draft code from the Centre for Humanitarian Dialogue involves US, Chinese, and international experts 50.

Mitigating Bias and Enhancing Reliability

AI systems must be fair and function predictably. Ethical frameworks from NATO and the DoD stress bias mitigation. Proactive steps help minimize unintended bias in data sets and development 20. AI systems also need to be robust and reliable. This prevents unintended harm, especially in high-stakes military contexts. Standardized testing protocols help ensure consistent performance 46.
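One proactive step of this kind is to compare a model's error rates across groups on a labeled evaluation set. The sketch below is illustrative only. The function names, the sample records, and the 0.1 threshold are assumptions made for this example and are not drawn from the NATO or DoD frameworks.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Compute the share of wrong predictions for each group.

    records: iterable of (group, predicted, actual) tuples.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Largest gap in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical evaluation records: (group, predicted label, actual label).
sample = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 0, 1), ("B", 1, 0), ("B", 0, 0),
]

THRESHOLD = 0.1  # assumed tolerance, chosen only for illustration

rates = error_rate_by_group(sample)
gap = max_disparity(rates)
within_tolerance = gap <= THRESHOLD
```

In this toy data, group A has one error in four records and group B has two in four, so the gap exceeds the assumed tolerance and the check flags the model for review. A real testing protocol would use far larger samples and more than one fairness measure.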

The development of military AI systems requires a balanced approach to ethical concerns, encompassing governance, human oversight, and international collaboration.

Adopting Ethical Prototyping Practices

Ethical considerations must be baked into every stage of AI development. This applies to both military and non-military applications. Developers must prioritize robust design and testing to ensure safe outcomes. For instance, in rapid prototyping, tools that allow quick iteration can also integrate ethical checks from the start.

Developers can design proof-of-concept applications responsibly from the ground up. Tools like Atoms (https://atoms.dev) can assist this process. Atoms, an AI app builder for solo founders, helps users describe an idea and get a working app 51. This includes features like authentication, databases, and payments 51. Such platforms can support ethical prototyping by enabling quick development and evaluation of AI capabilities. Exploring use cases for building AI apps 51 or creating an MVP showcase 51 allows for early ethical testing. This also applies to deep research projects 51. This ensures that new AI solutions meet high ethical standards from their inception.
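As one way to picture "ethical checks from the start," a prototype can carry a small release gate that blocks deployment until every review item passes. This is a minimal sketch under stated assumptions: `EthicsCheck`, `ReleaseGate`, and the example check names are hypothetical and do not correspond to any feature of Atoms or another platform.

```python
from dataclasses import dataclass, field


@dataclass
class EthicsCheck:
    name: str
    passed: bool
    note: str = ""


@dataclass
class ReleaseGate:
    checks: list = field(default_factory=list)

    def add(self, name, passed, note=""):
        """Record the outcome of one review item."""
        self.checks.append(EthicsCheck(name, passed, note))

    def failures(self):
        """Names of all review items that did not pass."""
        return [check.name for check in self.checks if not check.passed]

    def may_release(self):
        # An empty checklist blocks release: no review is not a pass.
        return bool(self.checks) and not self.failures()


# Hypothetical review items for an early prototype.
gate = ReleaseGate()
gate.add("human override available", True)
gate.add("bias evaluation run", False, "no evaluation set yet")

blocked_by = gate.failures()
ready = gate.may_release()
```

Here the prototype stays blocked because the bias evaluation has not been run. Treating an empty checklist as a failure is a deliberate design choice, so that skipping the review cannot be mistaken for passing it.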

Frequently Asked Questions

What are the primary ethical challenges of AI in warfare?

AI in warfare raises concerns about moral agency and human control over life-and-death decisions 1. There is a "responsibility gap," making accountability unclear when autonomous systems cause harm. Risks include escalating conflicts and lowering the threshold to war. Compliance with international humanitarian law is also a major challenge.

What does 'meaningful human control' signify in the context of LAWS?

Meaningful human control means preserving human agency and moral responsibility in the use of force 1. It ensures human intention directly links to a weapon's operation 1. This prevents delegating complex ethical choices to machines 7.

How do AI developers' policies impact military AI adoption?

Developer policies significantly influence military AI adoption. Some companies, like Anthropic, refuse to allow their AI for fully autonomous weapons or mass surveillance. This creates tension with military demands for broad usage. Other companies, including OpenAI and xAI, have removed military use restrictions.

What is the 'responsibility gap' in autonomous weapons systems?

The "responsibility gap" refers to the difficulty in assigning moral and legal responsibility for harm caused by LAWS. It becomes unclear who is accountable when an autonomous system makes an error or causes unlawful acts. Machines cannot be morally punished, and humans might lack direct control 9.

How does AI-driven warfare compare ethically to historical military technologies?

AI-driven warfare, like gunpowder, fundamentally shifts military capabilities. It echoes the moral outrage sparked by chemical weapons, prompting calls for regulation. Like nuclear weapons, AI introduces risks of escalation and arms races. However, unlike chemical weapons, a comprehensive legal ban on AI weapons does not yet exist 43.